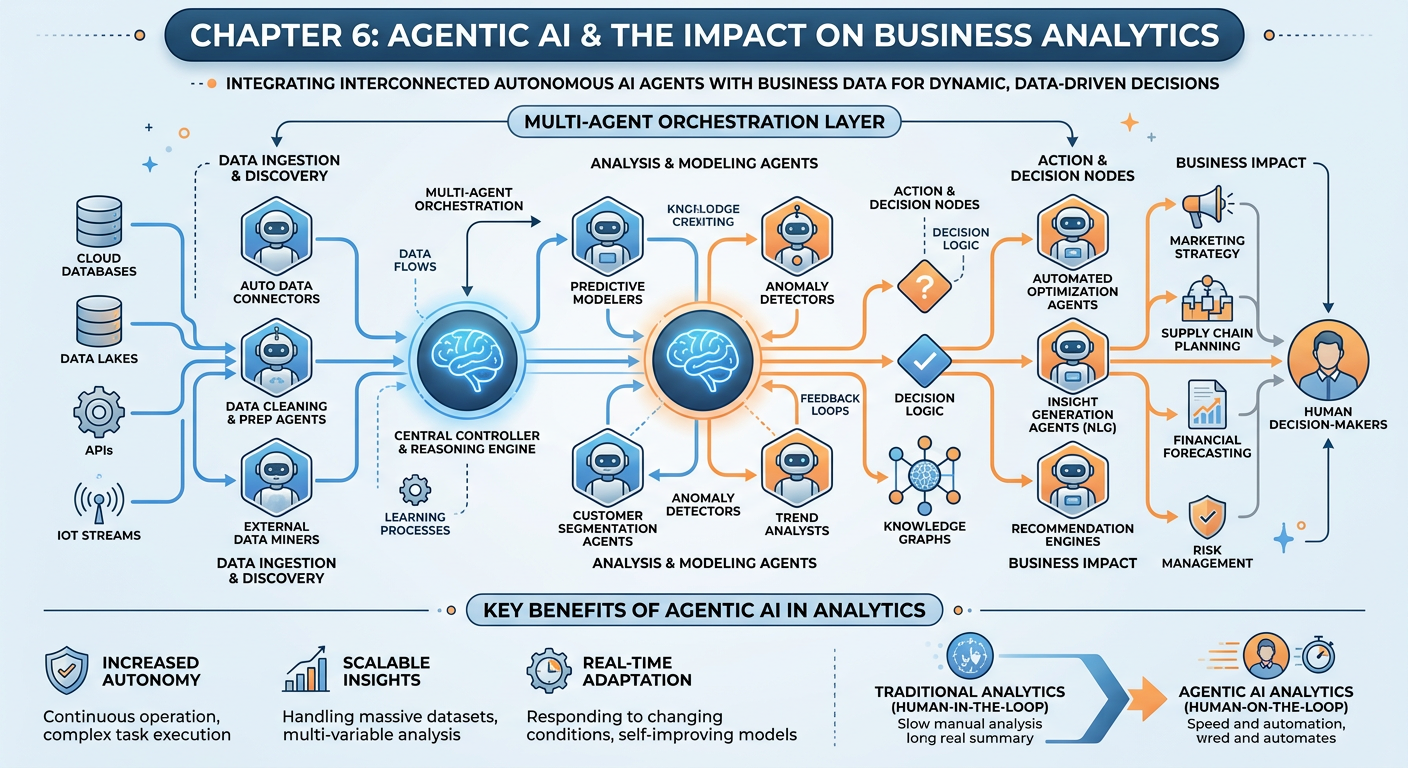

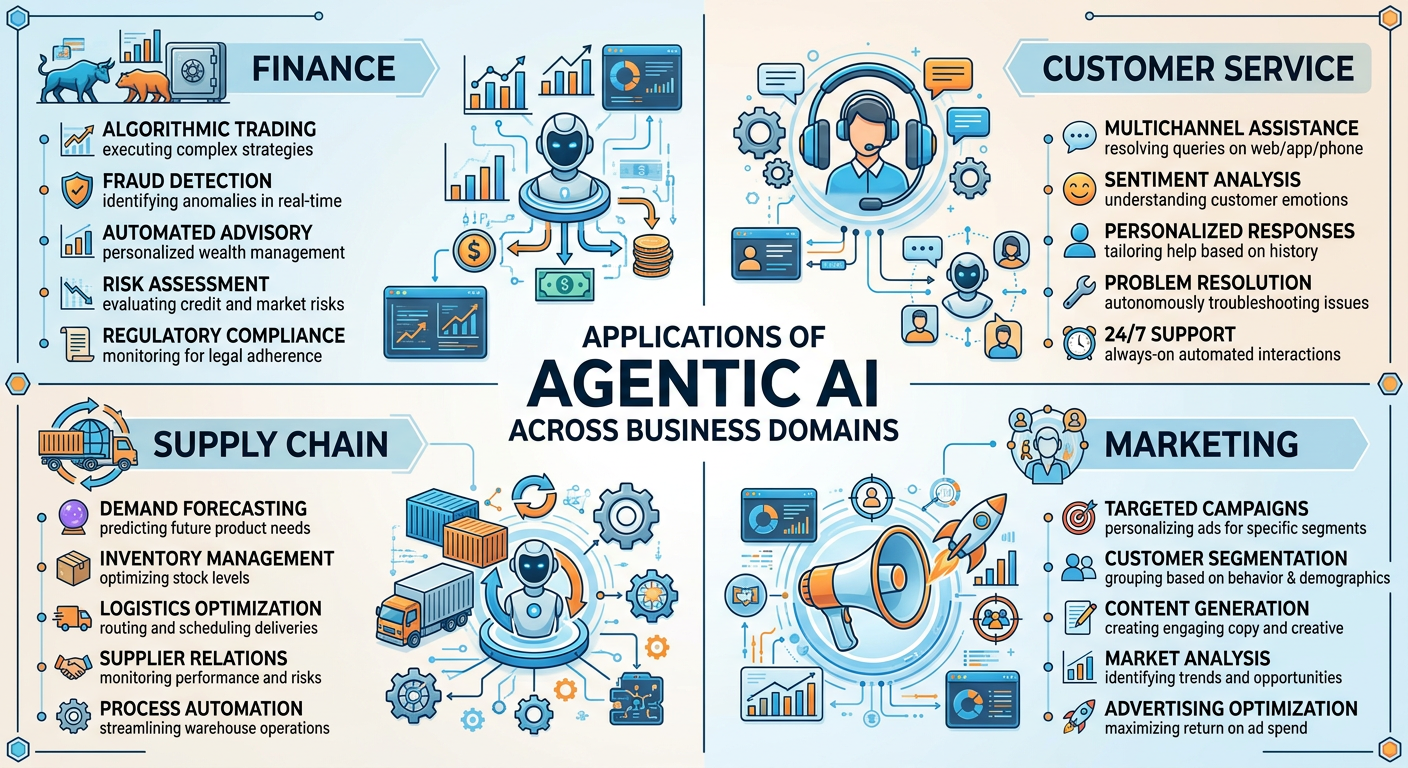

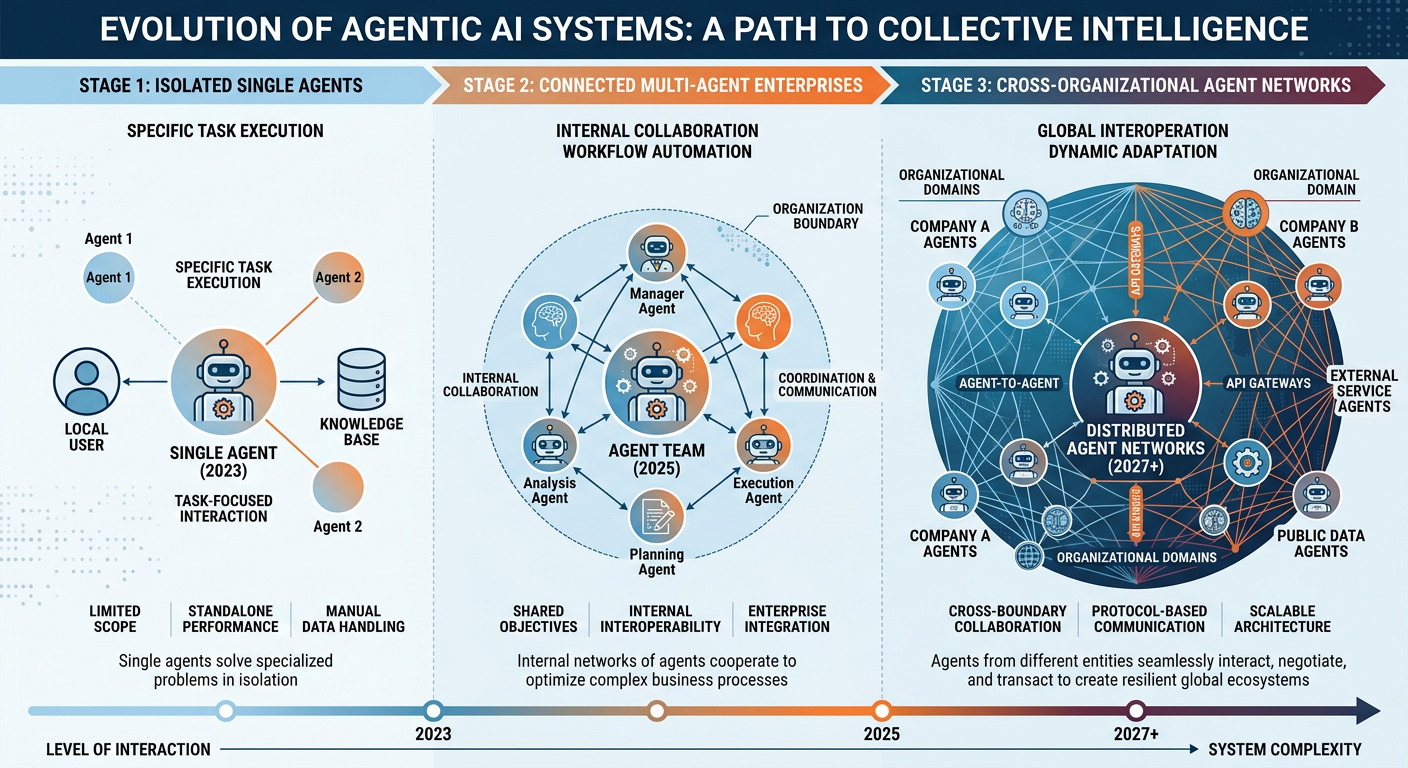

Figure 1:Explainer Infographic: Chapter 6: Agentic AI and the Impact on Business Analytics.

1Introduction: The Dawn of the Autonomous Enterprise¶

Imagine waking up on a Monday morning to discover that your company’s quarterly forecasting report has already been generated, cross-validated, and distributed to every department head — not by a team of analysts working through the weekend, but by an interconnected set of AI agents that perceived shifts in market data on Friday afternoon, autonomously coordinated with each other to retrieve relevant datasets, ran competing predictive models, resolved contradictions through negotiation, and composed a coherent executive summary — all without a single human instruction after the initial system configuration.

This is not science fiction. This is Agentic AI, and it is fundamentally reshaping the landscape of business analytics in ways that are both thrilling and, to some, deeply unsettling.

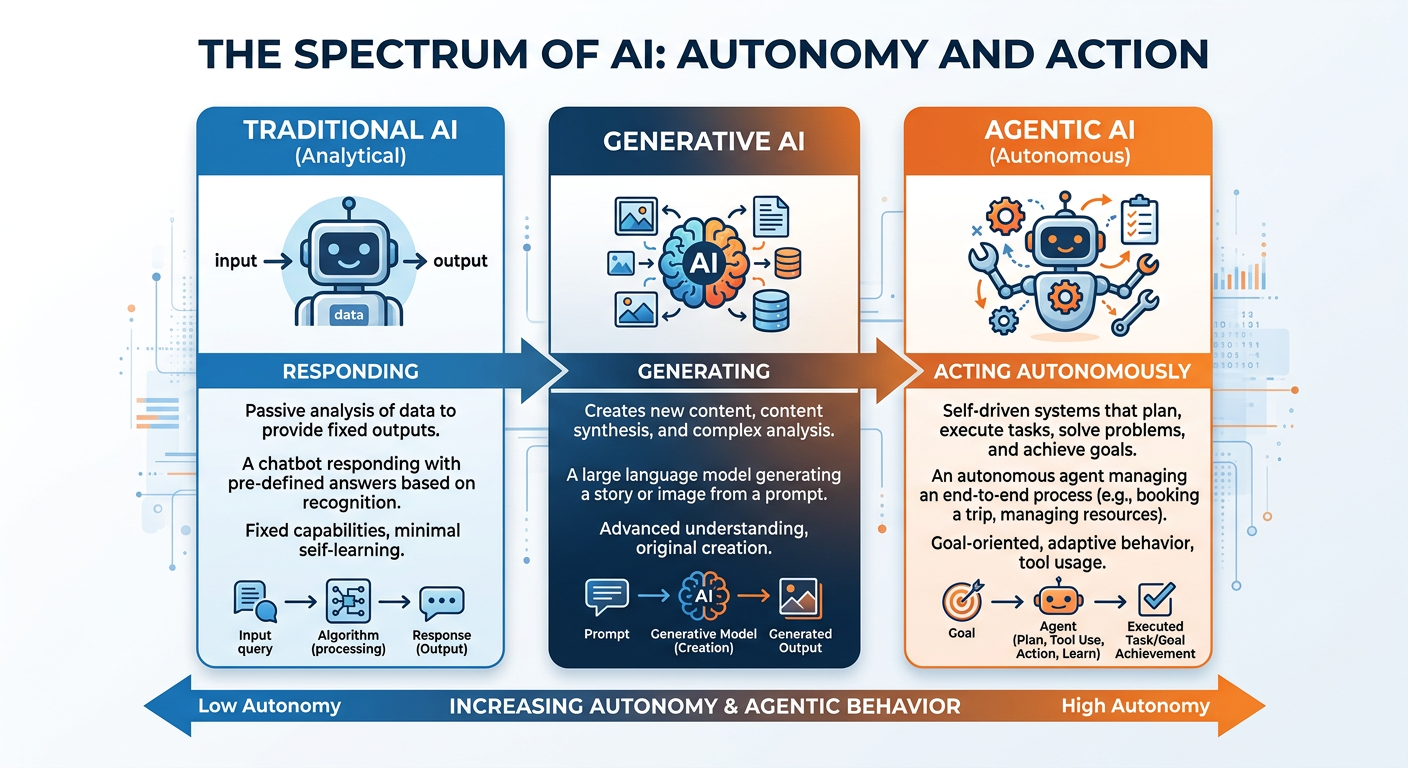

For most of the history of computing, AI was a tool that waited. You asked it a question; it answered. You gave it data; it processed. The relationship was transactional and always initiated by a human. The rise of large language models (LLMs) and sophisticated machine learning architectures in the early 2020s began to change this dynamic, and by the mid-2020s, a new paradigm had fully emerged: agentic AI, where AI systems act, plan, remember, reason, and collaborate — not just respond.

In this chapter, we will explore what agentic AI means from a technical and business perspective, how autonomous agents are deployed within organizational contexts, how multi-agent systems coordinate to accomplish complex analytical tasks, and — critically — what all of this means for graduate-level analytics professionals entering or advancing within this new environment. We will also get our hands dirty with a real-world voice AI deployment using Vapi.ai, one of the leading platforms for building and deploying voice-enabled AI agents in production business settings.

By the end of this chapter, you should be able to articulate what distinguishes an agentic AI system from a conventional AI tool, design the conceptual architecture of a multi-agent analytics workflow, evaluate the business implications and ethical considerations of deploying autonomous agents, and deploy a functional voice AI agent using Vapi.ai.

26.1 What Is Agentic AI? Defining the Paradigm Shift¶

The term “agent” has a long intellectual history in computer science, cognitive science, and philosophy. In the context of AI, an agent is any system that perceives its environment, makes decisions, and takes actions in pursuit of goals. This definition, rooted in the foundational work of Russell and Norvig’s Artificial Intelligence: A Modern Approach, has remained relatively stable for decades.

What has changed dramatically is the capability of agents. Traditional AI agents — like the rule-based chatbots of the 2000s or the recommendation engines of the 2010s — were narrow, brittle, and heavily scripted. They could execute predefined decision trees with remarkable speed, but they could not generalize, adapt to novel situations, or coordinate with other systems in flexible ways.

Modern agentic AI systems, built on the foundation of large language models and connected to real-world tools through APIs, databases, and code interpreters, exhibit a qualitatively different set of capabilities. They can:

Plan multi-step sequences of actions toward a goal

Use tools including web search, code execution, file management, and API calls

Remember context across extended interactions and sessions

Reason through ambiguous situations by generating and evaluating hypotheses

Communicate with other agents, humans, and external systems in natural language

Self-correct when an approach fails, adapting strategy without human intervention

The business significance of this shift cannot be overstated. When AI moves from being a passive analytical tool to an active participant in business processes, the entire practice of analytics transforms. Analysts are no longer merely data scientists who build models; they become agent architects who design, supervise, and evaluate autonomous systems that conduct analysis on their behalf.

Figure 2:The fundamental distinction between traditional AI tools and agentic AI systems: from reactive processing to autonomous, goal-directed action loops.

2.16.1.1 The Agent Loop: Perceive, Plan, Act, Reflect¶

At the architectural heart of every agentic AI system is what practitioners call the agent loop — a cyclical process through which the agent continuously updates its understanding of the environment, decides what to do next, executes an action, and evaluates the result.

In a business analytics context, this loop might look like the following: An agent is assigned the goal of identifying anomalies in regional sales data for the prior quarter. The agent perceives the available data sources by querying a database catalog. It plans a sequence of steps: retrieve data, clean and normalize, apply anomaly detection algorithms, cross-reference with external market conditions, and draft a findings memo. It acts by executing each step, using tools like SQL query runners, Python code interpreters, and web search APIs. It reflects after each step, checking whether the output is sensible and revising its plan if something unexpected occurs — say, if the anomaly detection returns zero results, triggering the agent to question its data quality assumptions and re-run with corrected parameters.

This loop runs continuously and recursively until the agent determines it has achieved its goal or until it escalates to a human supervisor when it encounters a situation it cannot resolve autonomously. The sophistication of this loop — how well the agent plans, how robustly it handles failure, how accurately it knows when to ask for help — is the primary differentiator between agent systems of different quality levels.

36.2 The Architecture of Autonomous Agents in Business Settings¶

To deploy agentic AI effectively in a business analytics context, practitioners must understand the key architectural components that make autonomous behavior possible. These components are not abstract theoretical constructs; they correspond to real technical decisions made during the design and deployment of agent systems.

3.16.2.1 The Core Language Model: The Agent’s Brain¶

At the center of most modern agentic AI systems is a large language model — GPT-4o/o3 (OpenAI), Claude Sonnet 4.5/Opus 4.5 (Anthropic), Gemini 2.0 Flash/Pro (Google), Llama 3.3 (Meta), or similar frontier models. The LLM serves as the agent’s reasoning engine: it interprets goals, constructs plans, evaluates options, and generates actions expressed as text or structured outputs. The quality of the LLM directly determines the quality of the agent’s reasoning, particularly in complex or ambiguous situations.

For business analytics applications, model selection involves trade-offs among reasoning capability, speed, cost per token, context window length, and domain-specific performance. A financial fraud detection agent that must process thousands of transaction records in a single context window has very different model requirements than a customer sentiment analysis agent that works with short social media posts.

3.26.2.2 Tools and Integrations: The Agent’s Hands¶

An LLM alone is a text-in, text-out system with no ability to affect the world. Tools are what give agents the capacity to act. In modern agent frameworks, tools are typically implemented as callable functions — Python functions, API endpoints, or database queries — that the LLM can invoke by generating a structured request (usually in JSON format), with the results fed back into the model’s context for subsequent reasoning.

3.36.2.3 Memory Systems: The Agent’s History¶

One of the most important and often underappreciated components of agentic AI is memory. Human analysts accumulate knowledge over time — they remember past analyses, know which data sources have been unreliable, understand which stakeholders prefer which types of visualizations, and build intuition about domain patterns. For AI agents to be genuinely useful in business settings, they need analogous memory capabilities.

Agent memory is typically structured across four tiers:

In-Context Memory refers to the current conversation or task session stored in the model’s active context window. This is the most immediate form of memory but is limited in length and does not persist across sessions.

External Memory (Retrieval-Augmented Generation) involves a vector database or document store that the agent can query to retrieve relevant past analyses, company reports, domain knowledge, or procedural documentation. This effectively gives the agent access to an organizational knowledge base.

Episodic Memory logs the sequence of actions taken during past agent runs, allowing the system to learn from previous successes and failures in completing similar tasks.

Semantic Memory stores general knowledge about the organization, its data schemas, business rules, stakeholder preferences, and domain-specific terminology.

Figure 3:The four-tier memory architecture of production-grade AI agents, enabling persistent knowledge across sessions and tasks.

3.46.2.4 Planning and Orchestration: The Agent’s Mind¶

Planning is what transforms an agent from a reactive responder into a genuine autonomous actor. Modern agent planning strategies range from simple chain-of-thought prompting — where the agent writes out its reasoning step by step before acting — to sophisticated multi-step planning frameworks like ReAct (Reasoning + Acting), Tree of Thoughts, and Plan-and-Execute architectures.

In business analytics, effective planning means decomposing high-level analytical goals (“Tell me why our customer churn rate increased last quarter”) into specific, executable subtasks, sequencing those subtasks logically, identifying dependencies and contingencies, and adapting the plan as new information emerges during execution. The quality of an agent’s planning capability is often the factor that most distinguishes a useful analytics agent from a frustrating one.

46.3 Multi-Agent Systems: The Organizational Intelligence Layer¶

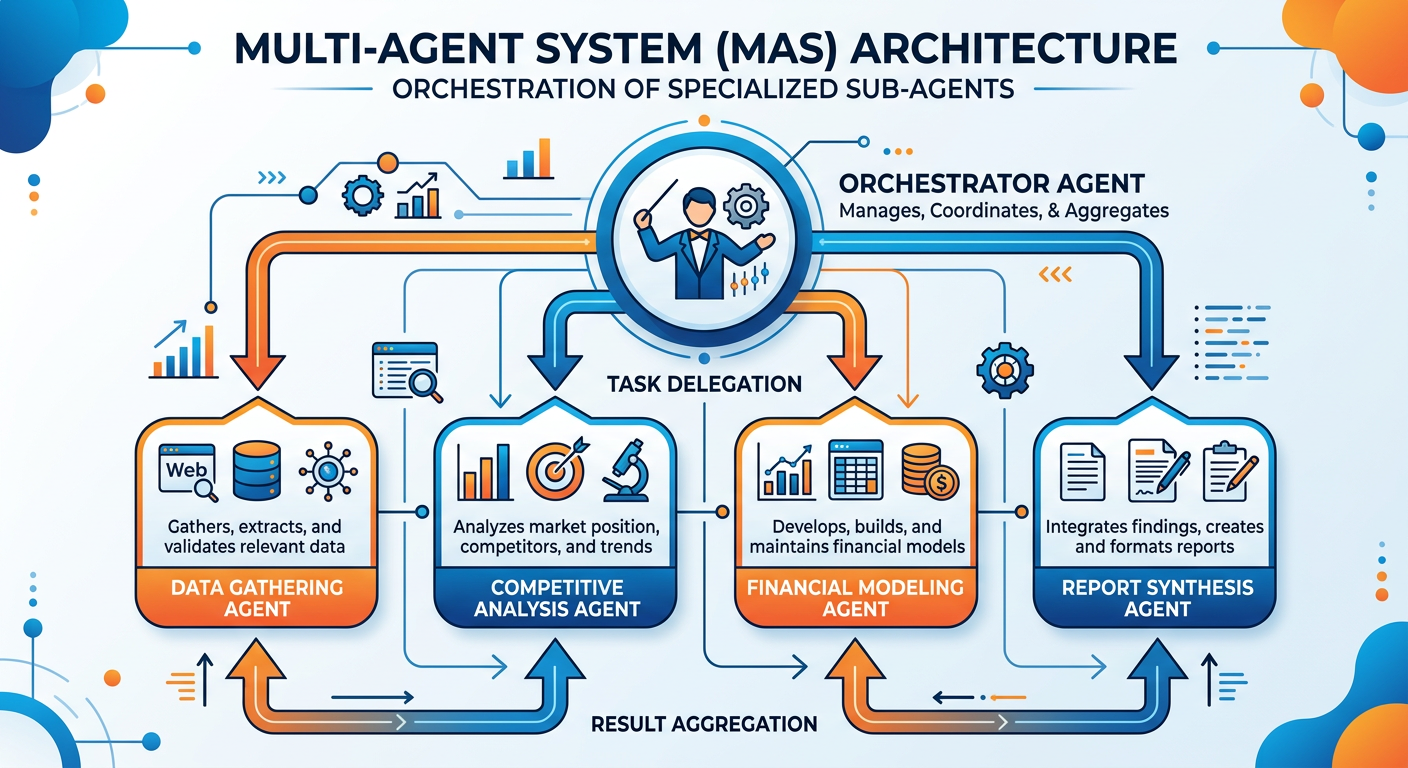

While a single autonomous agent can accomplish remarkable analytical tasks, the true transformative potential of agentic AI in business emerges when multiple agents work together in coordinated systems. Multi-agent systems (MAS) — networks of specialized agents that collaborate, delegate, and negotiate to accomplish complex organizational objectives — represent what we might call the organizational intelligence layer of the AI-era enterprise.

4.16.3.1 Patterns of Multi-Agent Coordination¶

Multi-agent systems can be organized according to several coordination patterns, each suited to different types of business analytics workflows.

The Orchestrator-Worker Pattern is the most common architecture in current enterprise deployments. A central orchestrator agent receives high-level goals from human principals, decomposes them into subtasks, assigns subtasks to specialized worker agents, monitors progress, consolidates results, and returns a unified output. In an analytics context, the orchestrator might be a general-purpose reasoning agent, while workers specialize in data retrieval, statistical modeling, natural language generation, visualization design, and quality assurance.

The Peer-to-Peer Collaborative Pattern involves agents of roughly equal standing that negotiate and coordinate directly with each other, without a central authority. This architecture is well-suited for tasks that require synthesizing multiple independent perspectives — for example, a system where separate agents analyze the same business problem from financial, operational, and customer experience viewpoints, then debate their conclusions to arrive at a more robust recommendation.

The Competitive-Ensemble Pattern runs multiple independent agents on the same analytical task and uses a meta-evaluator to compare results, identifying where agents agree and disagree and flagging disagreements for human review. This pattern dramatically improves reliability and helps catch agent errors that would go undetected in a single-agent system.

The Pipeline Pattern arranges agents in a sequential chain where each agent’s output becomes the next agent’s input, forming an analytical assembly line. This is well-suited for structured, repeatable analytical workflows — for example, a data quality agent feeds a feature engineering agent, which feeds a modeling agent, which feeds a report generation agent.

Figure 4:Four foundational coordination patterns in multi-agent business analytics systems, each optimized for different workflow types.

4.26.3.2 Real-World Multi-Agent Applications in Business Analytics¶

The transition from theory to practice is where business analytics professionals spend most of their professional energy, so it is worth examining several real-world domains where multi-agent systems are already delivering measurable value.

Financial Services: Autonomous Risk Assessment Several major financial institutions have deployed multi-agent systems for credit risk assessment that operate 24/7, continuously monitoring loan portfolios against updated macroeconomic indicators, borrower financial disclosures, and market signals. In these architectures, specialized agents handle specific dimensions of risk analysis — credit history interpretation, collateral valuation, cash flow modeling, regulatory compliance checking — and an orchestrator synthesizes their assessments into a unified risk score with natural language explanations.

Retail: Demand Forecasting and Inventory Optimization Large retailers have built multi-agent systems that combine demand forecasting agents (processing sales history, seasonality patterns, promotional calendars), supply chain agents (tracking supplier lead times, logistics disruptions, warehouse capacity), and pricing optimization agents into unified inventory management systems. These systems can autonomously adjust purchase orders, reorder points, and pricing recommendations in response to real-time conditions — a task that previously required multiple analyst teams working in coordination.

Healthcare Analytics: Clinical Decision Support Healthcare organizations are experimenting with multi-agent architectures that combine clinical data analysis agents, medical literature retrieval agents, regulatory compliance agents, and patient communication agents to support clinical decision-making at scale. These systems must operate under strict governance frameworks given the stakes of errors, making them particularly interesting case studies in agentic AI oversight.

Marketing: Campaign Intelligence Systems Marketing analytics teams at consumer brands are deploying agent systems that continuously monitor campaign performance across channels, identify underperforming segments, generate hypothesis-driven explanations for performance variances, design A/B test proposals, and produce weekly narrative performance reports — collapsing what was formerly a week-long manual analytical cycle into hours.

56.4 Agentic AI Frameworks and Platforms: The Practitioner’s Landscape¶

The practical deployment of agentic AI systems requires choosing among a rapidly evolving ecosystem of frameworks, platforms, and tools. Graduate-level analytics professionals need not be expert developers in all of these, but they must be sufficiently fluent to evaluate options, communicate with technical teams, and make informed architectural decisions.

5.16.4.1 Developer Frameworks¶

Several open-source and commercial frameworks have emerged as dominant tools for building agentic AI systems.

LangChain and LangGraph are Python-based frameworks that provide abstraction layers for connecting LLMs to tools, memory systems, and other agents. LangGraph, the more recent evolution, provides explicit support for building stateful, graph-based agent workflows where nodes represent agent actions and edges represent control flow.

AutoGen (Microsoft) is a framework designed specifically for multi-agent conversation, allowing developers to create networks of agents that communicate through structured dialogue, with support for human-in-the-loop interruptions, code execution, and tool use.

CrewAI provides a high-level abstraction for building multi-agent teams with defined roles, goals, backstories, and collaborative workflows. It is particularly popular in business analytics contexts because its role-based abstractions map naturally onto organizational structures.

OpenAI Assistants API provides a managed platform for building agents with built-in tool support, persistent thread management, and vector store integration, reducing the infrastructure burden of deploying production agents.

5.26.4.2 Voice AI Platforms: The Conversational Frontier¶

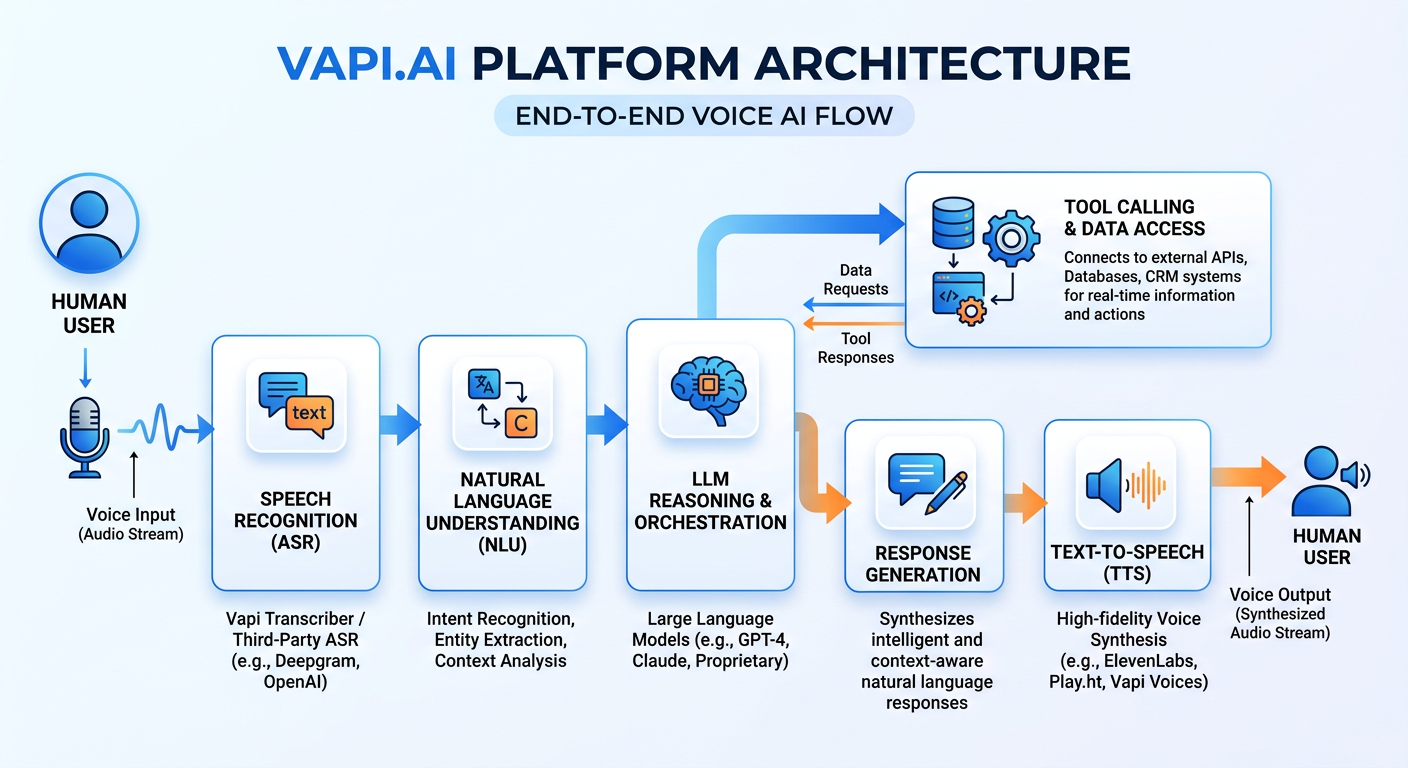

One of the most practically significant and rapidly growing categories of agentic AI deployment in business is voice AI — AI agents that communicate through spoken language in real-time. Voice agents are transforming customer service, sales operations, appointment scheduling, field service coordination, and internal analytics querying.

Vapi.ai has emerged as one of the leading platforms for building and deploying production-grade voice AI agents. Unlike simple text chatbots, Vapi-powered voice agents engage in real-time spoken conversation with latency profiles suitable for natural dialogue, connect to backend systems through function calls, support complex multi-turn conversation flows, and can be integrated with phone systems (via telephony providers), web applications, and mobile apps.

The relevance of voice AI to business analytics is multidimensional. First, voice agents enable non-technical users — executives, field staff, customer service representatives — to query analytical systems using natural language. Second, voice AI deployments generate rich conversational data that is itself analytically valuable. Third, understanding the architecture and capabilities of voice AI systems is essential for analytics professionals who design the backend data systems that power these agents.

Figure 5:Architecture of a production voice AI agent deployment, showing the real-time flow from speech input through reasoning to spoken response.

66.5 Transforming Business Analytics Practice: Roles, Workflows, and Value Creation¶

The emergence of agentic AI is not merely a technological development — it is a fundamental restructuring of what business analytics work looks like, who does it, how value is created, and what skills are most valuable.

6.16.5.1 The Evolving Role of the Business Analytics Professional¶

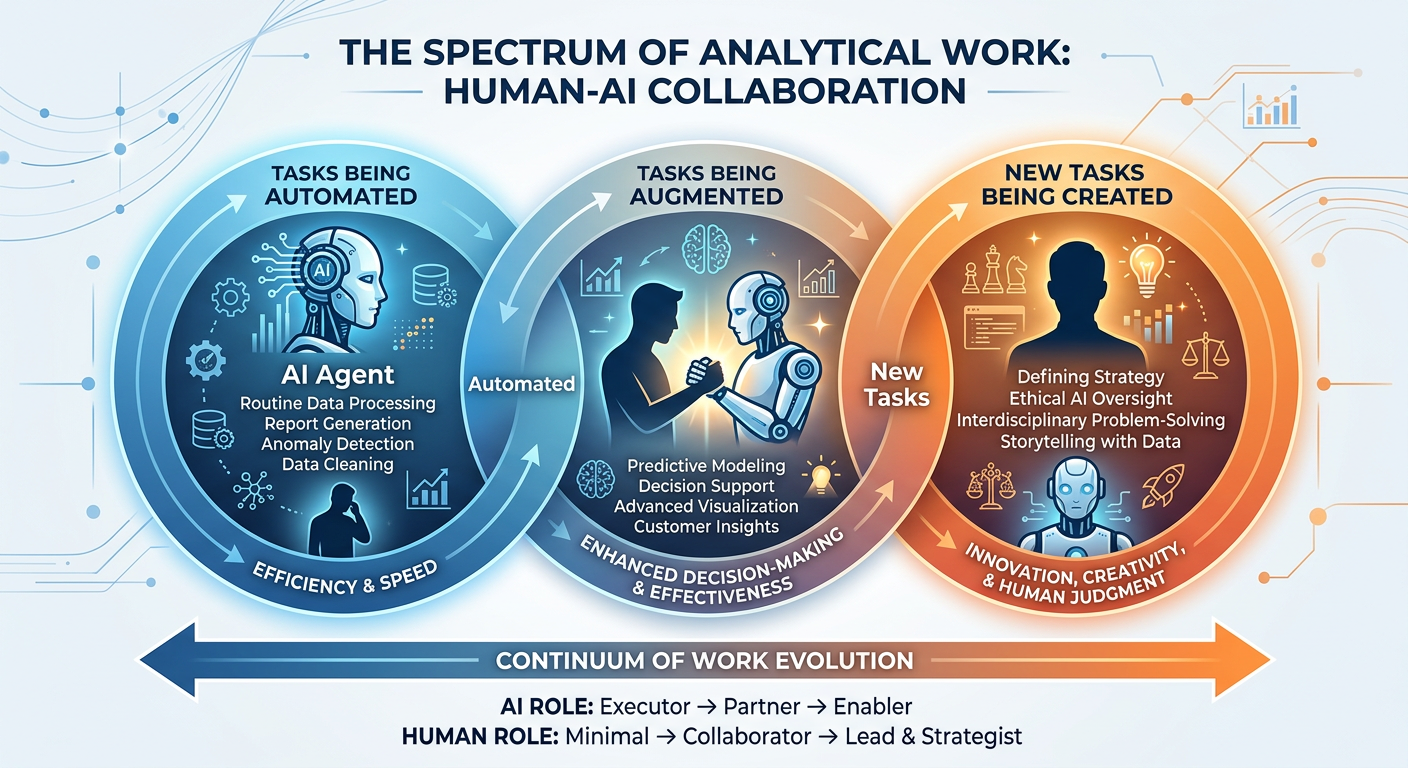

It is tempting to frame agentic AI as a threat to analytics professionals — if agents can conduct analyses autonomously, what is left for human analysts to do? This framing is both understandable and ultimately misleading. The historical pattern of analytical automation consistently shows that automating analytical processes expands the demand for analytics expertise rather than contracting it, by making analysis faster, cheaper, and more pervasive throughout organizations.

The more accurate framing is that agentic AI transforms the analytics professional’s role in several critical dimensions.

From data manipulator to agent architect: The most time-consuming portion of traditional analytics work — data retrieval, cleaning, transformation, and basic visualization — is increasingly automated by agents. Analytics professionals increasingly focus on designing the workflows, specifying the goals, selecting the tools, and evaluating the outputs of agent systems rather than executing these tasks manually.

From individual analyst to system designer: Where a traditional analyst might build a single model or dashboard, an analytics professional in the agentic era designs multi-agent systems that continuously perform analytical functions, effectively creating analytical infrastructure rather than one-off analyses.

From backward-looking reporter to forward-looking strategist: When routine analytical reporting is automated, human analysts are freed to focus on interpreting results, identifying strategic implications, challenging assumptions, and designing new analytical questions — the higher-order cognitive work that agents cannot yet reliably perform.

From technical expert to translation specialist: Perhaps the most enduring value of human analytics professionals in the agentic era is their ability to translate between business questions and analytical specifications — understanding what a business stakeholder actually needs (which is often not what they literally ask for) and designing agent systems that deliver genuine insight rather than technically correct but practically useless outputs.

Figure 6:The evolving role of business analytics professionals from manual data processors to strategic agent architects in the agentic AI era.

6.26.5.2 Agentic Analytics Workflows: A Process Perspective¶

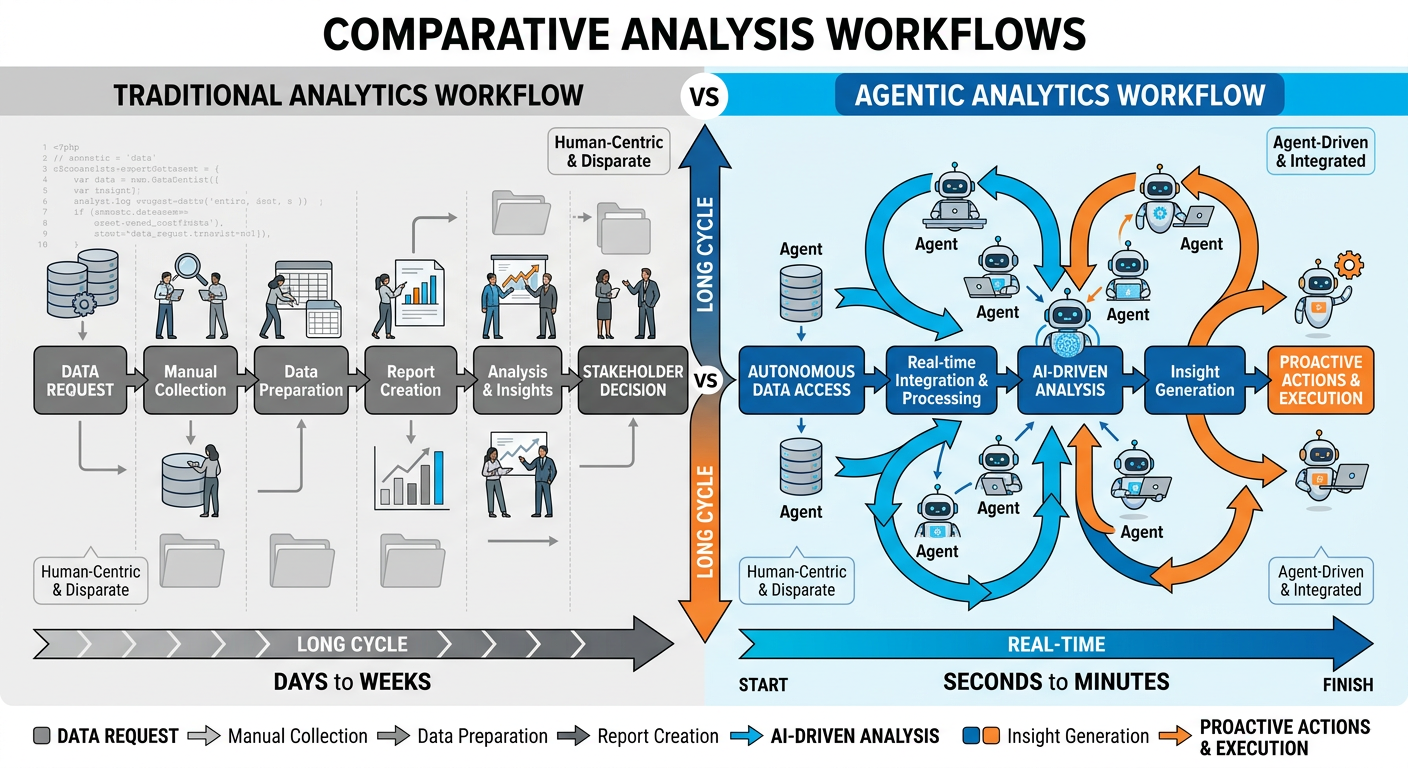

To understand concretely how agentic AI transforms analytics workflows, consider a comparative analysis of a common business analytics task — competitive intelligence reporting — before and after agentic AI deployment.

Traditional Workflow (Pre-Agentic):

**A ** team of two analysts spends three days each week gathering competitor pricing data from websites, financial disclosures from SEC databases, social media sentiment from manual review, customer review data from ratings platforms, and news mentions from Google Alerts. They compile this data into a spreadsheet, manually compute comparative metrics, and draft a narrative summary. The process takes roughly 48 person-hours per cycle and produces a report approximately 72 hours after the observation period.

Agentic Workflow (Post-Deployment):

**A ** multi-agent competitive intelligence system runs continuously. A web monitoring agent tracks competitor websites for pricing and product changes in real time. A financial data agent queries SEC EDGAR and financial data APIs on a scheduled basis. A social listening agent processes sentiment signals from multiple platforms through API integrations. A synthesis agent aggregates structured outputs from all monitoring agents, computes trend metrics, identifies significant changes, and drafts narrative sections. An orchestrator agent compiles the final report, flags items requiring human review, and distributes it to stakeholders. The process runs 24/7, produces daily updates rather than weekly reports, and requires approximately 2 person-hours per week for human oversight and quality review.

The value created is not simply efficiency — it is a qualitative improvement in the analytical product itself: higher frequency, lower latency, broader data coverage, and more consistent quality.

6.36.5.3 Measuring the Value of Agentic Analytics Deployments¶

Business case construction for agentic AI analytics investments requires a measurement framework that captures both quantitative efficiency gains and qualitative capability improvements.

Quantitative metrics typically include: analyst hours saved per analytical cycle, reduction in time-to-insight from data collection to stakeholder delivery, increase in the volume and frequency of analytical outputs, reduction in data errors detected in analytical reports, and cost per analytical insight produced.

Qualitative metrics include: stakeholder satisfaction with analytical product quality, analyst job satisfaction and higher-value work ratio, organizational decision velocity (how quickly business units make data-informed decisions), and breadth of analytical coverage across business domains.

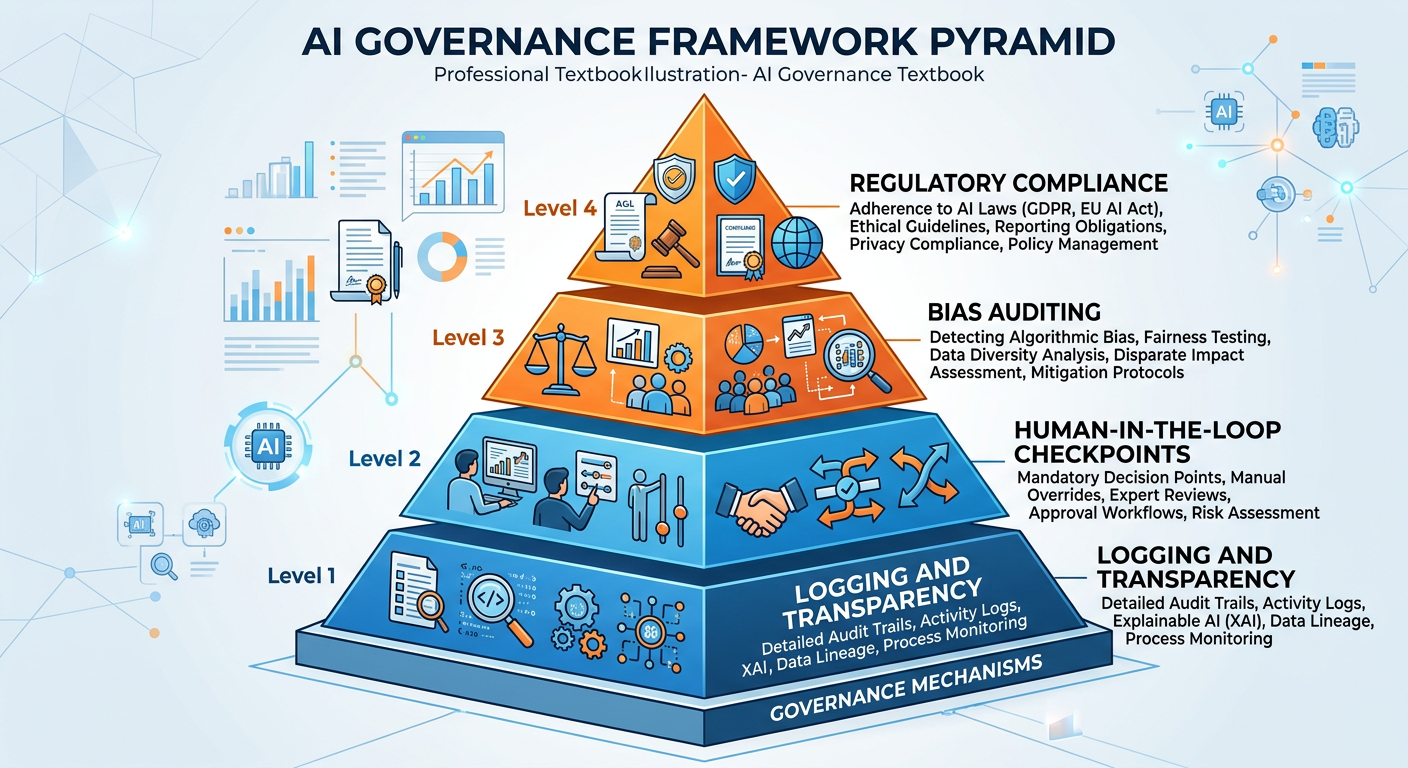

76.6 Governance, Ethics, and Risk in Agentic AI Analytics¶

The autonomy that makes agentic AI systems powerful also creates novel governance, ethical, and risk challenges that analytics leaders must address proactively. Unlike traditional analytics tools that passively process data and present results for human decision-making, autonomous agents act in the world — they query databases, call APIs, generate documents, and in some architectures, execute transactions. The stakes of agent errors are correspondingly higher.

7.16.6.1 The Accountability Gap¶

When a human analyst makes an error in a financial forecast, accountability is clear. When an autonomous agent system produces a flawed forecast that influences a significant business decision, accountability becomes distributed across the organization: the analyst who specified the agent’s objective, the engineer who built the agent, the manager who approved its deployment, the platform vendor who provides the underlying model, and the organization that chose to rely on the agent’s output without sufficient human review. This accountability gap is one of the central governance challenges of agentic AI deployment.

Best-practice organizations address the accountability gap by maintaining explicit human-in-the-loop checkpoints for high-stakes analytical outputs, requiring agent audit trails that document every tool call, data source accessed, and reasoning step taken, and establishing clear internal governance policies that assign named human accountability for agent system outputs.

7.26.6.2 Data Privacy and Security in Agentic Systems¶

Agentic AI systems that autonomously access databases, APIs, and external services create expanded attack surfaces for data security incidents. An agent with broad data access permissions operating autonomously is a high-value target for adversarial manipulation through prompt injection — attacks in which malicious content embedded in data sources attempts to hijack the agent’s behavior by overriding its original instructions.

7.36.6.3 Bias Amplification at Scale¶

When human analysts produce biased analyses, the scope of impact is limited by human throughput. When agentic systems produce biased analyses, they can do so at massive scale, with high consistency, before the bias is detected. Analytics professionals must be particularly vigilant about bias in the data, objectives, and evaluation criteria fed to autonomous agents, since these systems will optimize effectively for whatever they are given — including flawed proxies for the actual business objective.

Figure 7:**A ** layered governance framework for responsible deployment of agentic AI in business analytics environments.

7.46.6.4 The Explainability Imperative¶

Regulatory frameworks in multiple jurisdictions are increasingly requiring that automated decision systems be explainable — that is, that affected parties can receive a meaningful account of why a particular decision was made. For agentic AI analytics systems that influence decisions affecting employees, customers, or financial counterparties, explainability is not merely a technical nice-to-have; it is a legal and ethical requirement.

Agent audit trails — detailed logs of every reasoning step, tool call, data retrieval, and decision branch taken during an agent’s execution — are the foundation of agentic AI explainability. Building robust logging into agent architectures from the outset is significantly easier than retrofitting it after deployment.

86.7 The Future Trajectory: Where Agentic AI Is Taking Business Analytics¶

Predicting the future trajectory of a technology that is evolving as rapidly as agentic AI requires both analytical rigor and intellectual humility. Nevertheless, several directional trends are sufficiently well-established to warrant serious attention from business analytics professionals preparing for the next phase of their careers.

8.16.7.1 Proactive Analytics: From Answering Questions to Asking Them¶

Perhaps the most consequential near-term development is the shift from reactive to proactive analytics. Traditional analytics systems answer questions that humans ask. Emerging agentic systems are beginning to identify the questions worth asking — autonomously scanning data environments for anomalies, patterns, and opportunities that human analysts might not think to investigate.

This capability — sometimes called analytics-driven discovery or proactive intelligence — represents a fundamental expansion of what analytics contributes to organizations. Rather than being a function that responds to business questions, analytics becomes a function that generates strategic questions for business leaders to consider.

8.26.7.2 Continuous Learning Agents¶

Current production agent systems are largely static: they use fixed models and fixed tool sets, updated through deliberate redeployment cycles. The emerging frontier is continuously learning agents that update their internal knowledge representations and behavioral strategies based on experience, feedback, and new data — analogous to how skilled human analysts grow more effective over time.

For business analytics, continuously learning agents promise systems that become more accurate in their domain-specific analyses over time, better calibrated to the idiosyncrasies of a particular organization’s data environment, and more effective at anticipating the preferences and needs of specific stakeholder groups.

8.36.7.3 Agent-to-Agent Economies¶

**A ** more speculative but intellectually fascinating development is the emergence of agent-to-agent economies — environments in which AI agents transact with each other directly, exchanging analytical services, data, and computational resources in automated markets. Early examples of this pattern are already visible in programmatic advertising markets, algorithmic trading systems, and automated API marketplaces. As agents become more capable and more numerous, these inter-agent transactions are likely to become a significant feature of the digital business landscape.

Figure 8:Projected trajectory of agentic AI capabilities in business analytics from 2024 to 2030, highlighting key developmental milestones.

96.8 Case Study: JPMorgan Chase and the COIN Program¶

One of the most widely cited and analytically instructive real-world deployments of autonomous AI in business analytics is JPMorgan Chase’s Contract Intelligence (COIN) system, introduced publicly in 2017 and significantly expanded in subsequent years. While COIN predates many of the multi-agent architectures described in this chapter, its trajectory and business impact provide an invaluable case study in the organizational dynamics of deploying autonomous AI for analytical work.

COIN was developed to automate the interpretation of commercial loan agreements — a task that previously required approximately 360,000 lawyer-hours per year and remained prone to human error despite the substantial labor investment. The system used a combination of natural language processing and machine learning to extract structured data from unstructured legal documents, classify clauses, identify anomalies, and flag items requiring human legal review.

The initial deployment reduced the 360,000 annual lawyer-hours to seconds of computation time per document, with error rates substantially below the human baseline for document interpretation tasks. But the more interesting and less commonly discussed dimension of the COIN case is what happened to the legal and analytics professionals whose document review work was automated.

Rather than being displaced, many of these professionals were redeployed to higher-value work: complex contract negotiation that required relational and strategic judgment, development and supervision of additional AI automation projects, quality review and governance of the COIN system’s outputs, and exploration of entirely new analytical applications that the time savings made feasible. JPMorgan’s reported experience aligns with the broader pattern: automation of routine analytical work tends to expand rather than contract the total demand for analytics expertise, while shifting the nature of that expertise upward in cognitive complexity. By 2025, JPMorgan Chase had scaled its AI deployment to over 400 use cases enterprise-wide, investing more than $2 billion annually in AI and data infrastructure, with new agentic systems powering equity research summarization, earnings call analysis, and real-time credit monitoring — all capabilities that emerged from the organizational capability built around COIN’s initial success.

9.1Discussion Question¶

Case-Based Discussion: The Analytics Professional’s Evolving Mandate

JPMorgan Chase’s COIN system illustrates both the transformative potential and the organizational complexity of deploying autonomous AI for analytical work in a highly regulated industry. Consider the following scenario:

You are the Chief Analytics Officer at a mid-sized regional bank with $15 billion in assets. Your analytics team of 28 professionals currently spends approximately 60% of their collective time on routine data extraction, cleaning, reporting, and document review tasks — work that is increasingly automatable through agentic AI systems. Your CTO has proposed a phased deployment of a multi-agent analytics system that would automate these routine tasks over 18 months.

Drawing on the JPMorgan COIN case and the frameworks presented in this chapter, address the following questions in a structured discussion:

What governance framework would you put in place before beginning theagentic AI deployment, and which specific risks would you prioritize in a regulated banking environment?

How would you communicate the transition to your analytics team to minimize talent attrition while maximizing their engagement in the transformation process? What new roles and skill development pathways would you create?

The multi-agent system will eventually produce analytical outputs that influence credit decisions affecting small business borrowers. What human-in-the-loop mechanisms would you require, and how would you structure accountability when the system produces an output that leads to a demonstrably poor business decision?

Three years after full deployment, how would you measure whether the agentic AI investment delivered genuine organizational value — not just cost reduction, but qualitative improvement in the analytics function’s strategic contribution?

JPMorgan operated at massive scale with substantial AI development resources. What modifications to their approach would you make given your institution’s smaller scale and more limited technical capacity?

106.9 Hands-On Activity: Building a Voice AI Analytics Assistant with Vapi.ai¶

10.1Overview¶

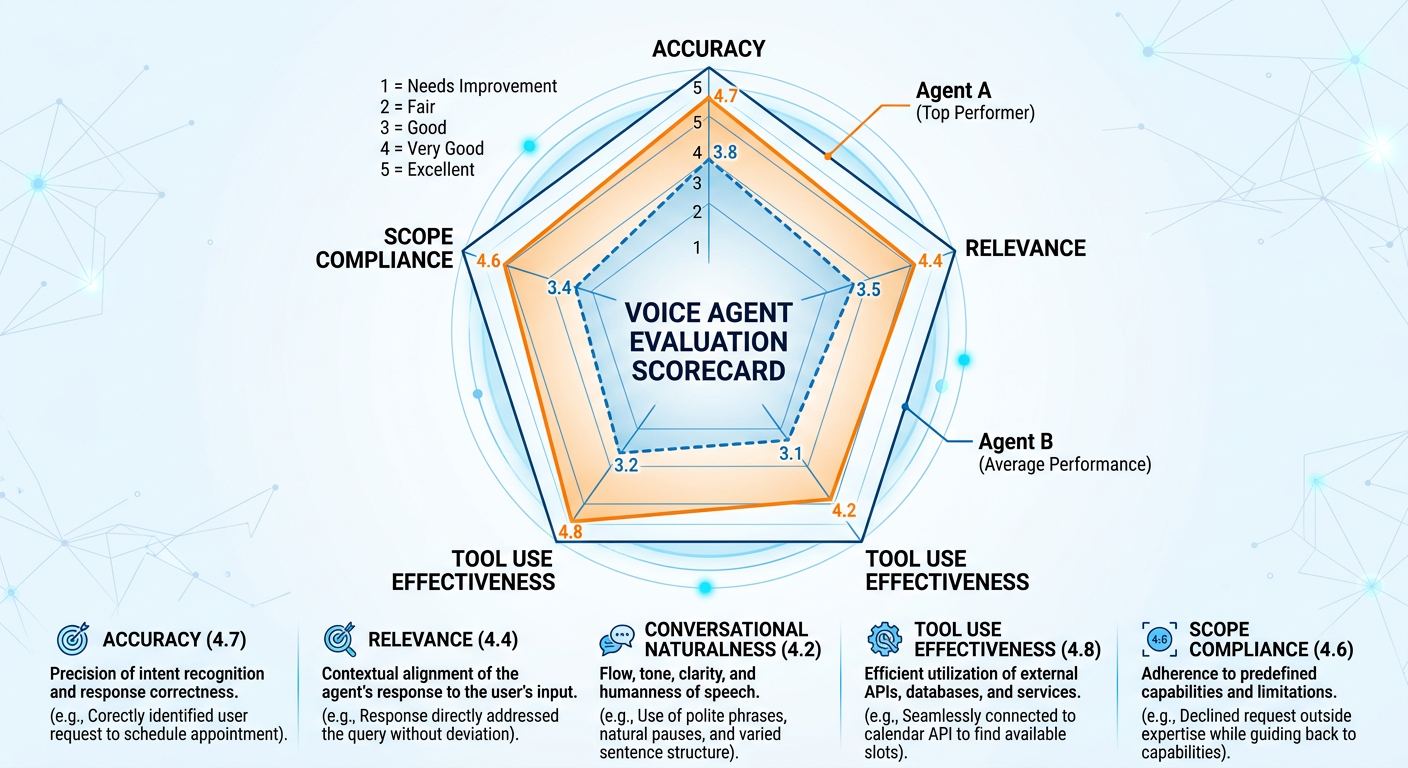

In this hands-on activity, you will design, configure, and test a voice-enabled AI analytics assistant using Vapi.ai — one of the leading platforms for deploying production-grade voice AI agents. By the end of this activity, you will have a functioning voice agent capable of answering business analytics queries using natural spoken conversation, calling backend functions to simulate data retrieval, and maintaining coherent multi-turn dialogue with a business user.

This activity bridges the conceptual frameworks of this chapter with practical deployment experience, giving you direct insight into the architecture, capabilities, and limitations of voice-based agentic AI systems.

Figure 9:Step-by-step workflow for the Vapi.ai voice AI analytics assistant hands-on activity.

10.2Learning Objectives¶

Upon completing this activity, students will be able to:

Create and configure a voice AI agent on the Vapi.ai platform

Write effective system prompts that define an agent’s persona, scope, and behavioral boundaries

Configure tool/function calling to simulate backend data retrieval

Evaluate voice agent performance across dimensions of accuracy, naturalness, latency, and task completion

Identify design improvements based on test interaction results

10.3Prerequisites¶

A computer with internet access and a working microphone

A free Vapi.ai account (signup at vapi.ai — no credit card required for trial usage)

Approximately 90 minutes of uninterrupted work time

Completion of Chapter 6 reading prior to beginning the activity

10.4Background: Why Voice AI Matters for Business Analytics¶

Voice interfaces represent a fundamentally different analytical modality than dashboards, reports, or text-based chatbots. When a regional sales manager can ask their analytics system “Why did our Southeast region underperform last month compared to the same period last year?” and receive a spoken, conversational response that synthesizes data from multiple sources, the barrier between analytical insight and business action drops dramatically.

Vapi.ai achieves this through a sophisticated real-time pipeline: spoken audio is captured and transcribed using a speech-to-text model (options include Deepgram, OpenAI Whisper, and others), the transcribed text is processed by a connected LLM that generates a response while optionally calling backend tools to retrieve data, and the response text is converted to natural-sounding speech using a text-to-speech engine (options include ElevenLabs, PlayHT, Azure Neural Voices, and Cartesia) and returned to the user — all within a latency window that enables natural conversational flow.

For analytics professionals, understanding this pipeline is important not just for building voice agents but for designing the backend data systems, APIs, and knowledge bases that make voice analytics genuinely useful.

10.5Part 1: Platform Setup and Orientation (15 minutes)¶

Step 1.1: Create Your Vapi Account

Navigate to vapi.ai and create a free account using your FAU email address. After verifying your email, you will land on the Vapi dashboard. Take a few minutes to orient yourself to the interface. The primary navigation includes:

Assistants: Where you create and configure voice AI agents

Phone Numbers: Where you can provision phone numbers for telephony deployments (not required for this activity)

Calls: A log of all calls and interactions made through your agents

Files: A document store where you can upload knowledge base documents for your agent to reference

Tools: Where you define custom functions your agent can call

Step 1.2: Review the Dashboard

Before building, spend five minutes exploring the dashboard to familiarize yourself with the layout. Note the Test button in the Assistant view — this allows you to conduct web-based voice conversations with your agent without requiring a phone number, which is how you will test your agent in this activity.

Step 1.3: Review Vapi Documentation

Navigate to the Vapi documentation (docs.vapi.ai) and briefly review the “Getting Started” and “Assistants” sections. Pay particular attention to the descriptions of the system prompt, voice selection, and tools configuration options, as these are the primary levers you will use in this activity.

10.6Part 2: Designing Your Analytics Assistant (20 minutes)¶

Before building anything in the platform, effective agent design requires deliberate upfront specification. This is a critical professional habit — the quality of an agent’s behavior is almost entirely determined by the quality of its design specification.

Step 2.1: Define Your Agent’s Role and Scope

For this activity, you will build a voice analytics assistant for a hypothetical mid-sized retail company called SunCoast Retail Group, which operates 47 stores across Florida. The agent’s role is to serve as an always-available analytical assistant for regional managers, enabling them to query key business metrics through natural voice conversation.

The agent should be capable of handling queries in the following analytical domains:

Sales performance: Monthly and quarterly sales by region, store, and product category

Inventory status: Current stock levels, low-stock alerts, and reorder recommendations

Customer metrics: Transaction counts, average order values, loyalty program statistics

Staffing analytics: Hours worked, overtime alerts, and schedule efficiency metrics

Step 2.2: Write the System Prompt

The system prompt is the foundational instruction set that defines your agent’s identity, capabilities, scope, and behavioral boundaries. In Vapi, the system prompt is entered in the Assistant configuration under the “Model” section.

For your SunCoast Retail Group analytics assistant, write a system prompt using the following structure and guidance. You may use the template below as a starting point and customize it:

You are ARIA (Analytics and Reporting Intelligence Assistant), a voice-enabled

business analytics assistant for SunCoast Retail Group, a 47-store Florida

retail chain. You help regional managers and store directors access key

business metrics through natural conversation.

YOUR ROLE:

- Answer questions about sales performance, inventory, customer metrics,

and staffing analytics

- Provide clear, concise spoken responses optimized for voice delivery

- Proactively suggest related metrics when answering a question

(e.g., when asked about sales, mention if inventory levels are relevant)

- Escalate to a human analyst for queries involving strategic decisions,

unusual anomalies, or data not available in your current tools

YOUR COMMUNICATION STYLE:

- Speak conversationally and clearly — you are talking, not writing

- Use plain language, avoid jargon unless the user initiates it

- Keep responses under 60 seconds of speaking time unless the user requests detail

- Confirm numeric figures clearly (e.g., "one million, two hundred thousand dollars")

- When uncertain about data, say so explicitly rather than guessing

YOUR BOUNDARIES:

- Do not access or discuss individual employee performance data

- Do not make autonomous recommendations to change pricing without

explicit management authorization context

- Do not store or repeat sensitive information like customer PII

- If a query is outside your analytical scope, say so clearly and

suggest who the user should contact

CURRENT DATE CONTEXT: Always assume the current month is the most recent

complete fiscal month unless the user specifies otherwise.Take time to refine this prompt based on your own judgment. Consider: What edge cases might come up in real conversations with retail managers? What behaviors do you want to explicitly encourage or discourage? What tone best suits the organizational context?

Step 2.3: Design Sample Queries

Before proceeding to build, write out ten sample queries that a real regional manager might ask ARIA. These will serve as your test script in Part 4. Examples to get you started:

“How did the Tampa region perform last month compared to the same month last year?”

“Which stores are currently showing low inventory on seasonal items?”

“What is our average transaction value for loyalty members versus non-members this quarter?”

“Are any of our stores running significant overtime this week?”

Design your ten queries to cover a range of analytical domains, complexity levels, and conversational styles (including at least two that are slightly ambiguous or conversational rather than precise).

10.7Part 3: Building the Agent in Vapi (25 minutes)¶

Step 3.1: Create a New Assistant

In the Vapi dashboard, navigate to Assistants and click Create Assistant. Select Blank Template to start from scratch. Give your assistant the name “ARIA — SunCoast Analytics.”

Step 3.2: Configure the Model

In the Model section of the assistant configuration:

Provider: Select OpenAI (or your preferred available provider)

Model: Select GPT-4o or GPT-4o-mini (GPT-4o-mini is recommended for lower latency, which is important in voice interactions)

System Prompt: Paste the system prompt you wrote in Step 2.2

Temperature: Set to 0.3 (lower temperature produces more consistent, factual-style responses appropriate for analytics)

Max Tokens: Set to 300 (constrains response length to voice-appropriate duration)

Step 3.3: Configure the Voice

In the Voice section:

Provider: Select ElevenLabs or Cartesia (both produce natural-sounding voices suitable for a professional analytics assistant)

Voice: Choose a professional-sounding voice. For ARIA, a clear, confident voice with moderate speaking pace is appropriate. Listen to previews and select one that you feel suits a business analytics context.

Speaking Rate: Set to 1.0 (default) — you can adjust after testing if responses feel rushed or too slow

Step 3.4: Configure the Transcriber

In the Transcriber section:

Provider: Select Deepgram (recommended for low-latency transcription)

Model: Nova-2 (Deepgram’s general-purpose model with good business vocabulary handling)

Language: English (US)

Step 3.5: Configure Tools (Function Calling)

This is where your agent gains the ability to simulate data retrieval — a critical demonstration of the agentic capability discussed throughout this chapter. In Vapi, tools are defined as JSON schemas that describe functions the agent can call when it needs data to answer a question.

Navigate to the Tools section in the Vapi dashboard and create the following tools. For each tool, you will define the function name, description, and parameters. In a production system, these would call real APIs or database endpoints; in this activity, we will configure them as mock tools that demonstrate the architecture.

Tool 1: get_sales_performance

{

"name": "get_sales_performance",

"description": "Retrieves sales performance data for a specified region,

store, or product category over a specified time period.

Use this when the user asks about sales figures, revenue,

performance comparisons, or growth metrics.",

"parameters": {

"type": "object",

"properties": {

"region": {

"type": "string",

"description": "The geographic region or store name.

Options: Tampa, Orlando, Miami, Fort Lauderdale,

Jacksonville, or All Regions."

},

"time_period": {

"type": "string",

"description": "The time period for the query.

Examples: last_month, last_quarter,

year_to_date, same_period_last_year."

},

"category": {

"type": "string",

"description": "Optional product category filter.

Examples: Electronics, Apparel, Home Goods, All."

}

},

"required": ["region", "time_period"]

}

}Tool 2: get_inventory_status

{

"name": "get_inventory_status",

"description": "Retrieves current inventory levels, low-stock alerts,

and reorder recommendations for specified stores or

product categories. Use when users ask about stock

levels, inventory concerns, or reorder needs.",

"parameters": {

"type": "object",

"properties": {

"store_id": {

"type": "string",

"description": "Specific store identifier or 'all_stores'

for chain-wide view."

},

"alert_level": {

"type": "string",

"description": "Filter by alert severity.

Options: critical, low, normal, all."

}

},

"required": ["store_id"]

}

}Tool 3: get_customer_metrics

{

"name": "get_customer_metrics",

"description": "Retrieves customer transaction analytics including

average order value, transaction counts, and

loyalty program statistics.",

"parameters": {

"type": "object",

"properties": {

"region": {

"type": "string",

"description": "Geographic region or 'all' for chain-wide metrics."

},

"segment": {

"type": "string",

"description": "Customer segment. Options: loyalty_members,

non_members, all_customers."

},

"time_period": {

"type": "string",

"description": "Time period for metrics.

Examples: last_month, last_quarter, year_to_date."

}

},

"required": ["region", "time_period"]

}

}After creating each tool in the Vapi dashboard, return to your ARIA assistant configuration and add all three tools to the assistant under the Tools section of the assistant editor.

Step 3.6: Configure Conversation Settings

In the Advanced settings of your assistant:

First Message: Enter the opening message ARIA will speak when a conversation begins: “Hello, this is ARIA, your SunCoast Analytics assistant. How can I help you with your business data today?”

End Call Phrases: Add phrases like “goodbye,” “that’s all I needed,” and “thank you, that’s everything” — when the user says these, the call will end gracefully

Max Duration: Set to 600 seconds (10 minutes) for testing purposes

Background Sound: Set to Office (subtle ambient sound that makes the voice interaction feel more natural)

10.8Part 4: Testing and Evaluation (20 minutes)¶

Step 4.1: Initial Functionality Test

Click the Test button in your assistant configuration. This opens a web-based voice interface connected to your ARIA agent. You will need to allow microphone access in your browser.

Begin with a simple greeting and your first prepared test query. Speak naturally — use the kind of casual conversational register a real regional manager would use, not perfectly structured query language.

Conduct at least five conversations using your prepared test queries, allowing each conversation to develop naturally over multiple turns rather than treating each query as a one-shot interaction. Notice how the agent handles follow-up questions, requests for clarification, and context from earlier in the conversation.

Step 4.2: Stress Testing — Edge Cases and Failures

After completing your prepared queries, deliberately probe the agent’s boundaries with queries designed to test its robustness:

Ask a question that is outside ARIA’s defined scope (e.g., “What should I do about my underperforming store manager?”)

Ask an ambiguous question that could be interpreted multiple ways

Ask for information that a real analytics agent would need more context to answer accurately

Try interrupting mid-response to change the topic

Ask a follow-up question that requires memory of earlier conversation context

Document how ARIA handles each of these stress scenarios. Note where the system prompt’s behavioral boundaries hold, where they fail, and where the agent’s responses are surprising or inappropriate.

Step 4.3: Latency and Voice Quality Assessment

Evaluate the technical performance of your ARIA deployment across the following dimensions using a structured rating sheet:

| Dimension | Rating (1-5) | Notes |

|---|---|---|

| Response Latency | Time from end of speaking to start of agent response | |

| Transcription Accuracy | How accurately your speech was transcribed | |

| Voice Naturalness | How natural the text-to-speech voice sounds | |

| Response Relevance | How well responses addressed the actual query | |

| Conversational Flow | How natural the multi-turn dialogue felt overall | |

| Tool Call Behavior | Did the agent appropriately invoke tools? |

Step 4.4: Iterative Improvement

Based on your testing observations, identify at least three specific improvements to make to your ARIA configuration. These might include system prompt refinements, voice adjustments, additional tool definitions, or conversation setting changes.

Implement your improvements and conduct a second round of testing with a fresh set of queries. Document the before-and-after performance differences.

10.9Part 5: Reflection and Analysis (10 minutes)¶

After completing your testing and iteration, write a structured reflection addressing the following questions. This reflection will form the basis of your lab report submission.

Reflection Questions:

Architecture Insight: Which components of the Vapi.ai pipeline (transcription, LLM reasoning, tool calling, voice synthesis) had the greatest impact on the quality of the user experience, based on your testing? Which component appeared to be the most significant source of errors or limitations?

System Prompt Design: How did your system prompt design choices influence ARIA’s behavior? Which aspects of your prompt worked better than expected? Which aspects produced unexpected or problematic behaviors that required revision?

Agentic Behavior Assessment: Based on your testing, how closely does ARIA’s behavior match the definition of agentic AI presented in this chapter? In what ways does it fall short of fully autonomous agentic behavior, and what additional capabilities would be needed to close those gaps?

Business Application Evaluation: If ARIA were deployed in a real retail organization, what would be the three most significant barriers to adoption by actual regional managers? How would you address each barrier?

Ethical Reflection: What ethical concerns arose during your testing that were not fully addressed by the boundary conditions in your system prompt? How would you address these concerns in a production deployment?

10.10Submission Requirements¶

Submit the following items through the FAU Canvas course portal:

Your completed ARIA system prompt (the final version after iteration)

Screenshots of your Vapi assistant configuration and tool definitions

Your completed performance rating sheet from Step 4.3

Your structured reflection responses from Part 5

A brief (one paragraph) description of the three improvements you implemented and their observed effects

116.10 Chapter Summary¶

This chapter has traversed the landscape of agentic AI from foundational definitions to practical deployment, with particular emphasis on the implications for business analytics practice. The emergence of autonomous agents and multi-agent systems represents the most significant structural change to business analytics since the introduction of data warehouses and self-service BI platforms — arguably more significant, because it shifts the primary locus of analytical activity from human practitioners to human-supervised AI systems.

We examined the architecture of autonomous agents — the agent loop of perception, planning, action, and reflection; the role of large language models as reasoning engines; the critical importance of memory systems; and the tool integrations that give agents the capacity to act in the world. We explored the four primary patterns of multi-agent system coordination and examined real-world deployments across financial services, retail, healthcare, and marketing analytics.

We surveyed the practitioner landscape of agent frameworks and platforms, with particular attention to voice AI and the Vapi.ai platform that you explored hands-on. We analyzed how agentic AI is transforming the role of the analytics professional — from data manipulator to agent architect, from individual analyst to system designer, from backward-looking reporter to forward-looking strategic partner.

We took governance seriously, examining the accountability gap, data security challenges including prompt injection, bias amplification risks, and the explainability imperative. And we considered the future trajectory through proactive analytics, continuously learning agents, and the emerging possibility of agent-to-agent economies.

Figure 10:Knowledge map of Chapter 6: The interconnected concepts of Agentic AI and their implications for business analytics practice.

The central message for analytics professionals entering this era is neither uncritical enthusiasm nor defensive resistance. Agentic AI is not a threat to be managed or a trend to be waited out — it is a structural transformation of the analytical function that demands thoughtful engagement, new skills, and evolved professional identity. The analytics professionals who will thrive in the coming decade are those who understand not just how to use AI tools, but how to design, evaluate, govern, and continuously improve the agentic systems that will increasingly do the analytical work of organizations.

Your hands-on experience with Vapi.ai in this chapter represents a microcosm of that broader challenge: you specified a goal, designed a system, tested it against reality, found its limitations, iterated to improve it, and reflected on what you learned. That cycle — design, deploy, evaluate, iterate — is the fundamental rhythm of analytics work in the agentic era.

12Key Terms¶

Agentic AI — AI systems characterized by autonomous goal-directed behavior, multi-step planning, tool use, persistent memory, and the ability to operate with minimal human supervision.

Agent Loop — The cyclical process of perceive, plan, act, and reflect through which autonomous agents continuously update their behavior in response to their environment.

Multi-Agent System (MAS) — An architecture comprising two or more autonomous AI agents that interact to achieve individual or collective goals.

Orchestrator Agent — In multi-agent systems, a central agent that receives high-level goals, decomposes them into subtasks, assigns tasks to specialized worker agents, and consolidates results.

Prompt Injection — A security attack in which malicious content embedded in data processed by an agent attempts to override the agent’s original instructions.

ReAct (Reasoning + Acting) — An agent planning framework that interleaves chain-of-thought reasoning with action execution, enabling agents to adapt plans dynamically based on action results.

Tool Calling — The mechanism by which a language model invokes external functions, APIs, or services to retrieve data or take actions in the world.

Vector Database — A specialized database that stores and retrieves information based on semantic similarity, enabling agents to access relevant knowledge through natural language queries.

Voice AI — AI agents that communicate through real-time spoken language, combining speech-to-text, language model reasoning, and text-to-speech technologies.

Vapi.ai — A production-grade platform for building and deploying voice AI agents, supporting real-time telephony and web-based voice interactions with LLM reasoning and tool calling.

12.1📝 Discussion Guidelines¶

Primary Response: Your initial post must address all parts of the prompt with depth and critical thinking. It must include at least one citation (scholarly or credible industry source) to support your argument.

Peer Responses: You must respond thoughtfully to at least two of your peers. Your responses must go beyond simple agreement (e.g., “I agree with your point”) and add substantial value to the conversation by offering an alternative perspective, sharing related research, or asking a challenging follow-up question.

13Chapter Quiz¶

The following ten questions assess comprehension of key concepts from Chapter 6. Questions vary in type and complexity. Submit your responses through the FAU Canvas portal as directed by your instructor.

1. Which of the following best distinguishes an agentic AI system from a conventional AI tool?

a) Agentic AI systems use larger language models than conventional AI tools b) Agentic AI systems can initiate multi-step goal-directed actions autonomously, while conventional AI tools respond only when queried c) Agentic AI systems are exclusively cloud-based, while conventional AI tools can run locally d) Agentic AI systems require no human oversight, while conventional AI tools require constant supervision

2. In the agent loop architecture described in this chapter, what is the purpose of the reflect phase?

a) To generate the final report delivered to business stakeholders b) To evaluate the result of an action and determine whether to adapt the plan or proceed c) To request additional permissions from system administrators d) To log the agent’s activity for compliance and audit purposes

3. An analytics team wants to maximize reliability on a high-stakes quarterly earnings forecast by running three independent agent analyses of the same dataset and comparing results. Which multi-agent coordination pattern best describes this approach?

a) Orchestrator-Worker Pattern b) Pipeline Pattern c) Peer-to-Peer Collaborative Pattern d) Competitive-Ensemble Pattern

4. Which of the following is the most accurate description of prompt injection in the context of agentic AI security?

a) A technique for improving the quality of agent responses by carefully crafting system prompts b) An attack in which malicious instructions embedded in external content attempt to override an agent’s original directives c) A method for injecting real-time data into an agent’s context window during task execution d) A configuration approach for initializing multiple agents with shared behavioral guidelines

5. According to the four-tier memory architecture described in this chapter, which memory type stores general knowledge about an organization’s data schemas, business rules, and domain-specific terminology?

a) In-Context Memory b) Episodic Memory c) Semantic Memory d) External Retrieval Memory

6. In the Vapi.ai architecture for voice AI agents, which component is responsible for converting the user’s spoken words into text that the language model can process?

a) Text-to-Speech Engine b) Language Model (LLM) c) Speech-to-Text Transcriber d) Function Calling Module

7. A retail company deploys a multi-agent analytics system that runs 24/7, with separate agents handling demand forecasting, supply chain monitoring, and pricing optimization, all coordinated by a central agent. Three months later, the system produces a recommendation that leads to a significant overstock of a seasonal product. Who bears accountability for this decision?

a) The LLM provider whose model generated the recommendation b) Accountability is distributed and must be resolved by the organization’s pre-established governance framework c) The data engineer who built the database the agent queried d) No accountability applies because the decision was made autonomously by AI

8. Which of the following statements about the JPMorgan Chase COIN program most accurately reflects its documented organizational impact?

a) The system replaced the majority of the legal and analytics professionals whose document review work it automated b) The system failed to achieve its document review accuracy targets but was retained for its speed advantages c) The time savings from automation allowed professionals to be redeployed to higher-value strategic work, expanding the analytics function’s overall contribution d) The system was discontinued due to regulatory concerns about automated contract interpretation

9. In the context of agentic AI system design, what is the primary business justification for implementing human-in-the-loop checkpoints rather than allowing fully autonomous operation?

a) Human oversight is required because current AI models are incapable of reasoning about business problems b) Human checkpoints ensure accountability, catch consequential errors before they cause harm, and satisfy regulatory requirements in sensitive domains c) Human involvement is necessary to maintain employment levels in the analytics department d) Fully autonomous operation is technically impossible with current agentic frameworks

10. A business analytics professional working in the agentic AI era is most accurately described as:

a) A data scientist who manually builds statistical models and interprets their outputs b) A system architect who designs, supervises, evaluates, and iteratively improves autonomous analytical agent systems c) A software engineer who develops the large language models that power agent reasoning d) A project manager who coordinates between AI vendors and business stakeholders without technical involvement

14Further Reading and Resources¶

Foundational Texts

Russell, S., & Norvig, P. (2020). Artificial Intelligence: A Modern Approach (4th ed.). Pearson. — The definitive academic reference for AI agent theory and architecture.

Wooldridge, M. (2009). An Introduction to MultiAgent Systems (2nd ed.). Wiley. — Comprehensive treatment of multi-agent system theory and coordination mechanisms.

Industry Reports and Research

McKinsey Global Institute. (2024). The State of AI in 2024: GenAI’s Breakout Year. McKinsey & Company.

Gartner. (2024). Hype Cycle for Artificial Intelligence. Gartner Research. — Annual tracking of AI technology maturity and enterprise adoption.

NIST. (2023). Artificial Intelligence Risk Management Framework (AI RMF 1.0). National Institute of Standards and Technology. — The authoritative U.S. government framework for AI governance.

Technical Frameworks and Documentation

LangChain Documentation: python

.langchain .com — Comprehensive documentation for the LangChain and LangGraph agent frameworks. AutoGen Documentation: microsoft

.github .io /autogen — Microsoft’s multi-agent framework with extensive examples. Vapi.ai Documentation: docs.vapi.ai — Platform documentation for voice AI agent deployment.

OpenAI Assistants API: platform

.openai .com /docs /assistants — Official documentation for OpenAI’s managed agent platform.

Academic Journals

Journal of Artificial Intelligence Research — Peer-reviewed research on AI systems including agent architectures.

Harvard Business Review — Regularly publishes accessible analyses of AI’s organizational impact.

MIT Sloan Management Review — Strong coverage of AI governance, business strategy, and analytics leadership.

Chapter 6 authored by Dr. Ernesto Lee for ISM 6405 Advanced Business Analytics, Florida Atlantic University. All company names, case studies, and platform references are used for educational purposes. The field of agentic AI is evolving rapidly; students are encouraged to supplement this chapter with current industry publications and platform documentation.