Chapter 3: Natural Language Processing

Teaching Machines to Understand, Interpret, and Generate Human Language

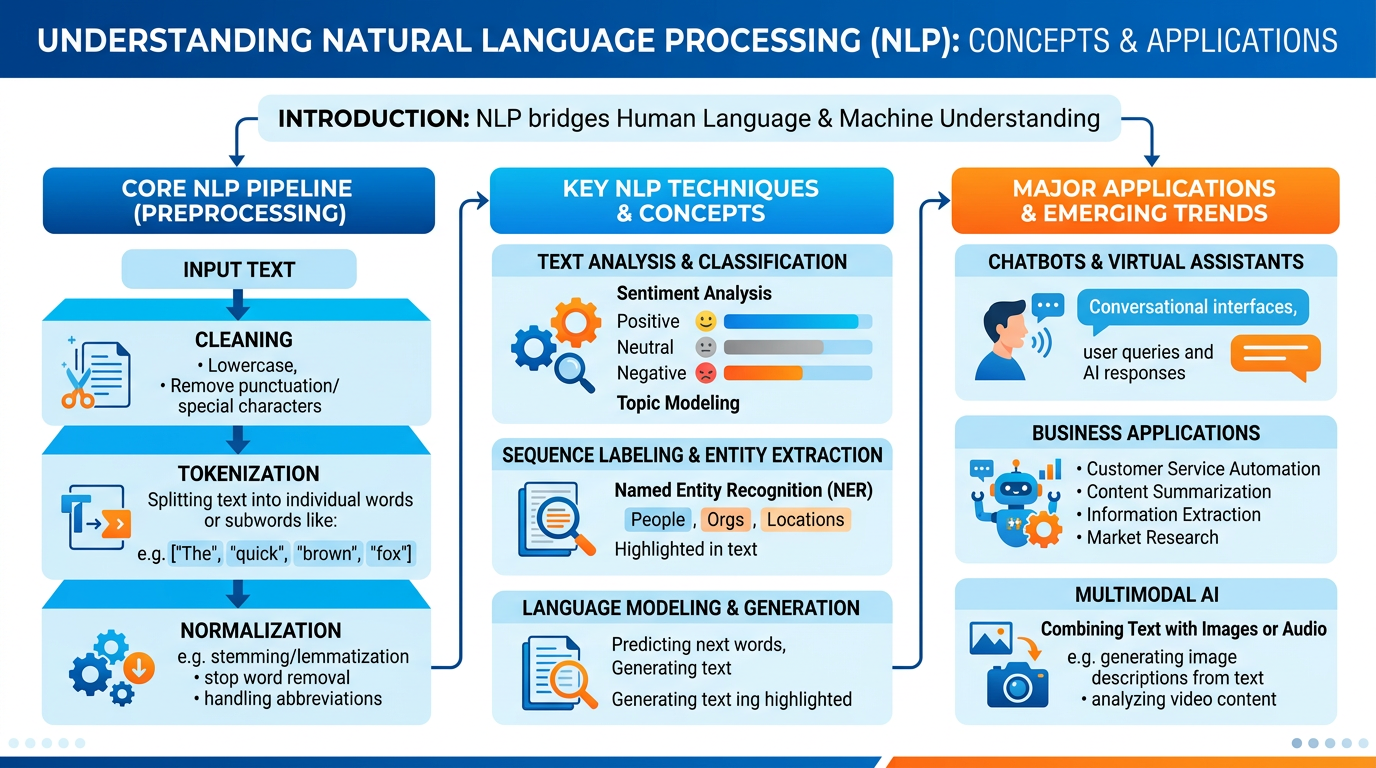

Figure 1: An illustrated overview of Natural Language Processing, from text preprocessing pipelines to sentiment analysis, chatbot architectures, multimodal AI, and real-world business applications in recruitment and customer experience.

“The tongue has the power of life and death, and those who love it will eat its fruit.”

Proverbs 18:21 (NIV)

Language is the most distinctively human capability we possess. Through language, we express ideas, negotiate agreements, build relationships, share knowledge, tell stories, and worship our Creator. Language allows a CEO to cast a vision that inspires thousands of employees, a teacher to unlock understanding in a student’s mind, a pastor to deliver a sermon that transforms hearts, and a customer service representative to turn a frustrated caller into a loyal advocate. Language is not merely a tool for communication — it is the fabric of human civilization itself.

For decades, teaching machines to understand language remained one of the most stubborn challenges in artificial intelligence. Unlike chess or mathematics, where rules are precise and unambiguous, human language is gloriously messy. We use sarcasm, metaphor, irony, and cultural references. We leave sentences incomplete, shift topics mid-conversation, and rely on shared context that would baffle any algorithm. The sentence “I saw her duck” could mean you witnessed a woman lower her head — or you observed her pet waterfowl. Only context, and often deeply human context, reveals which meaning is intended.

Yet in the past decade, the field of Natural Language Processing has achieved breakthroughs that seemed impossible just a few years ago. Today, AI systems can translate between hundreds of languages in real time, generate business reports indistinguishable from human writing, analyze millions of customer reviews to extract actionable insights, and carry on extended conversations that feel remarkably natural. These capabilities are not academic curiosities — they are transforming how businesses operate, compete, and serve their customers.

In this chapter, we will explore NLP from the ground up. You will learn how machines process text — from raw characters to meaningful understanding — and how these capabilities power the chatbots, search engines, translation services, and AI assistants you interact with daily. We will examine how businesses use NLP for sentiment analysis, customer experience, and recruitment. We will explore the frontier of multimodal AI, where systems like Google Gemini combine language understanding with visual perception. And as always, we will consider these technologies through the lens of Christian stewardship, asking how we can use the power of language technology in ways that honor God and serve human flourishing.

1 Understanding Natural Language Processing

1.1 What Is NLP?

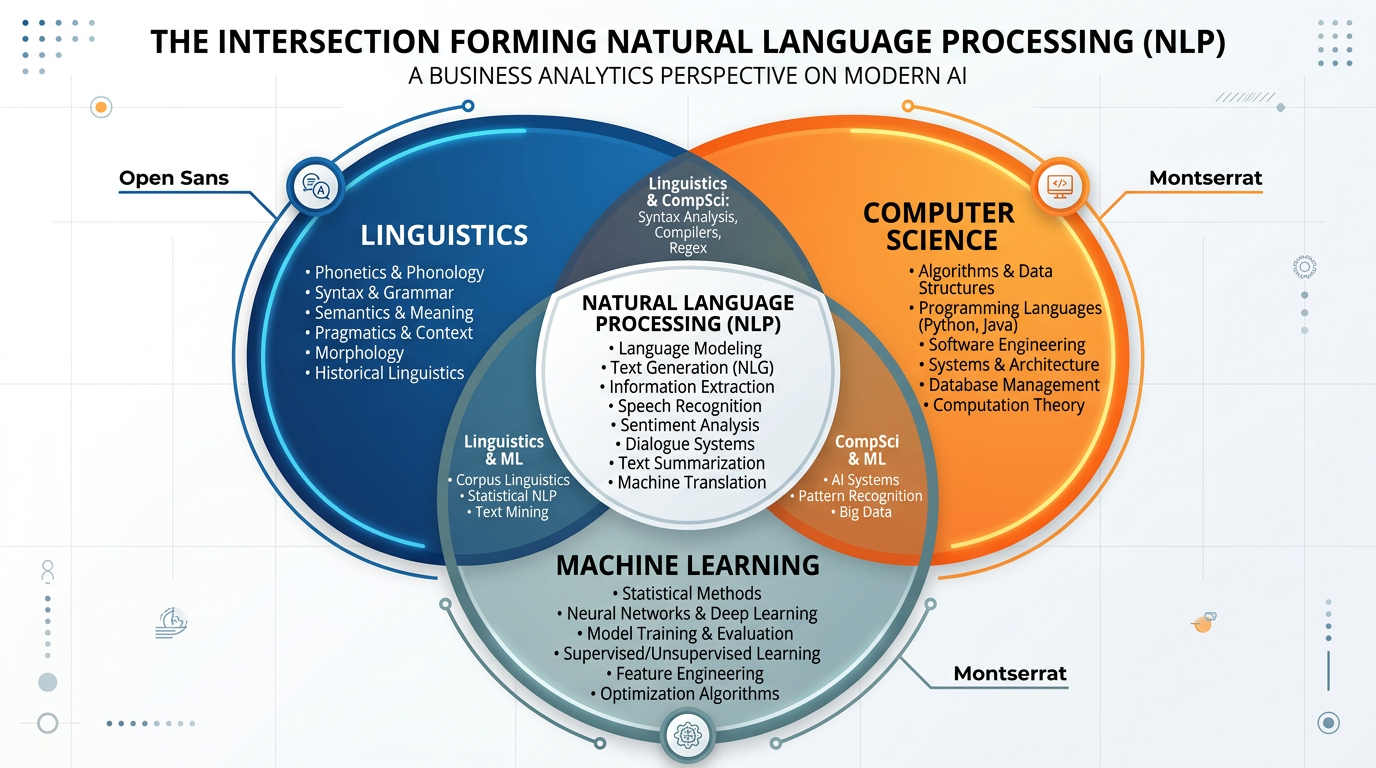

NLP sits at the intersection of several disciplines. Linguists bring understanding of grammar, syntax, and semantics — the structural rules that govern how languages work. Computer scientists contribute algorithms and data structures for processing text efficiently. Machine learning researchers provide the statistical and neural network techniques that allow systems to learn language patterns from massive datasets rather than relying on hand-coded rules.

Figure 2: NLP exists at the intersection of linguistics, computer science, and machine learning, with each discipline contributing essential knowledge to the field.

The importance of NLP in the modern business landscape is hard to overstate. By commonly cited estimates, over 80% of business data is unstructured text: emails, social media posts, customer reviews, contracts, reports, and chat transcripts. Without NLP, this vast reservoir of information remains inaccessible to automated analysis. With NLP, businesses can extract insights, automate responses, detect trends, and make data-driven decisions at a scale no team of human analysts could match.

1.2 The Challenge of Human Language

Why is language so difficult for machines? To appreciate the magnitude of the NLP challenge, consider the many layers of complexity in human communication:

The same word can have multiple meanings. “Bank” could refer to a financial institution or a river bank. “Light” could mean illumination, low weight, or a shade of color. English alone has over 170,000 words in current use, many with multiple definitions.

The same sentence can be parsed in multiple ways. “I saw the man with the telescope” — did you use a telescope to see him, or did you see a man who was holding a telescope?

Meaning depends on context, speaker intent, and shared knowledge. “Can you pass the salt?” is technically a yes/no question about ability, but pragmatically it is a request. “Nice weather we’re having” during a hurricane is sarcasm.

Language varies across cultures, regions, generations, and social groups. British English, American English, and Indian English use different vocabulary, spellings, and idioms. Slang evolves rapidly. Professional jargon differs across industries.

1.3 The Two Pillars of NLP: Understanding and Generation

Modern NLP encompasses two complementary capabilities:

Natural Language Understanding (NLU) focuses on enabling machines to comprehend human language: to extract meaning, intent, and structure from text or speech.

Key NLU Tasks:

Intent Recognition: Determining what a user wants (e.g., “I want to cancel my order” → intent: order_cancellation)

Entity Extraction: Identifying key information (names, dates, locations, product names)

Sentiment Analysis: Determining emotional tone (positive, negative, neutral)

Text Classification: Categorizing documents by topic, urgency, or department

Relationship Extraction: Identifying how entities relate to each other

Business Applications:

Email routing and prioritization

Customer complaint categorization

Contract analysis and due diligence

Social media monitoring

Natural Language Generation (NLG) focuses on enabling machines to produce human language: to generate coherent, contextually appropriate text or speech.

Key NLG Tasks:

Text Summarization: Condensing long documents into key points

Content Generation: Creating articles, reports, product descriptions

Translation: Converting text from one language to another

Dialogue Generation: Producing conversational responses

Data-to-Text: Converting structured data into narrative reports

Business Applications:

Automated report writing (financial summaries, analytics reports)

Product description generation for e-commerce

Personalized marketing content

Real-time translation for global operations

2 Text Preprocessing: Preparing Language for Machines

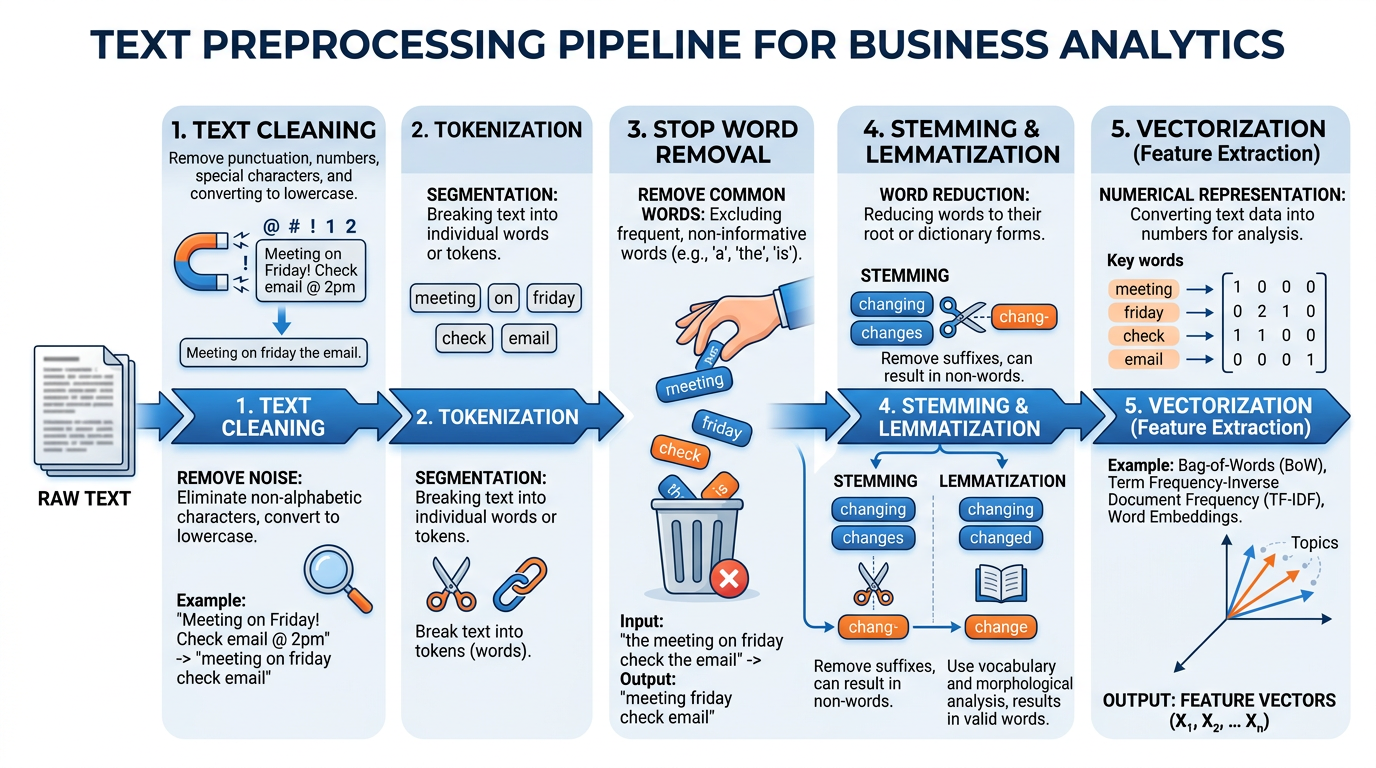

Before any NLP system can understand text, the raw text must be cleaned, normalized, and transformed into a format that algorithms can process. This preprocessing pipeline is a critical — and often underappreciated — step in any NLP application. As the saying goes in data science: garbage in, garbage out. The quality of your preprocessing directly determines the quality of your NLP results.

2.1 The Text Preprocessing Pipeline

Figure 3: The text preprocessing pipeline transforms raw, messy human text into clean, structured data that NLP algorithms can process effectively.

Let us walk through each stage of the preprocessing pipeline using a practical example. Imagine a business has received the following customer review:

“I LOVED the new iPhone 15 Pro!!! The camera is absolutely AMAZING 📸 — best I’ve ever used. Bought it from @BestBuy on 10/15/2024 for $999. #Apple #iPhone15Pro”

This review is perfectly understandable to a human reader, but it contains numerous challenges for a machine: mixed case, punctuation, emojis, special characters, hashtags, mentions, dates, currency, and abbreviations. The preprocessing pipeline handles each of these systematically.

2.2 Step 1: Text Cleaning

The first step removes noise — characters and elements that do not contribute to the meaning of the text:

```python
import re

raw_text = "I LOVED the new iPhone 15 Pro!!! The camera is absolutely AMAZING 📸 — best I've ever used. Bought it from @BestBuy on 10/15/2024 for $999. #Apple #iPhone15Pro"

# Remove URLs, @mentions, and #hashtags
cleaned = re.sub(r'http\S+|@\S+|#\S+', '', raw_text)
# Remove punctuation, emojis, and other special characters
cleaned = re.sub(r'[^\w\s]', '', cleaned)
# Normalize whitespace
cleaned = re.sub(r'\s+', ' ', cleaned).strip()

print(cleaned)
# Output: "I LOVED the new iPhone 15 Pro The camera is absolutely AMAZING best Ive ever used Bought it from on 10152024 for 999"
```

Text Cleaning Example

2.3 Step 2: Tokenization

Tokenization seems simple — just split on spaces, right? In practice, it is surprisingly complex:

Word tokenization is the most intuitive approach: split text into individual words.

```python
text = "I loved the new iPhone 15 Pro"
tokens = text.split()
# ['I', 'loved', 'the', 'new', 'iPhone', '15', 'Pro']
```

Challenge: What about “New York”? “ice cream”? “don’t”? Word boundaries are not always obvious.
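To make the word-boundary challenge concrete, here is a minimal sketch of a regex-based tokenizer that keeps contractions and decimal numbers together and separates punctuation. The pattern and the `tokenize` name are illustrative assumptions, not a standard; production systems typically rely on a library tokenizer.

```python
import re

# Illustrative pattern (an assumption, not a standard):
#   1. letters with optional internal apostrophes (don't, I've)
#   2. digits with optional decimal or thousands separators (3.14, 1,000)
#   3. any single punctuation character
TOKEN_PATTERN = re.compile(r"[A-Za-z]+(?:'[A-Za-z]+)*|\d+(?:[.,]\d+)*|[^\w\s]")

def tokenize(text):
    """Split text into word, number, and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)

print(tokenize("Don't split 3.14 badly, please!"))
# ["Don't", 'split', '3.14', 'badly', ',', 'please', '!']
```

This sketch still treats “New York” and “ice cream” as two tokens each; recognizing multi-word expressions requires a phrase list or a statistical model.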

Modern LLMs use subword tokenization (like Byte Pair Encoding, or BPE), which breaks words into smaller, often meaningful fragments:

```python
# Illustrative BPE tokenization of "unhappiness"
# (actual splits depend on the vocabulary the tokenizer was trained on)
tokens = ['un', 'happi', 'ness']

# Illustrative BPE tokenization of "ChatGPT"
tokens = ['Chat', 'G', 'PT']
```

Advantage: Handles unknown words, technical terms, and multiple languages efficiently. ChatGPT, Claude, and Gemini all process text with subword tokenizers of this kind.
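The idea behind Byte Pair Encoding can be sketched in a few lines: start from single characters and repeatedly merge the most frequent adjacent pair. The toy corpus and the function names below are assumptions made for illustration; real tokenizers are trained on far larger corpora and usually operate on bytes.

```python
from collections import Counter

def merge_pair(symbols, pair):
    """Merge each adjacent occurrence of `pair` in a symbol sequence."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)

def learn_bpe(words, num_merges):
    """Learn an ordered list of merge rules from a list of words (toy version)."""
    # Each word starts as a tuple of single characters.
    vocab = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count how often each adjacent symbol pair occurs across the corpus.
        pairs = Counter()
        for symbols, freq in vocab.items():
            for a, b in zip(symbols, symbols[1:]):
                pairs[(a, b)] += freq
        if not pairs:
            break
        best = max(pairs, key=pairs.get)
        merges.append(best)
        # Rewrite every word with the chosen pair merged into one symbol.
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges

def apply_bpe(word, merges):
    """Tokenize a word by applying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

# Hypothetical toy corpus, invented for this example.
corpus = ["happy", "happier", "happiness", "unhappy", "sadness", "kindness"]
merges = learn_bpe(corpus, num_merges=10)
print(apply_bpe("unhappiness", merges))
```

Because “unhappiness” never appears in the toy corpus, the output shows how an unseen word is still covered by fragments learned from related words.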

Sentence tokenization is used for tasks like summarization, where text is split into sentences:

```python
text = "Dr. Lee teaches at PBA. He loves AI."
sentences = ['Dr. Lee teaches at PBA.', 'He loves AI.']
# Note: "Dr." doesn't trigger a sentence break
```

Challenge: Abbreviations (Dr., Mr., Inc.) and decimal numbers (3.14) create false sentence boundaries.
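One way to avoid those false boundaries is to split only on punctuation followed by whitespace and to skip periods that end a known abbreviation. The abbreviation list and the `split_sentences` name are assumptions for this sketch; library sentence tokenizers use larger lists or trained models.

```python
import re

# Assumed, non-exhaustive list of abbreviations that end in a period.
ABBREVIATIONS = {"dr", "mr", "mrs", "ms", "inc", "prof", "vs", "etc"}

def split_sentences(text):
    """Split after ., !, or ? followed by whitespace, skipping known abbreviations."""
    sentences, start = [], 0
    for match in re.finditer(r"[.!?]\s+", text):
        candidate = text[start:match.start() + 1]
        last_word = candidate.split()[-1].rstrip(".!?").lower()
        if last_word in ABBREVIATIONS:
            continue  # e.g. "Dr." is not a sentence boundary
        sentences.append(candidate.strip())
        start = match.end()
    tail = text[start:].strip()
    if tail:
        sentences.append(tail)
    return sentences

print(split_sentences("Dr. Lee teaches at PBA. He loves AI. Pi is about 3.14."))
# ['Dr. Lee teaches at PBA.', 'He loves AI.', 'Pi is about 3.14.']
```

The decimal in 3.14 is safe because its period is not followed by whitespace. The sketch has its own blind spot: a sentence that genuinely ends with an abbreviation such as “Inc.” will not be split.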

2.4 Step 3: Normalization

Normalization standardizes text to reduce variation without losing meaning:

Table 1: Common Normalization Techniques

| Technique | Example | Purpose |
|---|---|---|
| Lowercasing | “AMAZING” → “amazing” | Treats “Apple” and “apple” as the same token |
| Stemming | “running”, “runs” → “run” | Strips suffixes to approximate the root form (aggressive, sometimes inaccurate; irregular forms like “ran” are not handled) |
| Lemmatization | “better” → “good”, “ran” → “run” | Reduces words to dictionary form (more accurate than stemming) |
| Accent Removal | “café” → “cafe” | Standardizes international characters |
| Number Normalization | “10/15/2024” → “DATE”, “$999” → “CURRENCY” | Replaces specific values with category tokens |
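Three of these techniques (lowercasing, accent removal, and number normalization) can be sketched with the Python standard library alone. The regular expressions below are simplifying assumptions that cover only US-style dates and dollar amounts; stemming and lemmatization are left out because they need a library such as NLTK or spaCy.

```python
import re
import unicodedata

def strip_accents(text):
    """Decompose accented characters and drop the combining marks."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))

def normalize(text):
    """Remove accents, lowercase, then replace dates and dollar amounts with category tokens."""
    text = strip_accents(text).lower()
    text = re.sub(r"\b\d{1,2}/\d{1,2}/\d{4}\b", "DATE", text)        # e.g. 10/15/2024
    text = re.sub(r"\$\d+(?:,\d{3})*(?:\.\d+)?", "CURRENCY", text)   # e.g. $999 or $1,299.50
    return text

print(normalize("AMAZING café visit on 10/15/2024 for $999"))
# amazing cafe visit on DATE for CURRENCY
```

Lowercasing happens before the substitutions so that the placeholder tokens stay in upper case and remain easy to distinguish from ordinary words.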

2.5 Step 4: Stop Word Removal

Stop words are extremely common words (the, is, at, which, on) that appear frequently but carry little meaning for most analyses. Removing them reduces noise and improves processing efficiency.

```python
import nltk
from nltk.corpus import stopwords

nltk.download('stopwords', quiet=True)  # One-time download of the stop word lists
stop_words = set(stopwords.words('english'))

tokens = ['i', 'loved', 'the', 'new', 'iphone', 'pro', 'the', 'camera', 'is', 'amazing']
# Keep only the tokens that are not stop words
filtered = [word for word in tokens if word not in stop_words]
# ['loved', 'new', 'iphone', 'pro', 'camera', 'amazing']
```

Stop Word Removal Example

2.6 Step 5: Vectorization (From Words to Numbers)

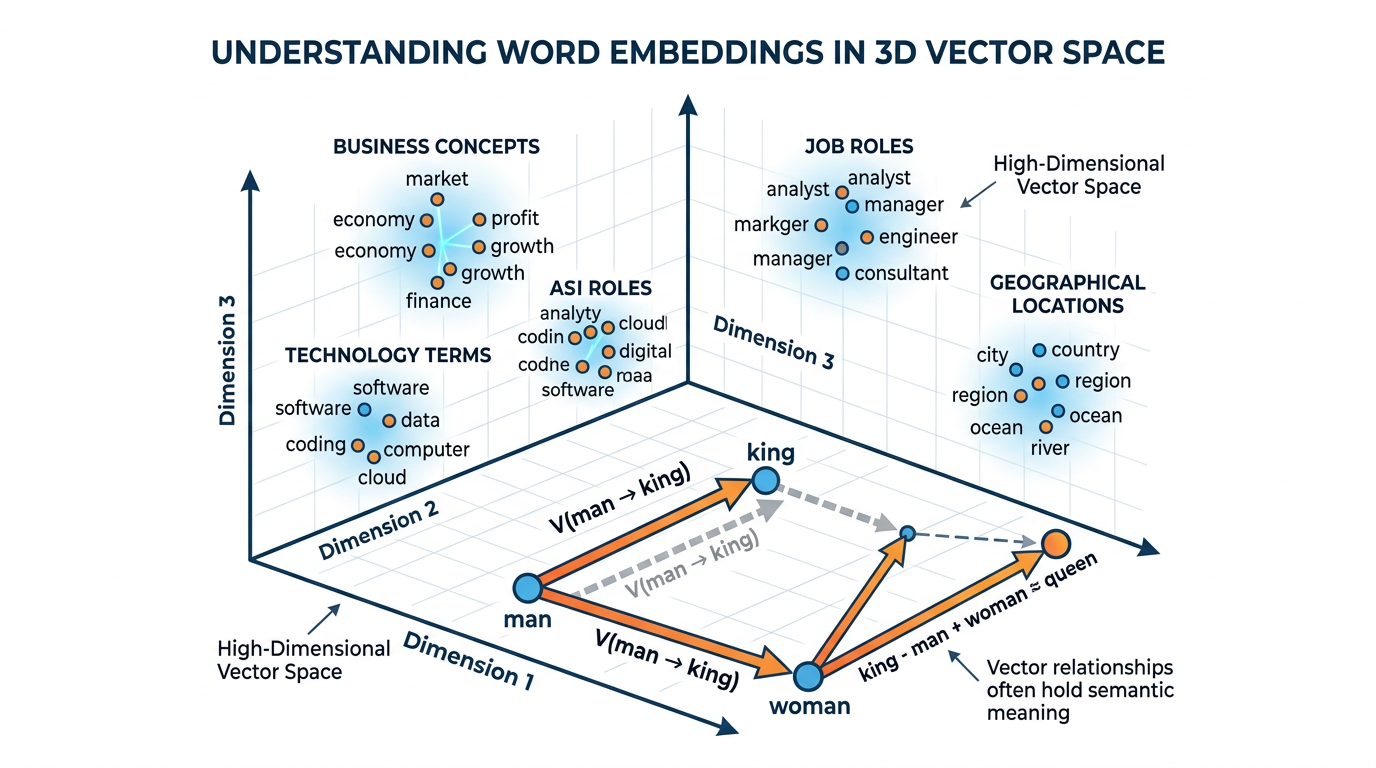

Machines cannot process words directly — they work with numbers. Vectorization converts text tokens into numerical representations (vectors) that capture semantic meaning.

Figure 4: Word embeddings map words into a high-dimensional vector space where semantically similar words are positioned close together. The famous “king - man + woman = queen” analogy illustrates how these vectors capture meaningful relationships.

Bag of Words is the simplest approach: count how many times each word appears.

```python
doc1 = "AI is great for business"
doc2 = "Business needs great AI"

# Vocabulary (after lowercasing): [AI, is, great, for, business, needs]
# doc1 vector: [1, 1, 1, 1, 1, 0]
# doc2 vector: [1, 0, 1, 0, 1, 1]
```

Limitation: Ignores word order entirely. “Dog bites man” and “Man bites dog” produce the same vector.
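The vectors above can be computed rather than written by hand. The following is a minimal sketch using only the standard library; the helper names are illustrative, and libraries such as scikit-learn provide production-ready equivalents.

```python
from collections import Counter

def build_vocabulary(documents):
    """Collect unique lowercase words in first-seen order."""
    vocabulary = []
    for doc in documents:
        for word in doc.lower().split():
            if word not in vocabulary:
                vocabulary.append(word)
    return vocabulary

def bag_of_words(document, vocabulary):
    """Count how often each vocabulary word appears in the document."""
    counts = Counter(document.lower().split())
    return [counts[word] for word in vocabulary]

docs = ["AI is great for business", "Business needs great AI"]
vocab = build_vocabulary(docs)
print(vocab)                         # ['ai', 'is', 'great', 'for', 'business', 'needs']
print(bag_of_words(docs[0], vocab))  # [1, 1, 1, 1, 1, 0]
print(bag_of_words(docs[1], vocab))  # [1, 0, 1, 0, 1, 1]

# Word order is lost: both sentences map to the same vector.
order_vocab = build_vocabulary(["dog bites man"])
print(bag_of_words("dog bites man", order_vocab) == bag_of_words("man bites dog", order_vocab))  # True
```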

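Term Frequency–Inverse Document Frequency (TF-IDF) weights words by how important they are to a specific document relative to the entire collection.

$$\text{TF-IDF}(t, d) = \text{TF}(t, d) \times \log\left(\frac{N}{\text{DF}(t)}\right)$$

Where:

$\text{TF}(t, d)$ = frequency of term $t$ in document $d$

$N$ = total number of documents

$\text{DF}(t)$ = number of documents containing term $t$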

Common words (the, is) get low scores; distinctive words get high scores.
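A short sketch shows the weighting at work. It assumes the common unsmoothed definition, term frequency multiplied by log(N / document frequency), with a raw count as the term frequency; libraries often apply smoothing or length normalization, so their numbers will differ. The three documents are made up for the example.

```python
import math
from collections import Counter

def tf_idf(documents):
    """Return one {term: score} dict per document, using tf * log(N / df)."""
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # Document frequency: the number of documents that contain each term.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        scores.append({
            term: count * math.log(n_docs / doc_freq[term])
            for term, count in term_freq.items()
        })
    return scores

# Hypothetical mini-corpus of three product comments.
docs = [
    "the camera is amazing",
    "the battery is weak",
    "the screen is bright",
]
for doc_scores in tf_idf(docs):
    print({term: round(score, 2) for term, score in doc_scores.items()})
```

Here “the” and “is” appear in all three documents, so their score is log(3/3) = 0, while a word that appears in only one document, such as “camera”, scores log(3), about 1.10.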

Word embeddings, produced by approaches like Word2Vec, GloVe, and BERT, are dense vector representations in which semantic relationships are preserved:

“king” - “man” + “woman” ≈ “queen”

“Paris” - “France” + “Germany” ≈ “Berlin”

These embeddings capture nuanced meaning in a space of roughly 100 to 1,000 dimensions, far surpassing simple counting methods.
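The analogy arithmetic can be illustrated with a toy example. The three-dimensional vectors below are invented so that the arithmetic works out; real embeddings are learned from large corpora, and the nearest neighbor is found among many thousands of words.

```python
import math

# Hypothetical 3-dimensional vectors, hand-picked for illustration only.
EMBEDDINGS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.2, 0.8],
    "apple": [0.2, 0.5, 0.5],
}

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def analogy(a, b, c):
    """Return the word closest to vector(a) - vector(b) + vector(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(EMBEDDINGS[a], EMBEDDINGS[b], EMBEDDINGS[c])]
    candidates = (word for word in EMBEDDINGS if word not in {a, b, c})
    return max(candidates, key=lambda word: cosine_similarity(target, EMBEDDINGS[word]))

print(analogy("king", "man", "woman"))  # queen
```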

3 Sentiment Analysis: Understanding Emotions in Text

3.1 What Is Sentiment Analysis?

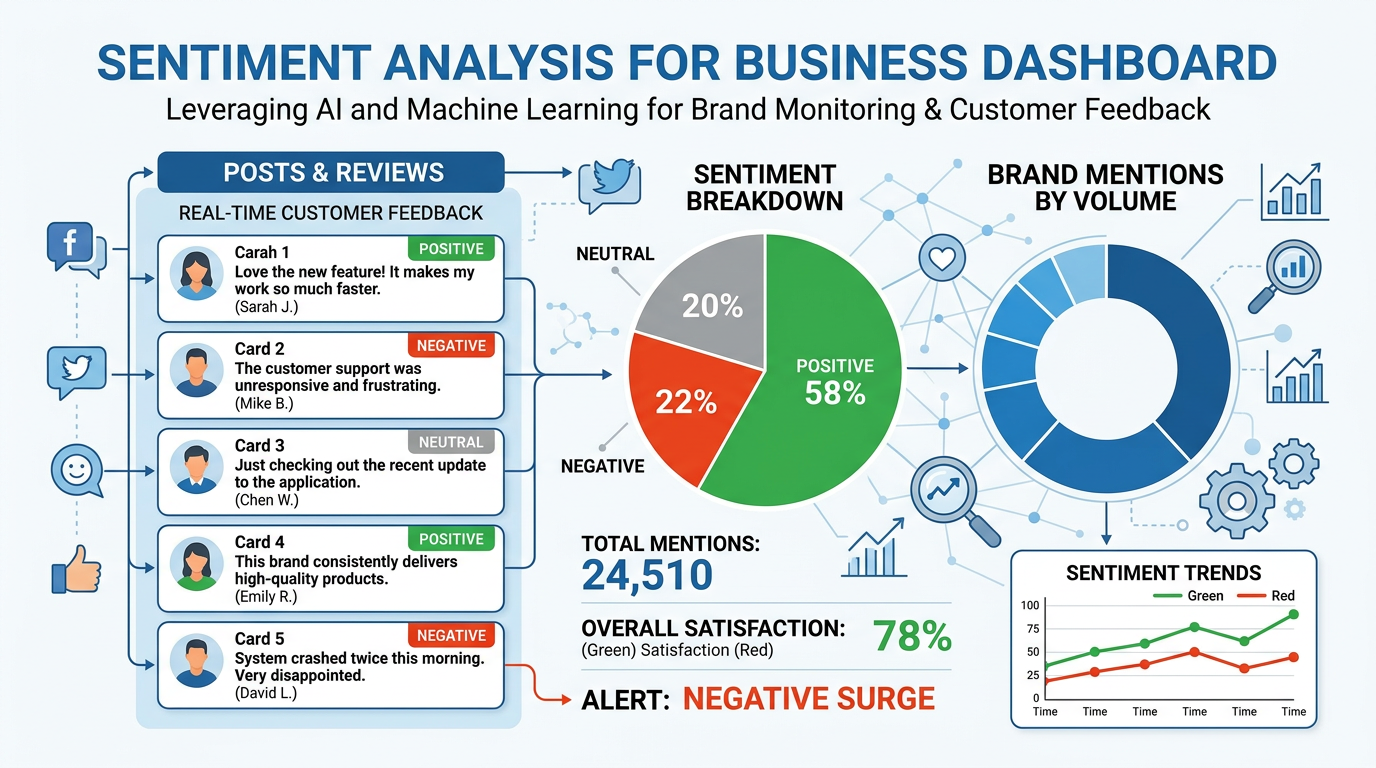

Sentiment analysis is one of the most commercially valuable NLP applications. It allows businesses to monitor brand perception across millions of social media posts, analyze customer reviews to identify product strengths and weaknesses, track employee satisfaction through survey responses, gauge market sentiment from financial news and analyst reports, and detect emerging crises before they escalate.

Figure 5: Sentiment analysis in action. Businesses use NLP to automatically classify customer feedback, social media posts, and reviews to track brand perception and identify issues in real time.

3.2 Levels of Sentiment Analysis

Sentiment analysis operates at multiple levels of granularity:

Document-level analysis classifies an entire document (review, email, article) as positive, negative, or neutral.

Example: “This restaurant has great food, friendly staff, and a beautiful atmosphere.” → Positive

Sentence-level analysis examines individual sentences within a document, recognizing that a single review may contain mixed sentiments.

Example: “The food was excellent (+) but the service was terribly slow (−).”

Aspect-based analysis identifies sentiment toward specific aspects or features of a product or service.

Example: “Battery life: Positive | Screen quality: Positive | Price: Negative | Customer support: Neutral”
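The finer-grained levels can be sketched with a simple heuristic: split a review into clauses, score each clause, and attach the result to whichever aspect the clause mentions. The keyword lists and the tiny lexicon are hypothetical; commercial systems learn these associations from labeled data.

```python
import re

# Hypothetical aspect keywords and sentiment lexicon, invented for this example.
ASPECT_KEYWORDS = {
    "food": {"food", "meal", "dish"},
    "service": {"service", "staff", "waiter"},
}
LEXICON = {"excellent": 1, "great": 1, "friendly": 1, "slow": -1, "terrible": -1, "rude": -1}

def label(score):
    """Map a numeric score to a sentiment label."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

def aspect_sentiment(review):
    """Score each clause and attach the result to any aspect the clause mentions."""
    results = {}
    # Treat sentence punctuation and contrast words as clause boundaries.
    clauses = re.split(r"[.!?;]|\bbut\b|\bhowever\b", review.lower())
    for clause in clauses:
        words = re.findall(r"[a-z']+", clause)
        score = sum(LEXICON.get(word, 0) for word in words)
        for aspect, keywords in ASPECT_KEYWORDS.items():
            if keywords & set(words):
                results[aspect] = label(score)
    return results

print(aspect_sentiment("The food was excellent but the service was terribly slow."))
# {'food': 'positive', 'service': 'negative'}
```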

3.3 How Sentiment Analysis Works

Modern sentiment analysis systems use a combination of approaches:

Rule-based approaches use sentiment lexicons — dictionaries that assign positive or negative scores to words. The word “excellent” might score +3, while “terrible” scores −3. The overall sentiment is calculated by summing scores. These systems are transparent and explainable but struggle with sarcasm, context, and nuance.

Machine learning approaches train classifiers on labeled datasets of text with known sentiments. Algorithms like Naïve Bayes, Support Vector Machines, and Random Forests learn patterns that distinguish positive from negative text. These handle more complexity but require large training datasets.

Deep learning approaches use transformer models (like BERT or GPT) that understand context, word relationships, and nuance. These achieve the highest accuracy but require significant computational resources and can be difficult to interpret.
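The rule-based approach is simple enough to sketch directly. The lexicon below is a hypothetical handful of words (the +3 and −3 values mirror the example in the text); published lexicons contain thousands of scored entries.

```python
import re

# Hypothetical sentiment lexicon; real lexicons are far larger.
SENTIMENT_LEXICON = {
    "excellent": 3, "great": 2, "nice": 1,
    "slow": -1, "bad": -2, "terrible": -3,
}

def lexicon_score(text):
    """Sum the lexicon scores of every word in the text."""
    words = re.findall(r"[a-z]+", text.lower())
    return sum(SENTIMENT_LEXICON.get(word, 0) for word in words)

def classify(text):
    """Label text as positive, negative, or neutral from its summed score."""
    score = lexicon_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

print(classify("Excellent camera, great battery"))       # positive
print(classify("Terrible support and slow shipping"))    # negative
print(classify("Great, my flight was cancelled again"))  # positive (the sarcasm is missed)
```

The last call shows the transparency trade-off: every score can be traced to a specific word, but the system has no way to notice that “Great” is being used sarcastically.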

3.4 Real-World Sentiment Analysis: Case Studies

Case Study: Airbnb’s Review Analysis System

Airbnb processes millions of guest and host reviews in multiple languages. Their NLP system performs aspect-based sentiment analysis to extract feedback on specific dimensions — cleanliness, accuracy of listing, communication, location, check-in experience, and value. This granular analysis helps hosts understand exactly what to improve, allows Airbnb to identify consistently underperforming listings, enables personalized recommendations based on what aspects matter most to each traveler, and provides early warning of safety or quality issues.

By analyzing sentiment trends over time, Airbnb can detect when a previously excellent host begins to decline — perhaps due to property maintenance issues or changing neighborhood conditions — and intervene proactively.

Case Study: JPMorgan Chase’s COiN Platform

JPMorgan Chase’s Contract Intelligence (COiN) platform uses NLP to analyze legal documents — extracting key terms, obligations, and risks from commercial loan agreements. What once required 360,000 hours of human lawyer time per year now takes seconds. The system performs sentiment-adjacent analysis, identifying clauses that represent risks (negative sentiment from a legal perspective) versus standard terms (neutral). This has reduced contract review errors by an estimated 90% and freed legal professionals to focus on complex negotiations that require human judgment.

3.5 The Sarcasm Problem

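One of the most persistent challenges in sentiment analysis is detecting sarcasm and irony. A remark such as “Nice weather we’re having” made during a hurricane contains only positive words, so a system that scores words literally will label it positive even though the speaker means the opposite.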

4 Chatbots and Conversational AI

4.1 The Rise of the Chatbot

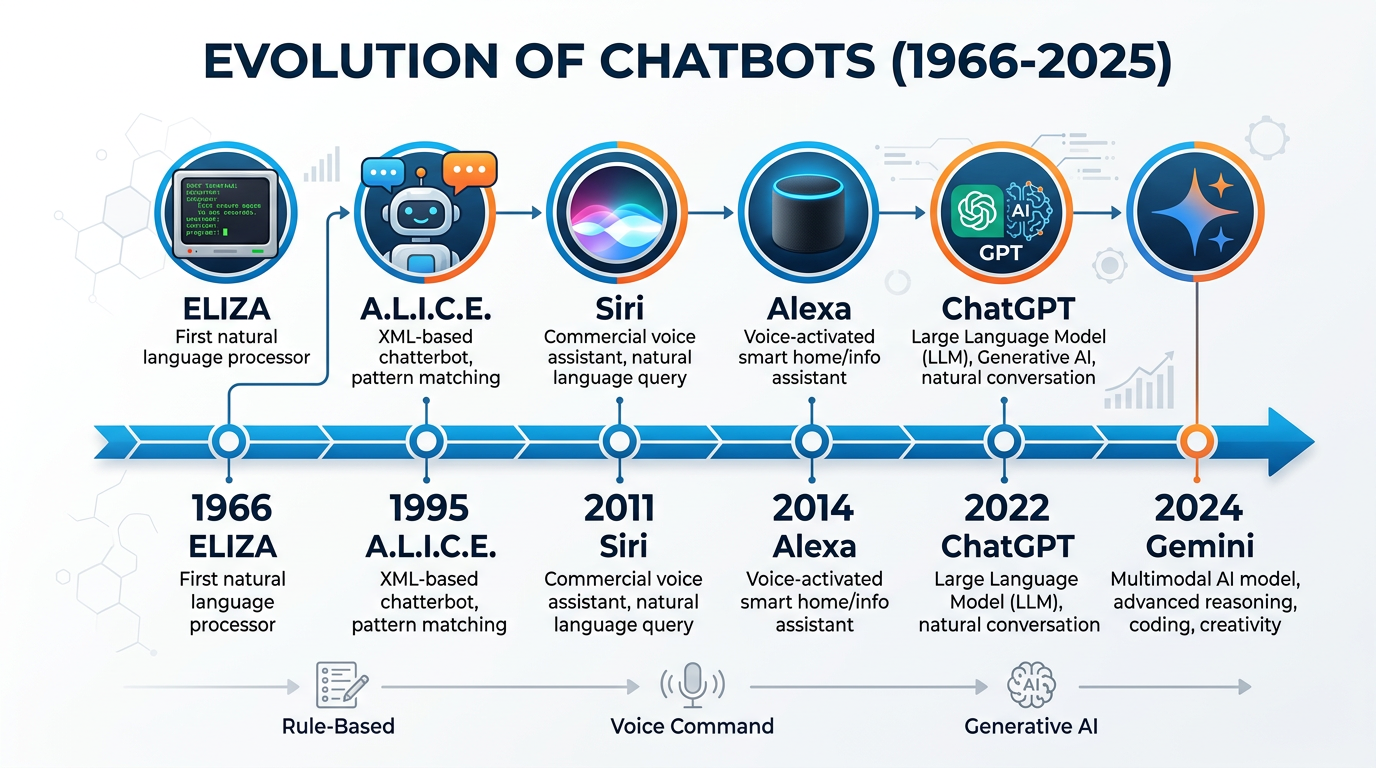

Few NLP applications have impacted business as directly and visibly as chatbots. From the simple FAQ bots on retail websites to sophisticated AI assistants like ChatGPT and Google Gemini, conversational AI has become one of the primary interfaces through which consumers and employees interact with technology.

Figure 6: The evolution of chatbots, from ELIZA’s pattern matching in 1966 to today’s LLM-powered conversational assistants capable of nuanced, context-aware dialogue.

4.2 Types of Chatbots

How rule-based chatbots work: They follow predefined decision trees and keyword matching rules. If the user says X, respond with Y.

Strengths:

Predictable, consistent responses

Easy to build and maintain

No hallucination risk

Low computational cost

Limitations:

Cannot handle unexpected questions

Brittle — small variations in phrasing break them

Cannot learn or improve from conversations

Frustrating user experience for complex queries

Best for: FAQ answering, appointment scheduling, order status checks — structured, repetitive interactions.
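A rule-based bot of this kind fits in a few lines, which also makes its brittleness easy to see. The rules and replies below are hypothetical examples, not taken from any real product.

```python
import re

# Hypothetical rules: each maps trigger keywords to a canned reply.
RULES = [
    ({"hours", "open", "close"}, "We are open 9am to 5pm, Monday through Friday."),
    ({"order", "status", "tracking"}, "Please enter your order number to check its status."),
    ({"appointment", "schedule", "book"}, "I can help with that. What day works for you?"),
]
FALLBACK = "Sorry, I did not understand. Let me connect you with a human agent."

def respond(message):
    """Return the reply of the first rule whose keywords appear in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keywords, reply in RULES:
        if keywords & words:
            return reply
    return FALLBACK

print(respond("What are your hours?"))
print(respond("Where's my package?"))  # no rule mentions "package", so the fallback is used
```

The second question is about order status, but because it is phrased without any trigger keyword, the bot cannot answer it.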

How machine-learning-based chatbots work: They use machine learning and NLP to understand intent, extract entities, and generate contextually appropriate responses. They may be built on platforms like Dialogflow, IBM Watson, or Microsoft Bot Framework.

Strengths:

Handle varied phrasing (“cancel my order” = “I want to return this” = “stop my purchase”)

Learn from conversation data

Can escalate to human agents when uncertain

Support multiple languages

Limitations:

Require training data and ongoing tuning

May not handle completely novel situations

Can still misunderstand complex or ambiguous queries

Best for: Customer service, IT help desks, HR inquiry systems — semi-structured interactions requiring flexibility.

How LLM-powered chatbots work: They are built on large language models (GPT-4, Claude, Gemini) that generate responses from vast training data. They can understand complex queries, maintain extended conversations, and produce creative, nuanced responses.

Strengths:

Handle virtually any topic or phrasing

Generate human-quality responses

Maintain conversation context over many turns

Can be customized with system prompts and RAG (Retrieval-Augmented Generation)

Limitations:

Can hallucinate (generate plausible but false information)

Higher computational cost

Less predictable than rule-based systems

May generate inappropriate responses without guardrails

Best for: Knowledge work support, creative brainstorming, complex customer interactions, internal research assistants.

4.3 Chatbot Architecture: How Conversations Work

Understanding how a chatbot processes a conversation helps business professionals make better decisions about which technology to deploy:

Consider this example conversation with a business chatbot:

Customer: “I ordered a blue sweater last Tuesday but received a green one. I need to exchange it.”

The NLU engine processes this message to identify:

Intent: product_exchange

Entities: product = “sweater,” color_ordered = “blue,” color_received = “green,” order_date = “last Tuesday”

Sentiment: negative (frustrated but not angry)

The dialogue manager uses these extracted elements to look up the order, verify the discrepancy, and generate an appropriate response — perhaps offering a prepaid return label and confirming the correct item will be shipped.
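The NLU step for this message can be approximated with keyword and pattern matching. This is a deliberately simplified sketch: the intent names other than product_exchange, the vocabularies, and the `parse_message` function are all assumptions, and production NLU engines use trained models rather than word lists.

```python
import re

# Hypothetical intent keywords and entity vocabularies for a clothing retailer.
INTENT_KEYWORDS = {
    "product_exchange": {"exchange", "swap"},
    "order_cancellation": {"cancel"},
    "refund_request": {"refund"},
}
COLORS = {"blue", "green", "red", "black", "white"}
PRODUCTS = {"sweater", "shirt", "jacket"}
DATE_PATTERN = r"\b(?:last|this|next)\s+(?:mon|tues|wednes|thurs|fri|satur|sun)day\b"

def parse_message(message):
    """Extract a rough intent and a few entities from a customer message."""
    text = message.lower()
    words = re.findall(r"[a-z]+", text)
    intent = next(
        (name for name, keywords in INTENT_KEYWORDS.items() if keywords & set(words)),
        "unknown",
    )
    colors = [word for word in words if word in COLORS]
    date_match = re.search(DATE_PATTERN, text)
    return {
        "intent": intent,
        "product": next((word for word in words if word in PRODUCTS), None),
        # Assumes the first color mentioned was ordered and the second was received.
        "color_ordered": colors[0] if colors else None,
        "color_received": colors[1] if len(colors) > 1 else None,
        "order_date": date_match.group(0) if date_match else None,
    }

message = "I ordered a blue sweater last Tuesday but received a green one. I need to exchange it."
print(parse_message(message))
```

A dialogue manager would take this dictionary, resolve “last tuesday” to a calendar date, and look up the matching order.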

4.4 Chatbots in Business: Key Applications

Table 2: Business Chatbot Applications by Industry

| Industry | Application | Impact | Example |
|---|---|---|---|
| Retail | Customer service, product recommendations | 60-80% reduction in routine support tickets | Sephora’s Virtual Artist |
| Banking | Account inquiries, fraud alerts, loan applications | 24/7 availability, $7.3B in projected savings by 2025 | Bank of America’s Erica |
| Healthcare | Symptom checking, appointment scheduling | Reduced wait times, improved triage | Babylon Health |
| HR/Recruiting | Candidate screening, FAQ answering, onboarding | 75% reduction in time-to-screen | Mya Systems |
| Education | Tutoring, assignment help, administrative queries | Personalized learning at scale | Georgia State’s “Pounce” bot |

5 Multimodal AI: Beyond Text

5.1 The Multimodal Revolution

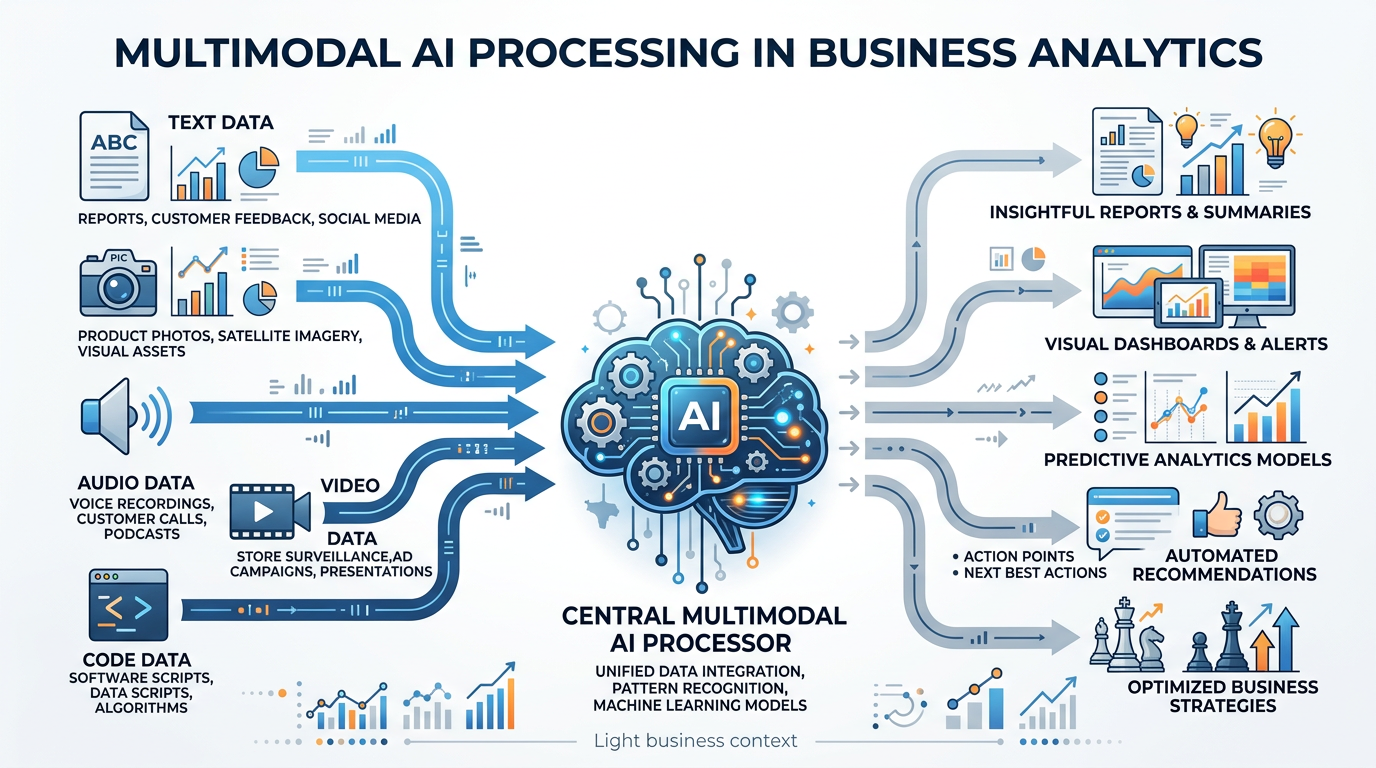

The release of multimodal AI systems — most notably Google’s Gemini — represents a fundamental shift in AI capabilities. For decades, AI systems were specialists: a text model could understand language but not images; a computer vision model could analyze photos but not read text; a speech model could transcribe audio but not understand visual context. Multimodal AI breaks down these barriers.

Figure 7: Multimodal AI systems like Google Gemini can process and reason across text, images, audio, video, and code simultaneously, enabling entirely new categories of business applications.

5.2 Google Gemini: A Multimodal Case Study

Google’s Gemini (released December 2023, with Gemini 2.0 announced in December 2024) is one of the most capable multimodal AI systems available. Understanding its capabilities provides insight into where the entire field is heading:

Text:

Summarize documents, write reports, answer questions

Support for 100+ languages

Code generation and debugging

Creative writing and brainstorming

Images:

Describe, analyze, and reason about images

Read text within images (OCR)

Compare multiple images

Identify objects, scenes, and patterns

Audio:

Transcribe speech in multiple languages

Understand tone and emotion in voice

Process music and environmental sounds

Real-time translation of spoken language

Video:

Analyze video content frame by frame

Answer questions about video content

Extract key moments and summaries

Understand spatial and temporal relationships

5.3 Business Applications of Multimodal AI

The ability to process multiple modalities simultaneously opens entirely new categories of business applications:

Application 1: Intelligent Document Processing

Traditional document processing required separate systems for text extraction (OCR), layout analysis, and content understanding. Multimodal AI handles all three simultaneously. A system like Gemini can look at a scanned invoice, read the text, understand the layout (recognizing which numbers are prices vs. dates), extract structured data, and flag anomalies — all in one pass.

Business Impact: Insurance companies use multimodal AI to process claims that include photographs of damage alongside written descriptions. The AI can cross-reference the visual damage with the written claim, flagging discrepancies that might indicate fraud.

Application 2: Visual Quality Control

Manufacturing companies are deploying multimodal AI for quality control. Rather than programming specific defect patterns, these systems can be shown examples of good and bad products and learn to identify anomalies. When a defect is detected, the system can generate a natural language report describing the issue, its probable cause, and recommended corrective action.

Business Impact: A semiconductor manufacturer reduced defect escape rates by 40% after deploying multimodal AI inspection, which could detect subtle defects that single-modality systems missed.

Application 3: Multimodal Customer Support

Customers can now send a photo of a broken product along with a text description of the problem. A multimodal AI assistant analyzes both the image and the text, identifies the product and the issue, and provides troubleshooting instructions or initiates a replacement — all without human intervention.

Business Impact: Companies like Samsung and Apple are implementing multimodal support systems that reduce average resolution time by 50% for issues that can be diagnosed visually.

6 NLP in Recruitment and Human Resources

6.1 The AI Recruitment Revolution

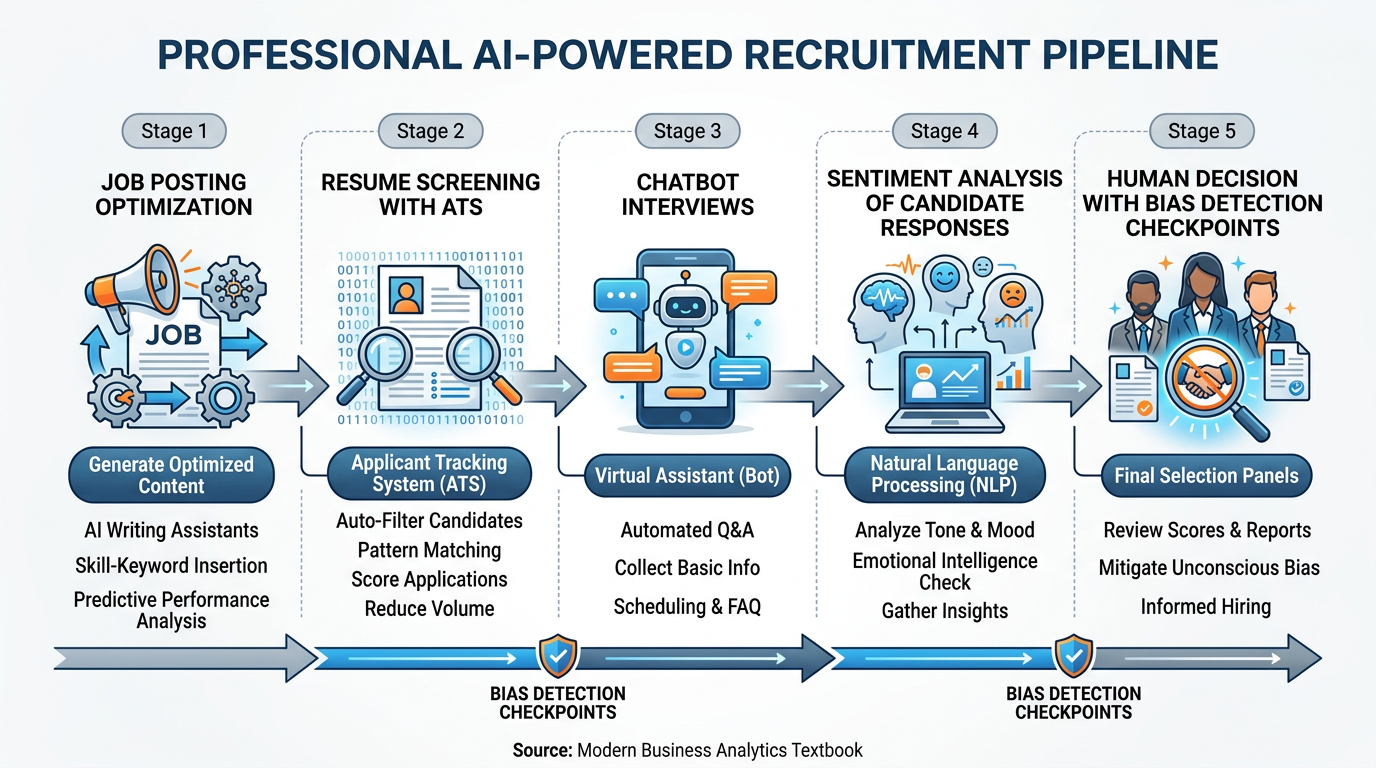

Figure 8: AI-powered recruitment pipelines use NLP at every stage, from optimizing job descriptions to screening resumes, conducting initial interviews via chatbot, and analyzing candidate responses for fit and competency.

NLP is transforming every stage of the recruitment process. For businesses that receive hundreds or thousands of applications for a single position, AI-powered recruitment tools are no longer a luxury — they are a necessity for efficient hiring. However, these tools also introduce significant ethical challenges that require careful oversight.

6.2 NLP Applications Across the Recruitment Pipeline

1. Job Description Optimization

NLP tools like Textio and Gender Decoder analyze job postings for language that may unintentionally discourage diverse applicants. Research has shown that certain words and phrases — “aggressive,” “dominant,” “rockstar” — disproportionately appeal to male candidates, while “collaborative,” “supportive,” “nurturing” appeal more to female candidates. NLP-optimized job descriptions have been shown to increase diverse applicant pools by 25-40%.

2. Resume Screening and Parsing

AI-powered Applicant Tracking Systems (ATS) use NLP to parse resumes — extracting names, education, skills, work experience, and certifications into structured data fields. These systems can process thousands of resumes in minutes, ranking candidates based on how well their qualifications match the job requirements.

3. Chatbot Interviews

Initial screening interviews are increasingly conducted by AI chatbots. These systems ask standardized questions, evaluate responses for relevance and completeness, assess communication skills, and identify candidates who should advance to human interviews.

4. Sentiment and Language Analysis

Some advanced recruitment platforms analyze the language patterns, word choice, and sentiment in candidate responses to assess personality traits, cultural fit, and communication skills. Video interview platforms like HireVue have used NLP combined with facial expression analysis — though this practice has faced significant criticism and regulatory scrutiny.

6.3 Ethical Concerns in AI Recruitment

6.4 Best Practices for Ethical AI Recruitment

Conduct regular bias audits of AI recruitment tools. Compare outcomes across demographic groups. If the system consistently advances fewer candidates from certain groups, investigate and correct the bias.

Never let AI make final hiring decisions autonomously. Use AI for efficiency in screening, but ensure human recruiters review AI recommendations and make the final call.

Inform candidates that AI is used in the screening process. Provide clear information about what the AI evaluates and how candidates can request human review.

Regularly test your AI systems with diverse test cases. Ensure the system performs equitably across genders, ethnicities, age groups, and educational backgrounds.
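The bias audit recommended above, comparing outcomes across demographic groups, can start with a simple selection-rate comparison. The sketch below applies the “four-fifths” rule of thumb used in US employment practice, which flags any group whose selection rate falls below 80% of the highest group’s rate. The records and group names are made up, and a real audit would add statistical testing and legal review.

```python
from collections import defaultdict

def selection_rates(records):
    """records: iterable of (group, advanced) pairs, where advanced is True or False."""
    totals, advanced = defaultdict(int), defaultdict(int)
    for group, was_advanced in records:
        totals[group] += 1
        advanced[group] += int(was_advanced)
    return {group: advanced[group] / totals[group] for group in totals}

def adverse_impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate.

    Assumes at least one group has a non-zero selection rate.
    """
    best = max(rates.values())
    return {group: rate / best for group, rate in rates.items()}

# Made-up screening outcomes for two hypothetical groups.
records = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
rates = selection_rates(records)
ratios = adverse_impact_ratios(rates)
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]

print(rates)    # {'group_a': 0.5, 'group_b': 0.3}
print(flagged)  # ['group_b']
```

A flagged group is a prompt for investigation by human reviewers, not a verdict that the system is biased.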

7 NLP in Customer Experience

7.1 Transforming Customer Interactions

Beyond recruitment, NLP is fundamentally reshaping how businesses interact with customers across every touchpoint. From the moment a customer searches for a product to post-purchase support, NLP technologies are working behind the scenes.

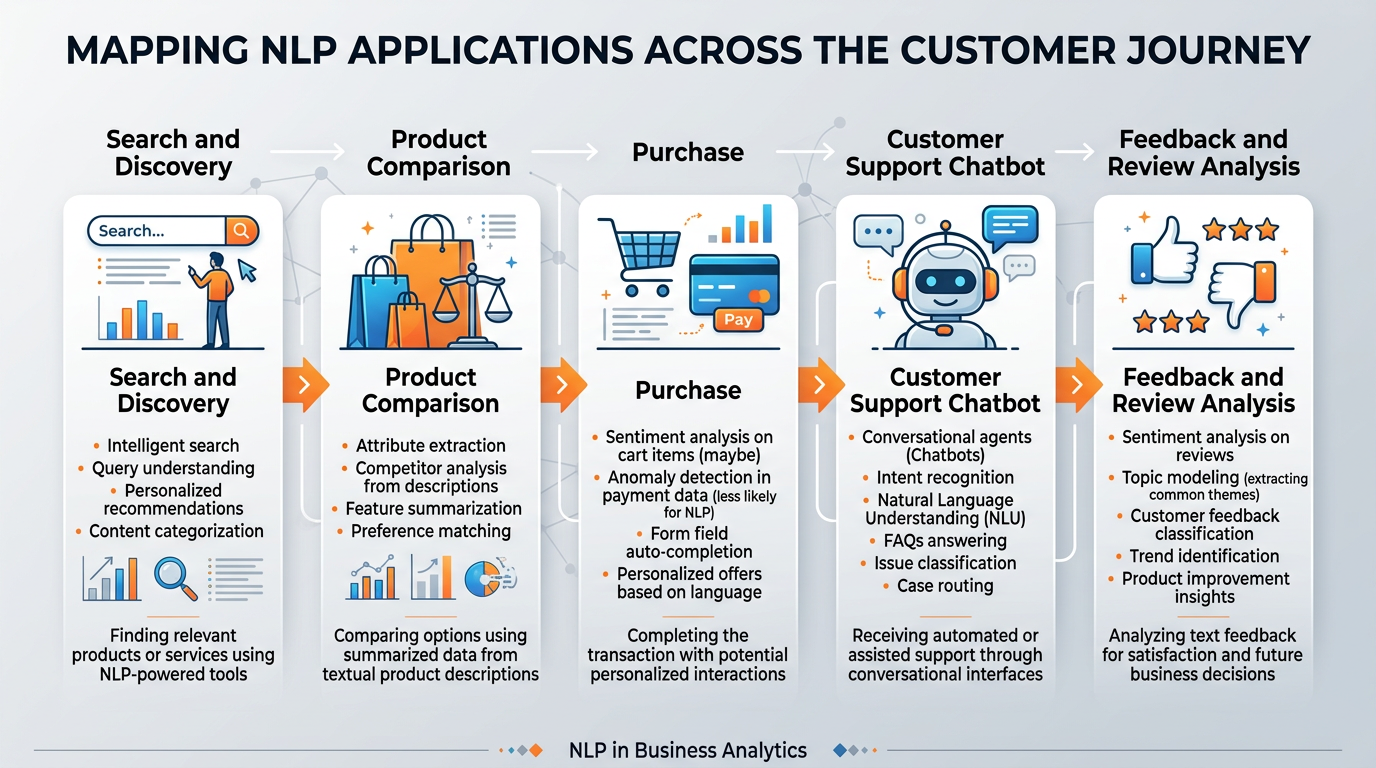

Figure 9: NLP touches every stage of the customer journey, from intelligent search and product recommendations to conversational support and feedback analysis.

7.2 Search and Discovery

Modern e-commerce search engines use NLP to understand customer intent rather than just matching keywords. When a customer searches for “comfortable shoes for standing all day,” an NLP-powered search engine understands the intent (shoes with cushioning and arch support for extended wear) and returns relevant results — even if the product descriptions do not contain the exact phrase “standing all day.”

Semantic search — search that understands meaning rather than just matching words — represents a major advance over traditional keyword search. Technologies like Google’s BERT (Bidirectional Encoder Representations from Transformers) power these capabilities, understanding that “affordable laptop for college” and “budget student computer” express essentially the same need.

7.3 Voice of the Customer (VoC) Analytics

Organizations implementing VoC analytics powered by NLP have reported significant benefits: 25-40% reduction in customer churn through early detection of dissatisfaction, 15-30% improvement in product development cycles by identifying feature requests and complaints faster, 50% reduction in time to identify emerging issues compared to manual review, and measurable improvements in Net Promoter Score (NPS) through data-driven service improvements.

7.4 Email and Ticket Classification

NLP automatically categorizes incoming customer emails and support tickets, routing them to the appropriate team, flagging urgent issues, and even suggesting responses. A major airline, for instance, might receive 100,000 customer emails per day during a disruption event. NLP systems can instantly categorize these by issue type (rebooking, refund, baggage, complaint), urgency level, and customer tier — ensuring that high-priority issues receive immediate attention.
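The routing logic can be sketched with keyword matching. The categories follow the airline example above, but the keyword lists, the urgency words, and the `triage` function are assumptions made for illustration; a deployed system would use a trained text classifier and would also look up the customer’s tier.

```python
import re

# Hypothetical keyword lists for an airline support inbox.
CATEGORY_KEYWORDS = {
    "rebooking": {"rebook", "reschedule", "missed", "connection"},
    "refund": {"refund", "reimburse", "money"},
    "baggage": {"bag", "baggage", "luggage", "suitcase"},
}
URGENT_WORDS = {"urgent", "stranded", "today", "tonight", "immediately"}

def triage(email_text):
    """Assign a category and an urgency level based on keyword hits."""
    words = set(re.findall(r"[a-z]+", email_text.lower()))
    # Pick the category with the most keyword hits; fall back to a general queue.
    hits = {category: len(keywords & words) for category, keywords in CATEGORY_KEYWORDS.items()}
    category = max(hits, key=hits.get) if any(hits.values()) else "other"
    urgency = "high" if URGENT_WORDS & words else "normal"
    return {"category": category, "urgency": urgency}

print(triage("I am stranded in Denver and need to rebook my missed connection today"))
# {'category': 'rebooking', 'urgency': 'high'}
print(triage("Please refund the fee for my cancelled flight"))
# {'category': 'refund', 'urgency': 'normal'}
```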

7.5 Real-Time Translation

For global businesses, NLP-powered translation enables real-time customer support across languages. A customer in Tokyo can write in Japanese and receive responses in Japanese, while the support agent in Dublin sees the conversation in English. Modern neural machine translation (NMT) systems, like Google Translate’s transformer-based engine, achieve near-human quality for many language pairs.

8 The Business Value of NLP: A Strategic Framework

8.1 Measuring NLP ROI

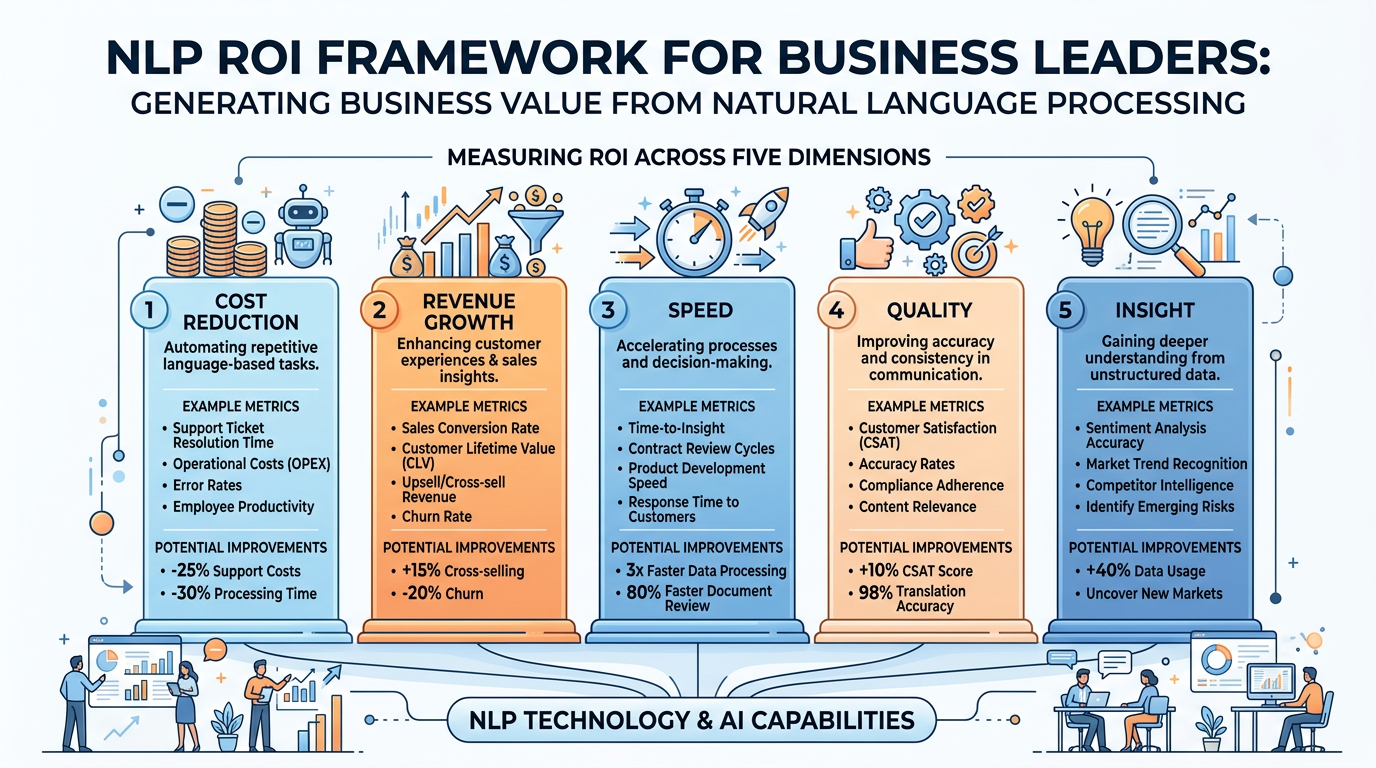

For business leaders considering NLP investments, a structured approach to measuring return on investment is essential:

Figure 10: A strategic framework for measuring the return on investment of NLP implementations across five key business dimensions.

Table 3: NLP ROI Framework

| ROI Dimension | Metrics | Typical Impact |
|---|---|---|
| Cost Reduction | Support tickets resolved without human intervention, processing time per document | 30-60% reduction in routine support costs |
| Revenue Growth | Conversion rate improvement from better search, upsell from recommendations | 10-25% improvement in conversion rates |
| Speed | Time to process applications, time to resolve customer issues | 50-80% reduction in processing time |
| Quality | Error rates in document processing, consistency of customer responses | 40-70% reduction in errors |
| Insight | Number of actionable insights from unstructured data, trend detection speed | From weeks to hours for trend identification |

8.2 Building vs. Buying NLP Solutions

When to build:

Your use case is highly specialized (industry-specific terminology, proprietary data)

You need complete control over the model and data

You have in-house ML engineering talent

Data privacy requirements prevent using third-party APIs

Considerations:

High upfront investment (5M+ for enterprise-grade systems)

Requires ongoing maintenance and model retraining

6-18 month development timeline typical

Need for specialized talent (NLP engineers, data scientists)

When to buy:

Your use case is common (customer service, sentiment analysis, translation)

You need quick deployment (weeks, not months)

You want predictable costs (subscription or per-API-call pricing)

You lack in-house ML expertise

Popular platforms:

Google Cloud Natural Language AI

AWS Comprehend

Microsoft Azure Text Analytics

IBM Watson Natural Language Understanding

OpenAI API

Anthropic Claude API

Considerations:

Monthly costs scale with usage ($50K+/month typical for enterprise)

Less customization than custom-built solutions

Data sent to third-party servers (privacy implications)

Vendor lock-in risk
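One way to weigh these trade-offs is a simple break-even comparison of cumulative costs. The sketch below is illustrative only: it uses the order-of-magnitude figures mentioned above (5M upfront to build, 50K per month to buy), while the monthly upkeep for a custom system is a hypothetical number introduced for the example. It ignores discounting, usage growth, and price changes.

```python
from typing import Optional


def cumulative_build_cost(months: int, upfront: int, monthly_upkeep: int) -> int:
    """Total spent on a custom system after the given number of months."""
    return upfront + monthly_upkeep * months


def cumulative_buy_cost(months: int, monthly_fee: int) -> int:
    """Total spent on a subscription or API after the given number of months."""
    return monthly_fee * months


def break_even_month(upfront: int, monthly_upkeep: int, monthly_fee: int,
                     horizon_months: int = 240) -> Optional[int]:
    """First month in which building has cost no more than buying.

    Returns None if building never catches up within the horizon.
    """
    for month in range(1, horizon_months + 1):
        build = cumulative_build_cost(month, upfront, monthly_upkeep)
        buy = cumulative_buy_cost(month, monthly_fee)
        if build <= buy:
            return month
    return None


# 5M upfront and 50K/month come from the text; 10K/month upkeep is assumed.
month = break_even_month(upfront=5_000_000, monthly_upkeep=10_000,
                         monthly_fee=50_000)
print(f"Building breaks even after {month} months")
```

Under these assumptions the custom system only pays for itself after roughly a decade, which is one reason buying tends to suit common use cases. The picture changes if API usage, and therefore the monthly fee, grows substantially over time.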

9 Faith Integration: The Ethics of Language Technology

9.1 Stewardship of the Gift of Language

As Christians, we understand that language is a gift from God — one of the defining characteristics of beings created in His image. The ability to communicate complex thoughts, express emotions, share stories, and worship through words is a profound expression of what it means to be human.

When we build systems that process and generate language, we are, in a sense, working with one of the most sacred aspects of human experience. This calls for particular thoughtfulness and responsibility.

9.2 The Future of Human Communication in an AI World

The rapid advancement of NLP raises profound questions: If AI can write emails, reports, and even sermons, what becomes of human authorship? If chatbots can carry on conversations indistinguishable from human dialogue, what does that mean for authentic human connection? If sentiment analysis can detect emotions more accurately than human intuition, what role does empathy play?

These questions do not have simple answers, but as students at a Christian university, you are uniquely positioned to engage with them thoughtfully. The world needs business leaders who can harness NLP’s power while preserving the irreplaceable value of genuine human communication — leaders who understand that technology should serve relationship, not replace it.

10 Module 3 Discussion: NLP in Customer Experience

11 Module 3 Written Analysis: NLP Competitive Analysis

12 Module 3 Reflection: The Future of Human Communication

13 Module 3 Hands-On Activity 1: Voice-Powered AI Research with Gemini

14 Module 3 Hands-On Activity 2: Building an Interview Prep Assistant with NotebookLM

15 Chapter Summary

In this chapter, we explored the rich and rapidly evolving field of Natural Language Processing — the technology that enables machines to understand, interpret, and generate human language. We began with the fundamentals of text preprocessing, learning how raw text is cleaned, tokenized, normalized, and vectorized before any NLP model can process it. We examined sentiment analysis and its powerful applications in business intelligence, from brand monitoring to customer feedback analysis.

We explored the evolution of chatbots from simple rule-based systems to sophisticated LLM-powered assistants, understanding the architecture that powers modern conversational AI. We ventured into the frontier of multimodal AI, examining how systems like Google Gemini combine language understanding with visual, audio, and video processing to enable entirely new categories of business applications.

We critically examined NLP’s role in recruitment and human resources, celebrating its efficiency gains while confronting the serious ethical challenges of algorithmic bias and fairness. We mapped NLP applications across the customer journey, from intelligent search to real-time translation.

Throughout, we maintained our commitment to viewing these technologies through the lens of Christian stewardship. Language is one of God’s most precious gifts to humanity — the medium through which we express love, share truth, build community, and worship our Creator. As we develop and deploy technologies that process and generate language, we carry a special responsibility to ensure these tools serve human flourishing, uphold truth, and honor the dignity of every person.

16 Glossary

Natural Language Processing (NLP): A subfield of AI focused on enabling computers to understand, interpret, generate, and respond to human language in meaningful ways.

Natural Language Understanding (NLU): The branch of NLP that focuses on machine comprehension, that is, extracting meaning, intent, and structure from text or speech.

Natural Language Generation (NLG): The branch of NLP that focuses on producing human language, that is, generating coherent, contextually appropriate text or speech.

Tokenization: The process of breaking text into individual units called tokens, typically words, subwords, or characters.

Stemming: An aggressive text normalization technique that reduces words to their root form by stripping suffixes (e.g., “running” → “run”).

Lemmatization: A more accurate text normalization technique that reduces words to their dictionary form using linguistic knowledge (e.g., “better” → “good”).

Stop Words: Extremely common words (the, is, at, which) that are often removed during preprocessing because they carry little analytical meaning.

Vectorization: The process of converting text tokens into numerical representations (vectors) that machine learning algorithms can process.

Word Embeddings: Dense vector representations of words in a high-dimensional space where semantic relationships between words are preserved.

TF-IDF: Term Frequency–Inverse Document Frequency, a statistical measure that evaluates how important a word is to a document in a collection by balancing frequency with distinctiveness.

Sentiment Analysis: The use of NLP to identify and quantify subjective information in text, determining whether expressed opinions are positive, negative, or neutral.

Chatbot: A software application that uses NLP to conduct conversations with human users through text or voice interfaces.

Multimodal AI: AI systems capable of processing, understanding, and generating content across multiple data types (text, images, audio, video) within a single model.

Voice of the Customer (VoC): A research methodology that captures customer expectations and feedback, often automated through NLP analysis of reviews, surveys, and social media.

Applicant Tracking System (ATS): Software used by HR departments that employs NLP to parse resumes, screen candidates, and manage the recruitment pipeline.

Semantic Search: Search technology that understands the meaning and intent behind queries rather than simply matching keywords.

Byte Pair Encoding (BPE): A subword tokenization algorithm used by modern LLMs that iteratively merges the most frequent pairs of characters or character sequences.

Named Entity Recognition (NER): An NLP task that identifies and classifies named entities in text into categories such as person names, organizations, locations, and dates.

Intent Recognition: The NLP task of determining what a user wants to accomplish from their natural language input, commonly used in chatbot and virtual assistant systems.
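Several of the glossary terms (tokenization, stop words, vectorization, and TF-IDF) can be seen working together in a short sketch. The version below is a minimal, unsmoothed TF-IDF written in plain Python for illustration. The stop-word list, the sample reviews, and the function names are all hypothetical, and library implementations often use smoothed or normalized variants of the same idea.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list; real lists contain hundreds of words.
STOP_WORDS = {"the", "is", "at", "which", "a", "and", "was", "to"}


def tokenize(text: str) -> list[str]:
    """Lowercase the text, split it into word tokens, and drop stop words."""
    words = re.findall(r"[a-z']+", text.lower())
    return [w for w in words if w not in STOP_WORDS]


def tf_idf(documents: list[str]) -> list[dict[str, float]]:
    """Return one {term: score} dictionary per document.

    score = (term count / tokens in document) * ln(N / documents with term)
    """
    tokenized = [tokenize(doc) for doc in documents]
    n_docs = len(tokenized)
    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens)
        scores.append({
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return scores


reviews = [
    "The delivery was fast and the support was helpful",
    "The delivery was late",
    "Support is helpful",
]

for review, weights in zip(reviews, tf_idf(reviews)):
    top_term = max(weights, key=weights.get)
    print(f"{review!r}: most distinctive term = {top_term!r}")
```

In the second review, “late” scores higher than “delivery” because “delivery” also appears in another review, which is the balance of frequency and distinctiveness described in the TF-IDF entry.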

In the next chapter, we move from how machines understand language to the powerful engines that drive modern AI capabilities. Chapter 4: Machine Learning & Large Language Models explores the world of big data, data centers, and the large language models and LLM-powered tools, including ChatGPT, Claude, Gemini, Perplexity, and Jasper, that are reshaping business across every industry.