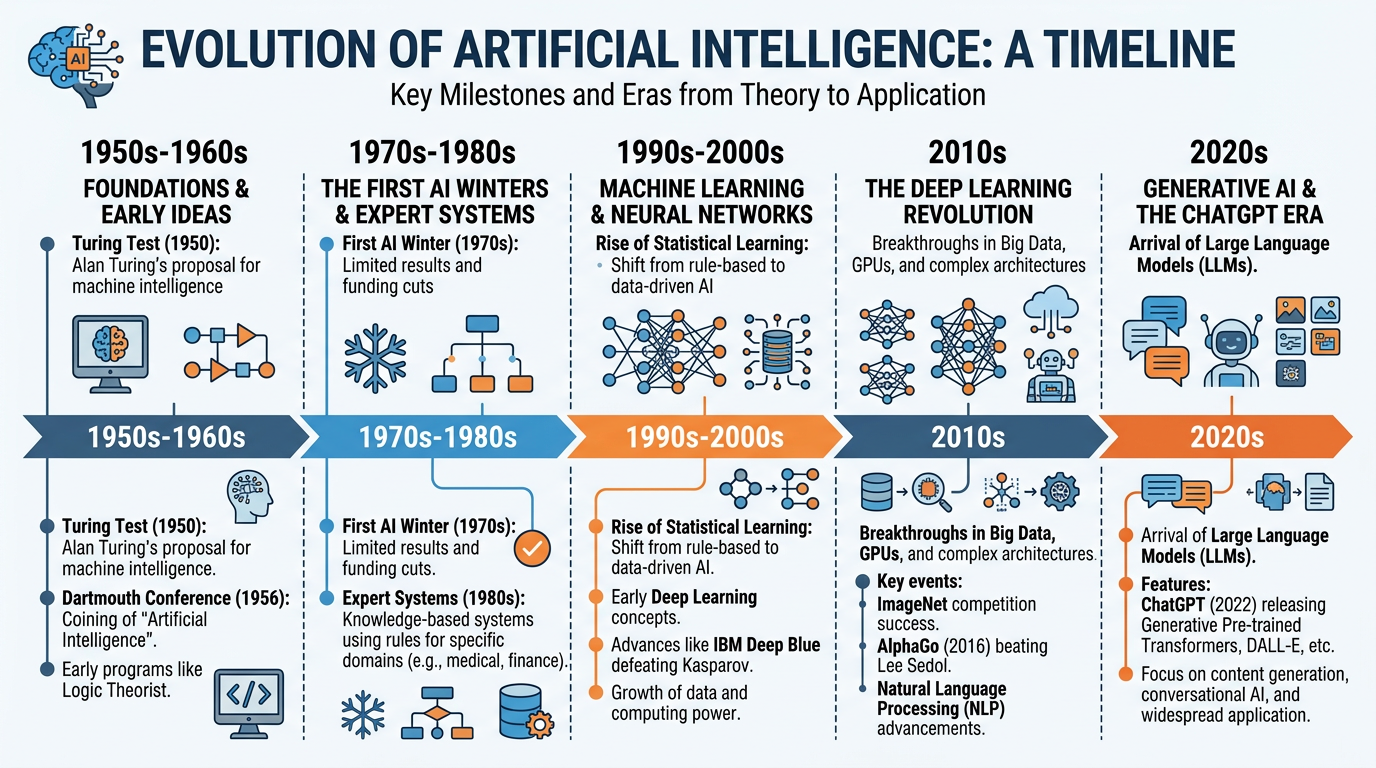

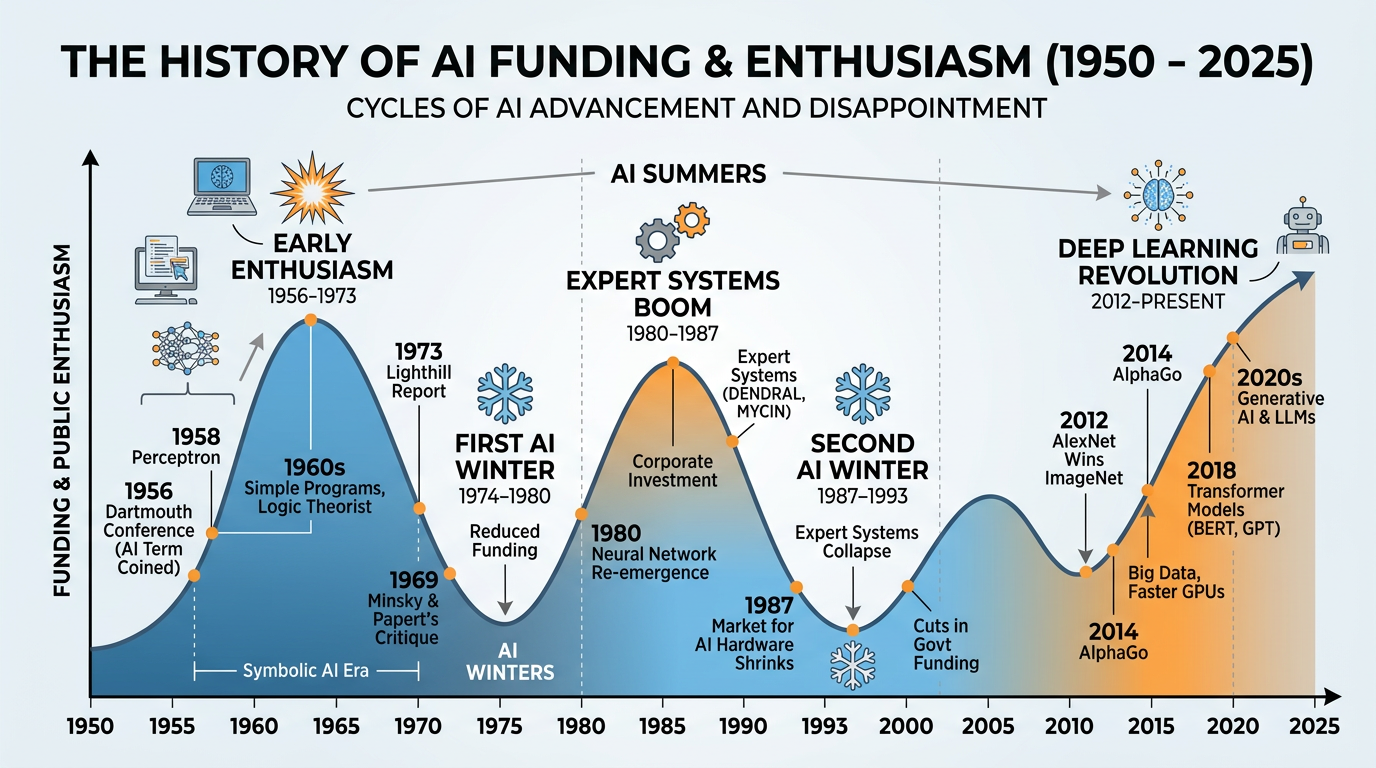

Figure 1: A visual timeline of AI’s evolution, from the birth of the field in the 1950s through AI winters and summers to the deep learning revolution transforming business today.

“For everything there is a season, and a time for every matter under heaven.”

Ecclesiastes 3:1 (ESV)

To understand where artificial intelligence is going, you must first understand where it has been. The story of AI is not a smooth, linear progression from primitive systems to powerful deep learning models. It is a dramatic narrative of soaring ambitions and crushing disappointments, of brilliant breakthroughs and decade-long stagnations, of promises made and broken — and ultimately, of promises kept beyond anyone’s wildest expectations.

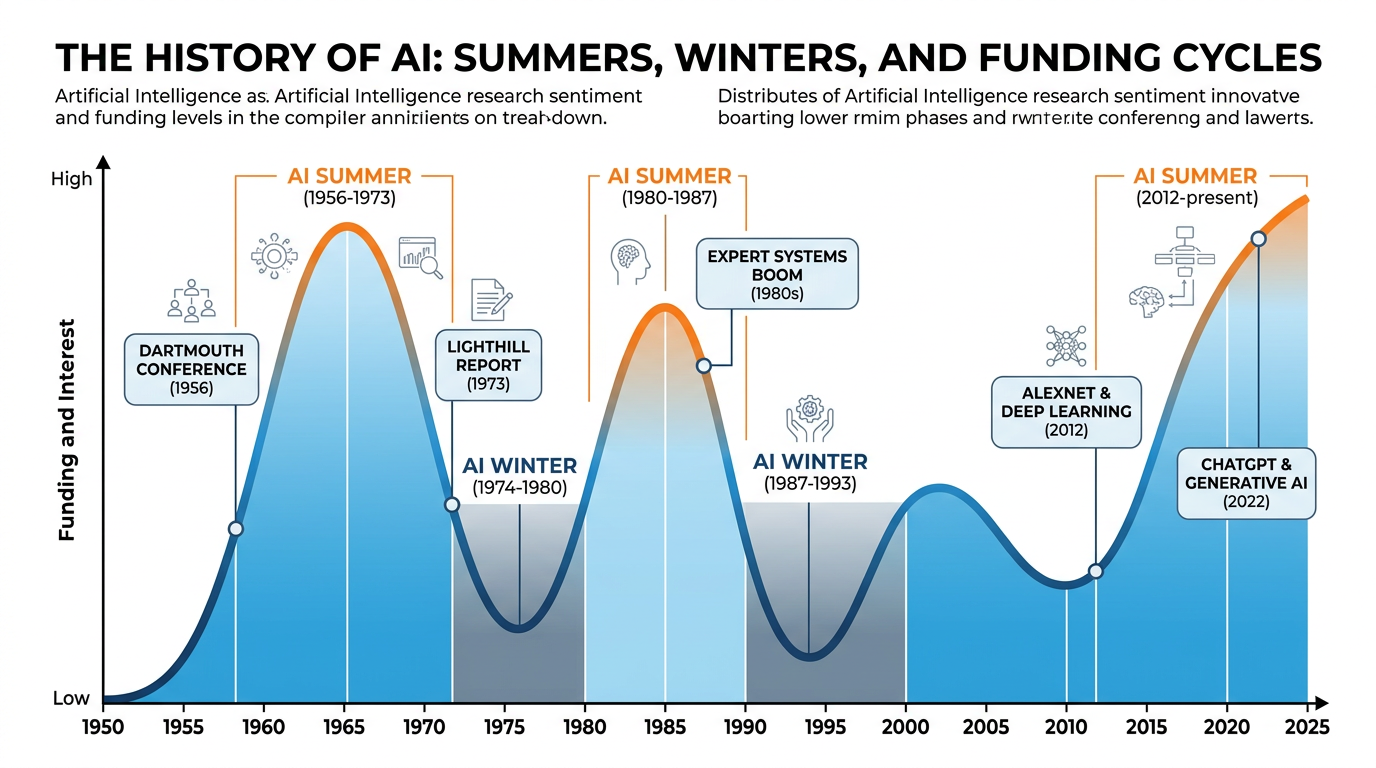

This history matters for business professionals because it provides essential context for evaluating today’s AI claims. We are living through what many call the “AI summer” — a period of extraordinary investment, rapid advancement, and sweeping promises about AI’s transformative potential. Understanding previous cycles of hype and disappointment helps you distinguish genuine business opportunities from overhyped technologies, make better investment decisions about AI adoption, communicate more credibly with technical teams and stakeholders, and appreciate why certain AI capabilities that seem simple are actually monumental achievements.

As we trace this story, we will see recurring themes: the gap between what AI researchers promise and what they deliver, the critical role of data and computing power in enabling breakthroughs, the importance of patience and persistence in scientific progress, and the ways in which God’s creation — particularly the human brain — has inspired humanity’s most ambitious technological endeavors.

1 The Birth of Artificial Intelligence (1940s–1956)

1.1 Alan Turing and the Foundation of Computing

The story of AI begins with one of the most brilliant minds of the twentieth century: Alan Turing. A British mathematician and logician, Turing made foundational contributions to computer science, mathematics, and — through his code-breaking work at Bletchley Park during World War II — to the Allied victory itself.

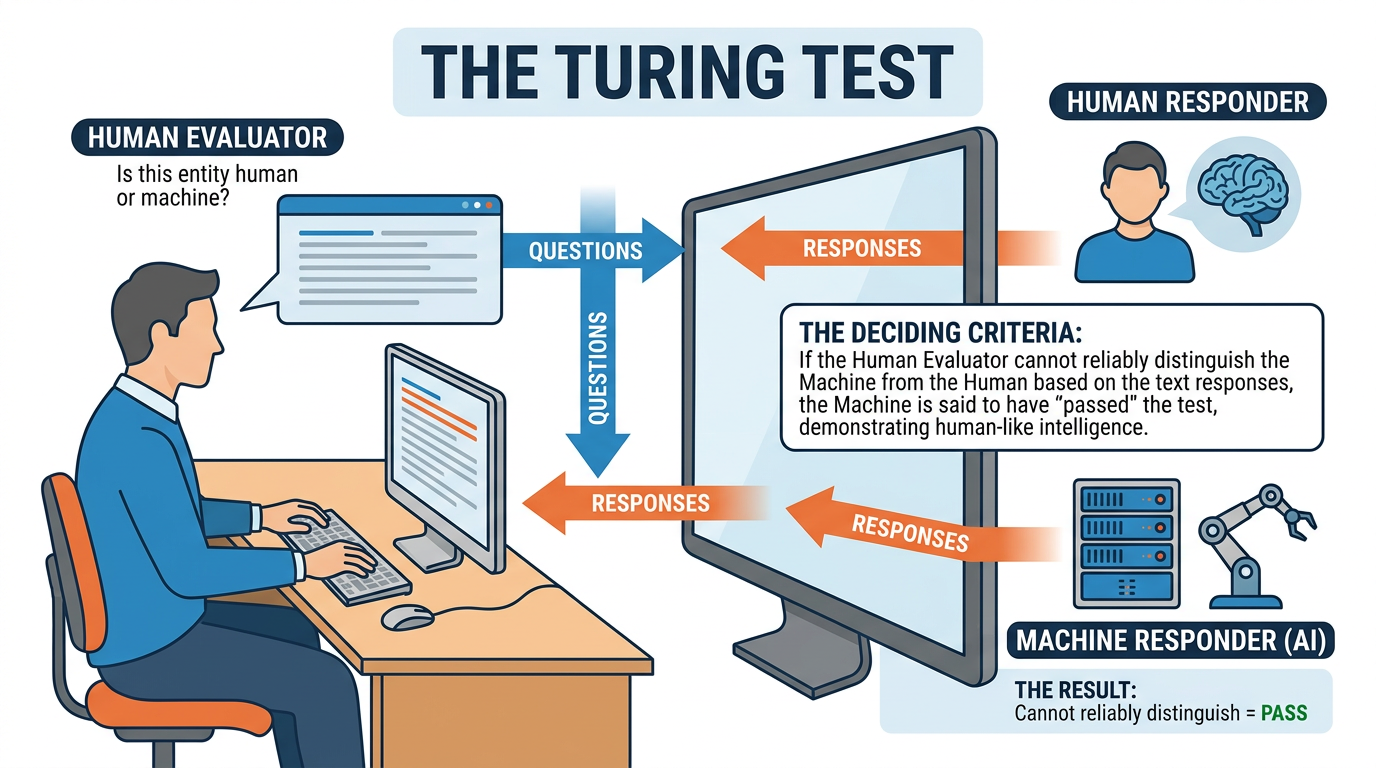

Figure 2: The Turing Test. A human evaluator converses with both a human and a machine through text. If the evaluator cannot reliably tell which is the machine, the machine is said to have passed the test.

In 1950, Turing published his landmark paper “Computing Machinery and Intelligence” in the journal Mind. Rather than attempting to define “intelligence” directly, a philosophical quagmire, Turing proposed a practical test that he called the imitation game. He set aside the question “Can machines think?” and asked instead whether a machine could hold a text conversation that an interrogator could not tell apart from a human’s. This pragmatic approach transformed an abstract philosophical debate into a concrete, testable proposition.

Turing’s paper also anticipated many of the arguments that would be raised against AI over the following decades. He addressed objections ranging from “machines can’t be conscious” to “machines can never surprise us” to theological objections about the uniqueness of the human soul. His responses remain remarkably relevant today.

1.2 Other Pioneers of Early AI

While Turing laid the theoretical foundation, other researchers were building the first AI systems:

Table 1: Early AI Pioneers and Contributions

| Pioneer | Contribution | Year | Significance |
|---|---|---|---|
| Warren McCulloch & Walter Pitts | Mathematical model of artificial neurons | 1943 | First formal model of a neural network |
| Claude Shannon | Information theory; paper on programming a computer to play chess | 1948-1950 | Theoretical foundation for digital computing and AI |
| Alan Turing | “Computing Machinery and Intelligence” paper | 1950 | Proposed the Turing Test; framed AI as a field |
| Arthur Samuel | Checkers-playing program that learned from experience | 1952 | Later coined the term “machine learning” (1959) |
| John McCarthy | Organized the Dartmouth Conference; coined “Artificial Intelligence” | 1956 | Formally launched AI as a field of study |
| Allen Newell & Herbert Simon | Logic Theorist, often called the first AI program | 1956 | Proved mathematical theorems automatically |
| Frank Rosenblatt | The Perceptron, the first trainable neural network | 1958 | Foundation for modern deep learning |

1.3 The Dartmouth Conference (1956): AI Gets Its Name

In the summer of 1956, John McCarthy, a young mathematics professor at Dartmouth College, organized a workshop that would define a new field. The proposal stated:

“We propose that a 2 month, 10 man study of artificial intelligence be carried out during the summer of 1956 at Dartmouth College in Hanover, New Hampshire. The study is to proceed on the basis of the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

The Dartmouth Conference brought together the leading minds in computing and cognitive science, including Marvin Minsky, Nathaniel Rochester, and Claude Shannon. While the workshop did not achieve the ambitious goal of creating intelligent machines in two months, it accomplished something equally important: it established AI as a recognized academic discipline and gave it a name.

2 The Early Enthusiasm (1956–1974): The First AI Summer

2.1 Symbolic AI and Expert Systems

The first generation of AI researchers pursued what is now called symbolic AI (also known as “Good Old-Fashioned AI” or GOFAI). This approach attempted to replicate intelligence by encoding human knowledge as symbols and rules that computers could manipulate.

Early symbolic AI programs achieved impressive results within narrow domains:

Logic Theorist (1956): Created by Newell and Simon, this program proved 38 of the 52 theorems in Chapter 2 of Principia Mathematica — in some cases finding more elegant proofs than the human authors.

ELIZA (1966): Created by Joseph Weizenbaum at MIT, ELIZA simulated a Rogerian psychotherapist by using pattern matching to rephrase users’ statements as questions. While Weizenbaum intended it as a demonstration of the superficiality of human-machine communication, many users became emotionally attached to the program — foreshadowing today’s debates about AI chatbot relationships.

SHRDLU (1970): Terry Winograd’s program could understand and manipulate objects in a simple “blocks world” by parsing natural language commands. It demonstrated impressive language understanding — but only within its tiny domain.
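ELIZA’s pattern-matching trick can be illustrated in a few lines. The sketch below is a minimal illustration, not Weizenbaum’s original script: the three rules, the `respond` function, and the fallback reply are all hypothetical, chosen only to show how a statement can be matched against a template and handed back as a question.

```python
import re

# Hypothetical rules in the spirit of ELIZA (not Weizenbaum's original script).
# Each rule pairs a pattern with a template that turns the match into a question.
RULES = [
    (re.compile(r"\bI feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]


def respond(statement):
    """Return a canned question built from the first rule that matches."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(match.group(1))
    # No rule matched: fall back to a neutral prompt.
    return "Please go on."


print(respond("I feel anxious about work."))
print(respond("Hello"))
```

Nothing here models meaning. The program only recognizes surface patterns, which is the superficiality Weizenbaum set out to demonstrate.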

2.2 The Overconfidence Problem

Early AI researchers were extraordinarily optimistic — and their predictions proved extraordinarily wrong:

Table 2: Bold Predictions vs. Reality

| Researcher | Prediction | Year | What Actually Happened |
|---|---|---|---|
| Herbert Simon | “Within 20 years, machines will be capable of doing any work a man can do” | 1965 | AGI remains unachieved 60 years later |
| Marvin Minsky | “Within a generation, the problem of creating AI will be substantially solved” | 1967 | The first AI winter began within a decade |
| Herbert Simon | “In 10 years, a computer will be chess champion of the world” | 1957 | It took 40 years (Deep Blue, 1997) |
| MIT AI Lab | Computer vision: “a summer project” for undergraduates | 1966 | Computer vision remained unsolved for 50+ years |

3 The First AI Winter (1974–1980)

3.1 Why AI Failed to Deliver

By the early 1970s, the gap between AI’s promises and its results had become impossible to ignore. Several fundamental problems emerged:

Combinatorial explosion. Many AI problems involve searching through enormous spaces of possibilities. As problems grow larger, the number of possible solutions explodes, often exponentially or faster. Early AI programs that worked on simple problems became hopelessly slow on realistic ones.

Example: A program that could plan a route through 10 cities (3.6 million possibilities) could not handle 20 cities (2.4 quintillion possibilities) with the same approach.
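The figures in this example match a simple count: if every ordering of the cities is treated as a separate candidate route, then n cities give n! (n factorial) possibilities. The short sketch below reproduces that arithmetic. The function name `route_orderings` is our own, and counting every ordering is an assumption about how the example was derived.

```python
import math


def route_orderings(cities):
    """Number of ways to order a visit to the given number of cities (n!)."""
    return math.factorial(cities)


# Doubling the number of cities multiplies the search space enormously.
for n in (5, 10, 15, 20):
    print(f"{n} cities: {route_orderings(n):,} orderings")
```

Going from 10 to 20 cities multiplies the number of candidate routes by a factor of several hundred billion, which is why faster hardware alone could not rescue brute-force search.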

Commonsense knowledge. Symbolic AI required humans to manually encode knowledge as rules. This worked for narrow, well-defined domains but was impractical for the vast, messy, common-sense knowledge that humans use effortlessly.

Example: Teaching a computer that “water flows downhill” is easy. Teaching it all the things a five-year-old knows about how the physical world works requires millions of such rules.

The frame problem. How does an AI know what changes and what stays the same when an action is taken? Humans handle this effortlessly (if you move a cup of coffee, you know the table stays put). For AI systems, specifying what doesn’t change was as hard as specifying what does.

Limited computing power. The computers of the 1970s lacked the processing power and memory to handle the kinds of problems AI researchers wanted to solve. Moore’s Law would eventually close the gap, but “eventually” was decades away.

3.2 The Lighthill Report (1973)

The death blow to the first AI era came from James Lighthill, a British mathematician commissioned by the UK’s Science Research Council to evaluate AI research. His report was devastating:

“In no part of the field have the discoveries made so far produced the major impact that was then promised.”

Lighthill argued that AI’s impressive demonstrations on “toy problems” would not scale to real-world complexity. The report led to the near-total elimination of AI funding in the United Kingdom and severely damaged funding worldwide.

Figure 3: The boom-and-bust cycles of AI development. Periods of high investment and optimism (summers) alternate with periods of reduced funding and disillusionment (winters).

4 The Expert Systems Boom and Bust (1980–1993)

4.1 The Second AI Summer: Expert Systems

AI experienced a dramatic revival in the 1980s with expert systems — programs that captured the knowledge of human domain experts in rule-based systems.

The most famous expert system was MYCIN, developed at Stanford in the 1970s. In evaluations, MYCIN diagnosed bacterial infections and recommended antibiotics with a reported accuracy of 69%, outperforming many non-specialist physicians, although it was never put into routine clinical use. Other notable expert systems included:

XCON (R1): Configured computer systems for Digital Equipment Corporation, saving the company an estimated $40 million per year

DENDRAL: Identified chemical compounds from mass spectrometry data

PROSPECTOR: Assisted in geological exploration and mineral discovery
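The core mechanism of a rule-based expert system can be sketched briefly. The example below is a minimal forward-chaining loop under stated assumptions: the two configuration rules and the `forward_chain` function are hypothetical illustrations and are not taken from XCON, MYCIN, or any real system.

```python
# Hypothetical "if these facts hold, conclude this" rules for illustration only.
RULES = [
    ({"needs_graphics"}, "add_gpu"),
    ({"add_gpu"}, "upgrade_power_supply"),
]


def forward_chain(facts, rules):
    """Keep firing rules whose conditions are all known until nothing new is learned."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


print(forward_chain({"needs_graphics"}, RULES))
# A request the rules never anticipated produces no conclusions at all.
print(forward_chain({"needs_quiet_operation"}, RULES))
```

The second call hints at a weakness of this design: the system can only conclude what its authors wrote rules for, so an unanticipated input yields nothing useful.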

4.2 The Expert Systems Industry

Expert systems spawned a billion-dollar industry almost overnight. Companies like IntelliCorp, Teknowledge, and Carnegie Group sold expert system development tools and consulting services. Japan launched its ambitious Fifth Generation Computer project in 1982, investing $850 million to build AI-powered computers. The British government, stung by the Lighthill report’s consequences, launched the Alvey Programme to revive AI research.

Table 3: The Expert Systems Market

| Year | Global Expert Systems Market | Key Development |
|---|---|---|
| 1980 | $10 million | R1/XCON deployed at DEC |
| 1983 | $100 million | Japan launches Fifth Generation project |
| 1985 | $1 billion | Peak of the expert systems boom |
| 1987 | $2 billion | Market begins to slow |
| 1990 | Declining | Japan quietly scales back Fifth Generation |
| 1993 | Collapsed | Most expert system companies bankrupt |

4.3 The Second AI Winter (1987–1993)

Expert systems ultimately failed to deliver on their promise for several reasons:

Brittleness. Expert systems worked well within their narrow domains but failed catastrophically when they encountered situations even slightly outside their encoded rules. Unlike human experts, they could not apply common sense or adapt to novel circumstances.

Maintenance nightmare. As rules accumulated (some systems had 10,000+ rules), updating and maintaining them became impossibly complex. Rules could interact in unexpected ways, and debugging was extremely difficult.

Knowledge acquisition bottleneck. Extracting knowledge from human experts and encoding it as rules was slow, expensive, and imperfect. Experts often couldn’t articulate their tacit knowledge — the intuitive understanding they relied on but couldn’t express in words.

Hardware dependency. Many expert systems ran on expensive, specialized Lisp machines that were rendered obsolete by rapidly improving conventional computers.

By 1993, most expert system companies had gone bankrupt, Japan’s Fifth Generation project had quietly fizzled, and “artificial intelligence” had once again become a term that researchers avoided in funding proposals.

5 The Neural Network Renaissance (1980s–2000s)

5.1 The Return of Neural Networks

While expert systems dominated the headlines, a quieter revolution was underway. Researchers were revisiting an approach that had been largely abandoned since the 1960s: neural networks.

The Perceptron and Its Limits

The story of neural networks begins with Frank Rosenblatt’s Perceptron (1958), a simple neural network that could learn to classify inputs into categories. The perceptron could learn to distinguish between different shapes or classify simple patterns — and it learned from examples, rather than being explicitly programmed.

The perceptron generated enormous excitement. The New York Times headline read: “New Navy Device Learns By Doing” and described it as “the embryo of an electronic computer that [the Navy] expects will be able to walk, talk, see, write, reproduce itself, and be conscious of its existence.”

But in 1969, Marvin Minsky and Seymour Papert published Perceptrons, a mathematical analysis demonstrating that single-layer perceptrons could not solve certain simple problems — most notably the XOR (exclusive or) function. While the limitation applied only to single-layer networks, the book was widely interpreted as a death sentence for neural networks. Funding dried up, and researchers moved to other approaches.
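The XOR limitation is easy to reproduce. The sketch below trains a single-layer perceptron with the classic perceptron learning rule on two tiny datasets: AND, which a single straight line can separate, and XOR, which it cannot. The function names, the 50-epoch budget, and the learning rate are our own illustrative choices.

```python
def train_perceptron(samples, epochs=50, lr=1.0):
    """Train a two-input perceptron with the perceptron learning rule."""
    w1 = w2 = b = 0.0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            prediction = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
            error = target - prediction
            # Nudge the weights only when the prediction was wrong.
            w1 += lr * error * x1
            w2 += lr * error * x2
            b += lr * error
    return w1, w2, b


def accuracy(samples, weights):
    """Fraction of samples the trained perceptron classifies correctly."""
    w1, w2, b = weights
    correct = sum(
        (1 if w1 * x1 + w2 * x2 + b > 0 else 0) == target
        for (x1, x2), target in samples
    )
    return correct / len(samples)


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

and_accuracy = accuracy(AND, train_perceptron(AND))
xor_accuracy = accuracy(XOR, train_perceptron(XOR))
print(f"AND accuracy: {and_accuracy:.2f}, XOR accuracy: {xor_accuracy:.2f}")
```

However long it trains, the single-layer model cannot classify all four XOR cases correctly, because no single straight line separates them.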

The Backpropagation Breakthrough (1986)

The revival came in 1986 when David Rumelhart, Geoffrey Hinton, and Ronald Williams published their work on backpropagation, an algorithm for training multi-layer neural networks. Backpropagation addressed the limitation Minsky and Papert had identified: by adding hidden layers between input and output, and using backpropagation to adjust the connection weights, neural networks could learn to solve complex, non-linear problems.
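To make the idea concrete, here is a minimal sketch of backpropagation on the XOR problem, using a network with one hidden layer of two neurons. It is an illustration under stated assumptions rather than the 1986 paper’s setup: the starting weights are hypothetical values picked by hand so the run is repeatable, and the learning rate and step count are arbitrary.

```python
import math

XOR = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]

# Hypothetical starting weights, chosen by hand so the run is repeatable.
params = {
    "w_hidden": [[0.5, -0.4], [0.3, 0.8]],  # one row of input weights per hidden neuron
    "b_hidden": [0.1, -0.2],
    "w_out": [0.7, -0.6],
    "b_out": 0.05,
}


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(p, x):
    """Forward pass: inputs -> hidden layer -> single output."""
    hidden = [sigmoid(w[0] * x[0] + w[1] * x[1] + b)
              for w, b in zip(p["w_hidden"], p["b_hidden"])]
    out = sigmoid(sum(w * h for w, h in zip(p["w_out"], hidden)) + p["b_out"])
    return hidden, out


def mean_squared_error(p):
    return sum((forward(p, x)[1] - t) ** 2 for x, t in XOR) / len(XOR)


def train_step(p, lr=0.5):
    """One full-batch gradient descent step, with gradients from backpropagation."""
    g_wh, g_bh = [[0.0, 0.0], [0.0, 0.0]], [0.0, 0.0]
    g_wo, g_bo = [0.0, 0.0], 0.0
    for x, t in XOR:
        hidden, out = forward(p, x)
        # Error signal at the output neuron.
        delta_out = 2 * (out - t) * out * (1 - out) / len(XOR)
        g_bo += delta_out
        for j in range(2):
            g_wo[j] += delta_out * hidden[j]
            # Pass the error backwards through the output weight to hidden neuron j.
            delta_h = delta_out * p["w_out"][j] * hidden[j] * (1 - hidden[j])
            g_bh[j] += delta_h
            for i in range(2):
                g_wh[j][i] += delta_h * x[i]
    # Move every weight a small step against its gradient.
    p["b_out"] -= lr * g_bo
    for j in range(2):
        p["w_out"][j] -= lr * g_wo[j]
        p["b_hidden"][j] -= lr * g_bh[j]
        for i in range(2):
            p["w_hidden"][j][i] -= lr * g_wh[j][i]


initial_loss = mean_squared_error(params)
for _ in range(5000):
    train_step(params)
final_loss = mean_squared_error(params)
print(f"loss before training: {initial_loss:.4f}, after: {final_loss:.4f}")
```

How close the network gets to a perfect XOR depends on the starting weights and the training budget. The point of the sketch is the mechanism: the output error is passed backwards to assign each weight its share of the blame.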

5.2 Statistical AI and Machine Learning (1990s)

The 1990s saw a shift away from both symbolic AI and neural networks toward statistical and probabilistic approaches. Rather than trying to encode knowledge explicitly or mimic the brain, researchers focused on building systems that could learn patterns from large amounts of data using statistical methods.

Key developments included:

Support Vector Machines (SVMs): Powerful classification algorithms that found optimal decision boundaries between categories

Bayesian Networks: Probabilistic models that could reason under uncertainty

Hidden Markov Models: Sequential models used for speech recognition and bioinformatics

Random Forests and Ensemble Methods: Combining multiple models for better predictions

This era also saw AI achieve high-profile milestones, most visibly Deep Blue’s 1997 chess victory over Garry Kasparov (see Table 6).

6 The Deep Learning Revolution (2006–Present)

6.1 What Changed: Data, Compute, and Algorithms

The current AI revolution — driven by deep learning — emerged from the convergence of three factors that finally came together in the early 2010s:

Data. The internet, smartphones, social media, and IoT devices generated unprecedented volumes of data. ImageNet (2009) provided 14 million labeled images for training computer vision models. Wikipedia, web pages, and digitized books provided trillions of words for language models.

Compute. GPUs (Graphics Processing Units), originally designed for video games, turned out to be ideal for the matrix operations required by neural networks. NVIDIA’s CUDA platform (2007) made GPU computing accessible. Later, Google developed custom TPUs (Tensor Processing Units) specifically for AI workloads.

Algorithms. Key innovations unlocked deep learning’s potential: better activation functions (ReLU), training techniques (dropout, batch normalization), new architectures (CNNs, RNNs, LSTMs), and, most importantly, the transformer architecture (2017).

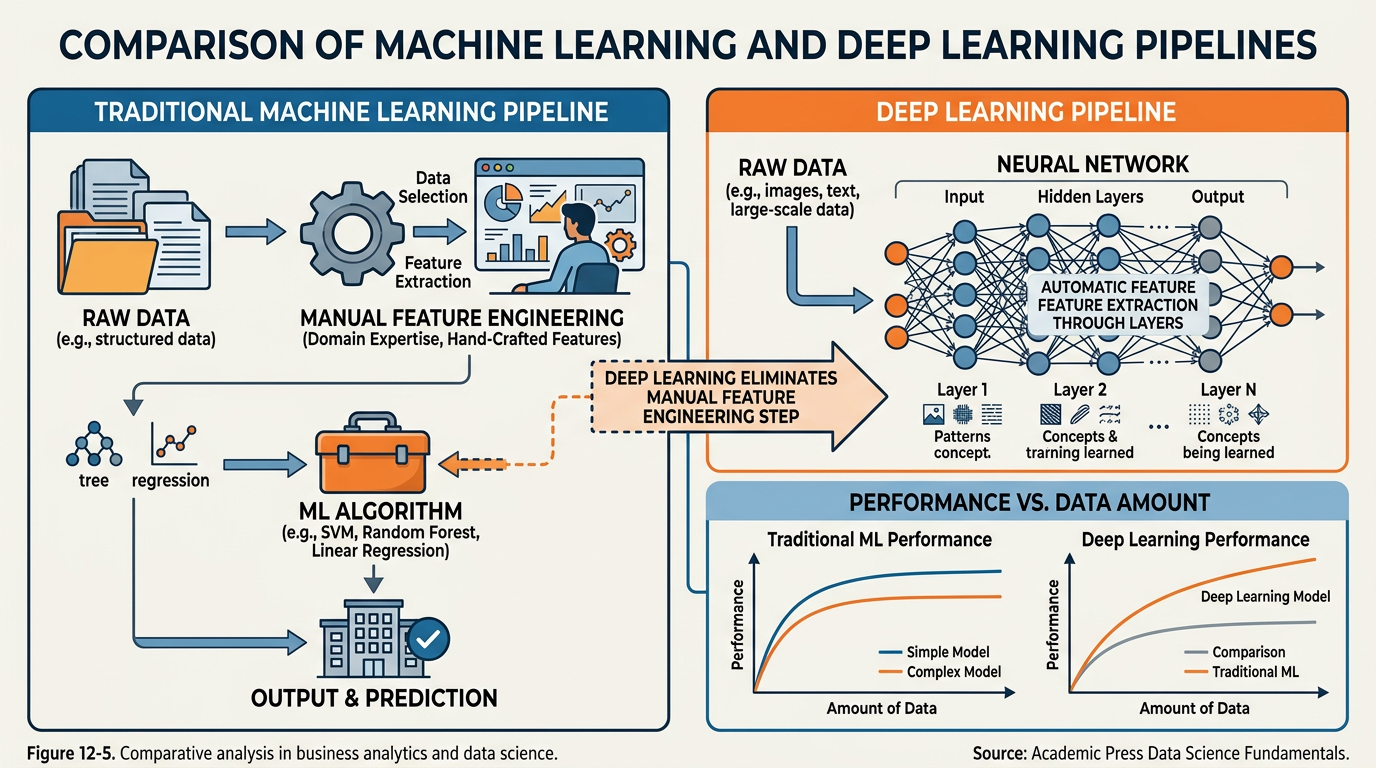

Figure 4: Traditional Machine Learning requires manual feature engineering by domain experts, while Deep Learning automatically learns features from raw data through successive neural network layers, a key advantage that enabled the current AI revolution.

6.2 Geoffrey Hinton and the Deep Learning Breakthrough

Geoffrey Hinton, often called the “godfather of deep learning,” played a pivotal role in the revolution. Along with his students, Hinton had been developing and refining neural network techniques for decades — even during the periods when the approach was deeply unfashionable.

The turning point came in 2012, when Hinton’s student Alex Krizhevsky entered the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) with a deep convolutional neural network called AlexNet. The results were staggering:

Table 4: ImageNet Challenge: Before and After AlexNet

| Year | Best Top-5 Error Rate | Approach | Improvement |
|---|---|---|---|
| 2010 | 28.2% | Traditional computer vision (hand-crafted features) | Baseline |
| 2011 | 25.8% | Traditional computer vision | Small improvement |
| 2012 | 16.4% | AlexNet (Deep CNN) | Drop of nearly 10 points |
| 2014 | 6.7% | GoogLeNet / VGGNet | Continued improvement |
| 2015 | 3.6% | ResNet (152 layers) | Surpassed estimated human performance (~5%) |

AlexNet’s victory was not incremental — it was a paradigm shift. The error rate dropped by nearly 10 percentage points in a single year, compared to the 2-3 point improvements that had been typical. The AI research community took notice, and the deep learning revolution began in earnest.

6.3 Computer Vision: Teaching Machines to See

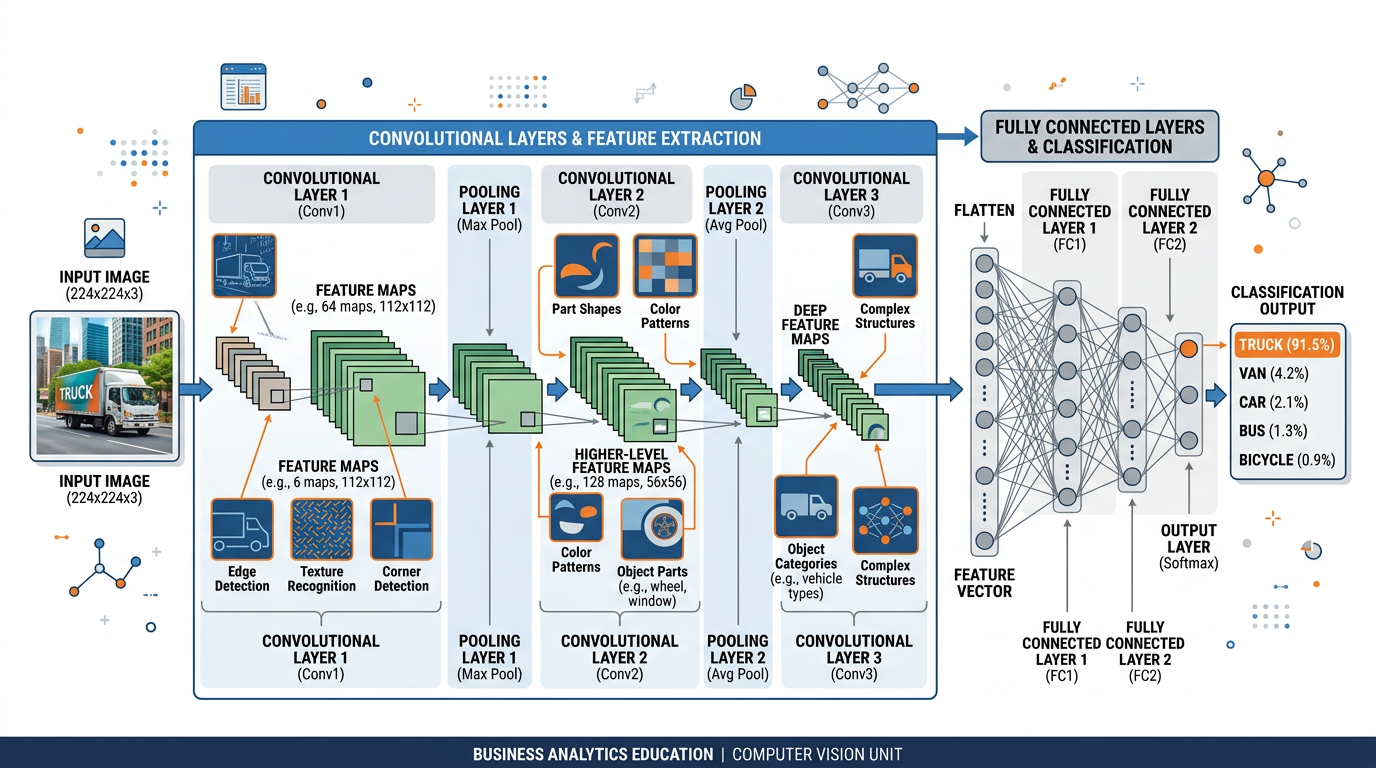

Figure 5: How a Convolutional Neural Network (CNN) processes an image: raw pixels flow through convolutional layers that detect features (edges, textures, shapes), pooling layers that compress information, and fully connected layers that produce the final classification.

Convolutional Neural Networks (CNNs) are the deep learning architecture that enabled the computer vision revolution. Inspired by the visual cortex of the human brain, CNNs process images through a hierarchy of layers:

Convolutional layers apply filters to detect basic features (edges, corners, textures)

Pooling layers downsample the data, reducing dimensionality while preserving important features

Deeper layers combine basic features into increasingly complex patterns (eyes, faces, objects)

Fully connected layers make the final classification decision
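The first two steps in this hierarchy, convolution and pooling, can be sketched on a toy image. The example below is a simplified illustration: the 5x5 image, the hand-written vertical-edge filter, and the function names are our own. A real CNN learns its filter values during training and stacks many such layers.

```python
def convolve2d(image, kernel):
    """Slide the filter over the image (no padding, stride 1).

    As in most deep learning libraries, the filter is applied without flipping.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [sum(image[r + i][c + j] * kernel[i][j]
             for i in range(kh) for j in range(kw))
         for c in range(out_w)]
        for r in range(out_h)
    ]


def max_pool(feature_map, size=2):
    """Keep only the strongest response in each size x size block."""
    return [
        [max(feature_map[r + i][c + j]
             for i in range(size) for j in range(size))
         for c in range(0, len(feature_map[0]) - size + 1, size)]
        for r in range(0, len(feature_map) - size + 1, size)
    ]


# Toy image: dark on the left (0), bright on the right (1).
image = [[0, 0, 0, 1, 1] for _ in range(5)]

# Hand-written filter that responds where brightness increases from left to right.
vertical_edge = [[-1, 1],
                 [-1, 1]]

features = convolve2d(image, vertical_edge)
pooled = max_pool(features)
print(features)
print(pooled)
```

The feature map is non-zero only where the dark and bright regions meet, and pooling halves its size while keeping that edge response.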

Business Applications of Computer Vision

Retail:

Visual search: Customers photograph products to find where to buy them (Google Lens, Pinterest)

Inventory management: Cameras automatically track shelf stock levels

Customer analytics: Foot traffic analysis, heat maps, dwell time measurement

Self-checkout: Computer vision identifies products without barcodes

Loss prevention: Detecting theft in real-time through video analysis

Manufacturing:

Quality control: Automated inspection of products on assembly lines detecting defects invisible to the human eye

Predictive maintenance: Visual analysis of equipment wear patterns

Safety monitoring: Detecting when workers are in unsafe positions or not wearing protective equipment

Process optimization: Analyzing production line video to identify bottlenecks

Healthcare:

Medical imaging: Detecting tumors, fractures, and diseases in X-rays, MRIs, and CT scans

Pathology: Analyzing tissue samples for cancer cells

Dermatology: AI-powered skin cancer screening from smartphone photos

Surgical assistance: Computer vision guides robotic surgery systems

Drug development: Analyzing cellular responses to drug compounds

Agriculture:

Crop monitoring: Drone-based detection of disease, pests, and nutrient deficiency

Yield prediction: Satellite imagery analysis for harvest forecasting

Weed detection: Precision herbicide application using computer vision

Livestock monitoring: Health and behavior tracking for individual animals

6.4 Natural Language Processing: Teaching Machines to Read and Write

Natural language processing (NLP) has undergone a dramatic transformation thanks to deep learning. Traditional NLP relied on handcrafted rules and statistical methods. Modern NLP, powered by transformer-based models, can perform tasks that seemed impossible just a decade ago.

Key NLP Milestones:

Table 5: NLP Evolution

| Era | Approach | Capabilities | Limitations |
|---|---|---|---|
| Rule-Based (1960s-1990s) | Handcrafted grammar rules and dictionaries | Simple parsing, keyword search | Rigid, brittle, couldn’t handle ambiguity |
| Statistical (1990s-2010s) | Statistical models trained on text corpora | Machine translation, spam detection, basic sentiment | Required extensive feature engineering |
| Deep Learning (2013-2017) | Word embeddings, RNNs, LSTMs | Improved translation, question answering | Struggled with long-range dependencies |
| Transformer Era (2017-Present) | Attention-based architectures, LLMs | Human-level text generation, translation, summarization, coding | Hallucination, bias, context window limits |

The Transformer Revolution

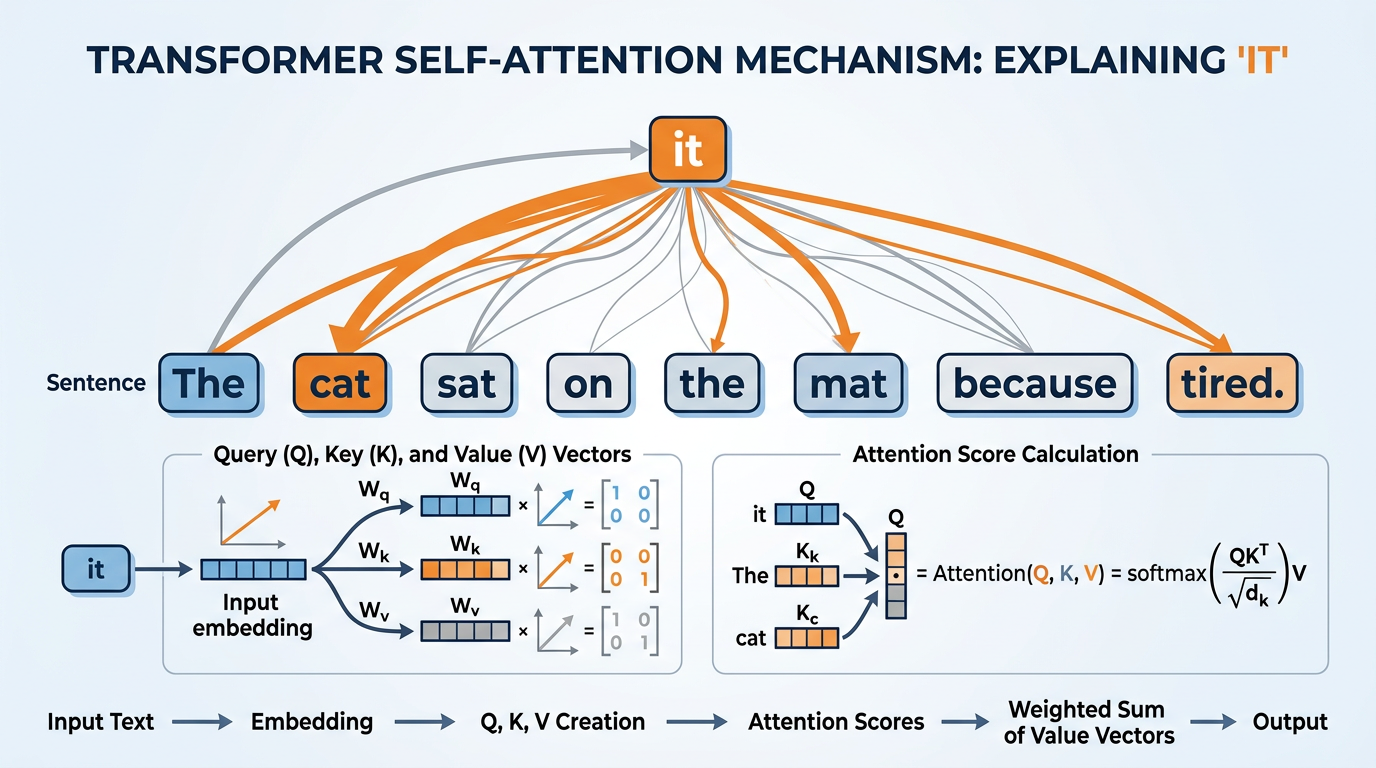

The 2017 paper “Attention Is All You Need” by Vaswani et al. introduced the transformer architecture, which fundamentally changed NLP and eventually all of AI. The key innovation was the self-attention mechanism, which allows the model to consider the relationships between all words in a sentence simultaneously, rather than processing them one at a time.

Figure 6: The self-attention mechanism in action. The transformer model learns which words in a sentence are most relevant to each other, enabling it to understand context and meaning far better than previous approaches.
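Self-attention can be sketched as a small calculation. In the simplified example below, each word is a short list of numbers, every word is scored against every other word with a dot product, and a softmax turns the scores into attention weights that sum to 1. The two-dimensional word vectors are hypothetical values chosen for illustration, and the sketch uses the raw vectors as queries, keys, and values, whereas a real transformer first passes them through learned projection matrices.

```python
import math


def softmax(scores):
    """Convert raw scores into positive weights that sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention using the inputs as queries, keys, and values."""
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for query in vectors:
        # Score this word against every word in the sentence at once.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        all_weights.append(weights)
        # The new representation is a weighted blend of all the word vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, vectors))
                        for j in range(dim)])
    return all_weights, outputs


# Hypothetical 2-dimensional word vectors, for illustration only.
sentence = {"bank": [1.0, 0.0], "river": [0.9, 0.1], "loan": [0.0, 1.0]}
weights, outputs = self_attention(list(sentence.values()))

for word, row in zip(sentence, weights):
    print(word, [round(w, 2) for w in row])
```

With these made-up vectors, “bank” places more weight on “river” than on “loan”. In a trained model, the vectors and projections are learned so that such weights reflect real context.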

This architecture enabled:

BERT (2018): Google’s bidirectional model that understood language context in both directions, achieving state-of-the-art results on many NLP benchmarks of the time

GPT-2 (2019): OpenAI’s text generation model, so capable that OpenAI initially withheld it from public release over concerns about misuse

GPT-3 (2020): With 175 billion parameters, GPT-3 demonstrated remarkable few-shot learning abilities

ChatGPT (2022): Fine-tuned with human feedback, making LLM technology accessible to the general public

GPT-4 (2023), Claude 3, Gemini Ultra: Multimodal models capable of processing text, images, audio, and code

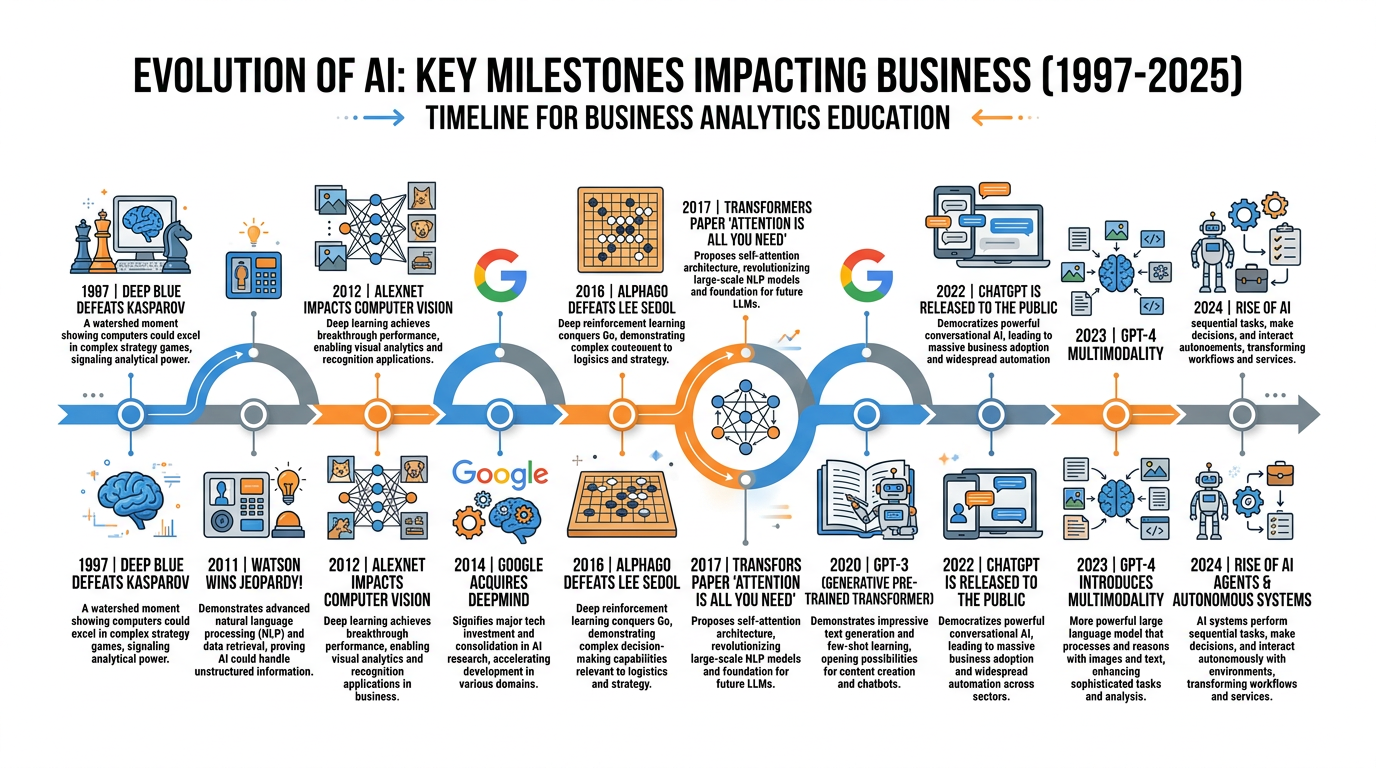

6.5 AI Milestones That Shaped Business

The following timeline highlights the key moments where AI capabilities crossed thresholds that made them commercially viable:

Figure 7: Key AI milestones that transformed business possibilities. Each breakthrough opened new categories of commercial applications.

Table 6: Landmark AI Achievements

| Year | Milestone | Significance for Business |
|---|---|---|
| 1997 | Deep Blue beats Kasparov at chess | Proved AI could match human expertise in specific domains |
| 2011 | IBM Watson wins Jeopardy! | Demonstrated AI understanding of natural language and general knowledge |
| 2012 | AlexNet wins ImageNet | Launched the deep learning revolution; computer vision became commercially viable |
| 2014 | Google acquires DeepMind ($500M) | Signaled Big Tech’s massive bet on AI |
| 2016 | AlphaGo defeats world Go champion | AI mastered a game thought to require human intuition; reinforcement learning proved powerful |
| 2017 | Transformer architecture published | Foundation for all modern language models and generative AI |
| 2020 | GPT-3 released | Demonstrated that language models could perform many tasks without specific training |
| 2022 | ChatGPT launches | Fastest-growing consumer app in history; made generative AI mainstream |
| 2023 | GPT-4 and multimodal models | AI that can process text, images, audio, and code together |
| 2024 | AI agents and autonomous workflows | AI systems that can plan, use tools, and complete multi-step tasks |

7 Understanding Neural Networks: A Business-Friendly Explanation

7.1 How Neural Networks Learn

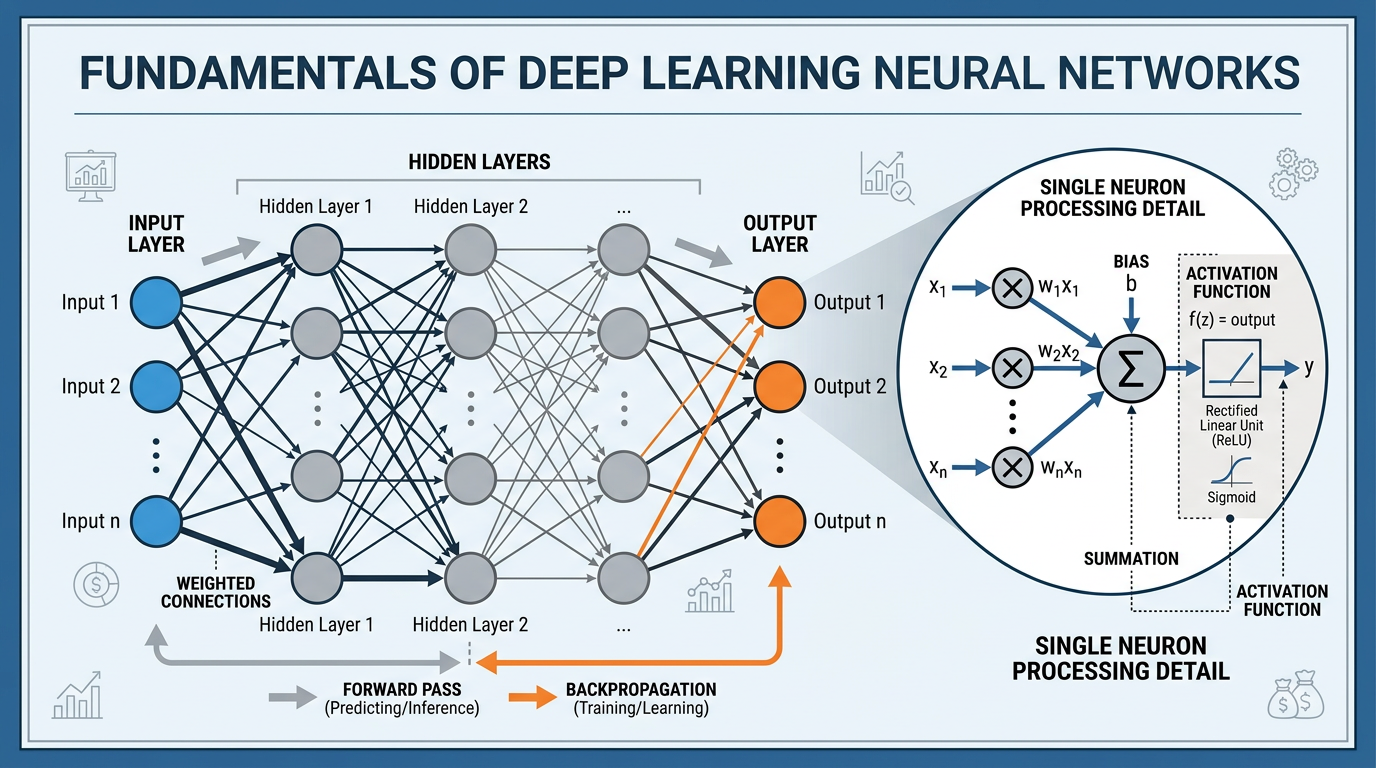

Figure 8: A detailed view of a neural network’s architecture, with a close-up of how a single artificial neuron processes its inputs: multiplying by weights, adding a bias, and applying an activation function to produce an output.

You don’t need to implement neural networks to be an effective business leader — but you do need to understand conceptually how they work. Here is a business-friendly explanation:

Imagine you’re training a new employee to evaluate loan applications.

You give them examples (historical loan applications with outcomes: approved/denied and whether the borrower repaid). This is the training data.

They start with guesses. At first, they have no idea which factors matter. They might weight income, debt, and employment history equally. These initial weights are like the initial parameters of a neural network.

They make predictions and get feedback. For each application, they predict whether to approve. You tell them if they were right or wrong. This is the training loop.

They adjust their approach. After seeing many examples, they learn which factors are most predictive. They might discover that debt-to-income ratio matters most, followed by payment history, while zip code matters little. This adjustment is backpropagation — the network adjusts its weights to reduce errors.

They develop intuition. After thousands of examples, they develop pattern recognition that they might not even be able to articulate fully. A deep neural network does the same — it develops representations of the data that capture complex, non-obvious relationships.

You test them on new cases. Finally, you evaluate their performance on applications they’ve never seen. This is the validation/test phase, and it tells you how well their learning will generalize to new situations.
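The loan-officer analogy maps onto code fairly directly. The sketch below trains a single artificial neuron on a handful of made-up loan records and then checks it on two applicants it has never seen. Everything specific here is a hypothetical illustration: the two features, the data values, the learning rate, and the function names. With only one neuron, the weight adjustment reduces to plain gradient descent; backpropagation is the extension of the same idea to many layers.

```python
import math

# Hypothetical training data:
# (debt-to-income ratio, share of payments missed) -> 1 if repaid, 0 if defaulted.
TRAIN = [((0.1, 0.0), 1), ((0.2, 0.1), 1), ((0.3, 0.0), 1),
         ((0.7, 0.5), 0), ((0.8, 0.9), 0), ((0.9, 0.6), 0)]


def predict(weights, bias, features):
    """A single neuron: weighted sum, plus bias, through a sigmoid activation."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def average_loss(weights, bias, data):
    """Cross-entropy loss: how wrong the predictions are on average."""
    total = 0.0
    for features, label in data:
        p = predict(weights, bias, features)
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(data)


def train(data, steps=2000, lr=0.5):
    """Start from zero weights, then repeatedly predict, compare, and adjust."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for features, label in data:
            error = predict(weights, bias, features) - label  # the feedback
            for i, x in enumerate(features):
                grad_w[i] += error * x / len(data)
            grad_b += error / len(data)
        weights = [w - lr * g for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b
    return weights, bias


loss_before = average_loss([0.0, 0.0], 0.0, TRAIN)
weights, bias = train(TRAIN)
loss_after = average_loss(weights, bias, TRAIN)

# Held-out applicants the neuron never saw during training.
low_risk = predict(weights, bias, (0.15, 0.05))
high_risk = predict(weights, bias, (0.85, 0.7))
print(f"loss: {loss_before:.3f} -> {loss_after:.3f}")
print(f"repayment estimate: low-risk {low_risk:.2f}, high-risk {high_risk:.2f}")
```

The learned weights play the role of the employee’s sense of which factors matter, and the held-out applicants stand in for the test phase.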

7.2 Deep Learning Architectures for Business

Different business problems require different neural network architectures:

Table 7: Neural Network Architectures and Business Applications

| Architecture | What It’s Good At | Business Applications |
|---|---|---|
| Feedforward Neural Networks | Classification, prediction from structured data | Credit scoring, demand forecasting, customer churn prediction |
| Convolutional Neural Networks (CNNs) | Image and visual data processing | Quality control, medical imaging, facial recognition, autonomous vehicles |
| Recurrent Neural Networks (RNNs/LSTMs) | Sequential data and time series | Stock prediction, speech recognition, language translation |
| Transformers | Language understanding and generation, multimodal processing | ChatGPT, search engines, document analysis, code generation |
| Generative Adversarial Networks (GANs) | Creating realistic synthetic data and images | Product design, data augmentation, creative content |
| Autoencoders | Anomaly detection, data compression | Fraud detection, recommendation systems, data denoising |

8 The Business Impact of AI’s Evolution

8.1 From Research to Revenue

The transition of AI from academic curiosity to business necessity has accelerated dramatically:

Table 8: Global AI Market Growth

| Year | Global AI Market Size | Key Driver |
|---|---|---|
| 2015 | $3.2 billion | Early enterprise adoption of ML |
| 2018 | $21.5 billion | Cloud AI services (AWS, Azure, GCP) |
| 2020 | $62.4 billion | Pandemic-accelerated digital transformation |
| 2022 | $119.8 billion | ChatGPT and generative AI explosion |
| 2024 | $196.6 billion | Enterprise AI adoption across industries |
| 2030 (est.) | $1.3 trillion | AI integration into all business functions |
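One way to read Table 8 is to convert the endpoints into a compound annual growth rate (CAGR). The sketch below applies the standard CAGR formula to the table’s own figures. The function name is ours, and the result is only as reliable as the market estimates themselves.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from Table 8, in billions of dollars.
growth_2015_2024 = cagr(3.2, 196.6, 2024 - 2015)
implied_2024_2030 = cagr(196.6, 1300.0, 2030 - 2024)

print(f"2015-2024: {growth_2015_2024:.1%} per year")
print(f"2024-2030 (implied by the estimate): {implied_2024_2030:.1%} per year")
```

On these figures the market grew by roughly 58% per year from 2015 to 2024, and the 2030 estimate implies roughly 37% per year from here, which is slower but still very rapid growth.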

8.2 Case Study: AlphaGo and the Business of Intuition

In March 2016, DeepMind’s AlphaGo defeated Lee Sedol, one of the greatest Go players in history, 4 games to 1. This was considered a landmark far more significant than Deep Blue’s chess victory, because Go was thought to require human intuition. The game has more possible board positions than there are atoms in the observable universe, so brute-force search was impossible.

AlphaGo learned Go through a combination of supervised learning (studying millions of positions from human expert games) and reinforcement learning (playing millions of games against itself). In Game 2, AlphaGo played Move 37, a move that stunned the Go world. Expert commentators initially thought it was a mistake. It turned out to be brilliant: a creative, counter-intuitive play that professionals said a human player would almost never have chosen.

8.3 Case Study: How Netflix Uses Deep Learning

Netflix’s recommendation engine is one of the most successful AI applications in business history, estimated to save the company $1 billion per year by reducing churn. The system uses deep learning to analyze:

Viewing history: What you’ve watched, when, and for how long

Browsing behavior: What you’ve searched for, scrolled past, or hovered over

Content features: Genre, actors, directors, themes, pacing, visual style

Contextual data: Time of day, device type, day of week

Similar user behavior: What viewers with similar tastes watch

The deep learning models learn complex, non-obvious relationships — for example, that people who watched a specific documentary on a Tuesday evening are likely to enjoy a particular foreign thriller on weekend mornings. These patterns are too subtle and numerous for humans to identify, but deep learning excels at finding them.

9 AI Summers and Winters: Lessons for Today

Figure 9: The dramatic cycles of AI funding and enthusiasm over seven decades, from early optimism through two AI winters to the current deep learning boom. Understanding these cycles helps business leaders evaluate today’s AI investments with historical perspective.

9.1 Are We in an AI Bubble?

The current period of AI investment is unprecedented. In 2023 alone, venture capital firms invested over $50 billion in AI startups. Major tech companies are spending tens of billions on AI infrastructure. The question every business student should be asking is: Are we in another AI bubble?

Reasons for optimism:

AI is generating real revenue, not just research papers

ChatGPT reached 100M users in 2 months, a sign of genuine consumer demand

AI is already deployed across industries (not just demos)

Massive training data and compute are already available

Major companies report measurable ROI from AI investments

Unlike previous eras, AI tools are accessible to small businesses

Reasons for caution:

Overvaluation: Many AI companies are valued at 100x+ revenue

Commodity risk: Basic AI capabilities are becoming free/cheap

Energy costs: Training and running large models is extremely expensive

Regulation: EU AI Act and other regulations may slow adoption

Hallucination problem: AI unreliability limits high-stakes applications

Talent shortage: Not enough skilled AI workers to meet demand

Historical pattern: Every previous AI boom has ended in a bust

10 The Future: What’s Next?

As we look ahead, several trends are shaping the next phase of AI’s evolution:

Multimodal AI: Systems that seamlessly process text, images, audio, video, and code together (already emerging with GPT-4, Gemini, and Claude)

AI Agents: Systems that can plan, reason, use tools, and complete multi-step tasks autonomously

Edge AI: Running AI models on local devices (phones, cars, IoT sensors) rather than in the cloud

Specialized AI: Custom models trained for specific industries and tasks

AI Regulation: Governments worldwide developing frameworks to govern AI development and deployment

Quantum AI: Quantum computing potentially enabling AI capabilities impossible on classical computers

“Call to me and I will answer you and tell you great and unsearchable things you do not know.”

Jeremiah 33:3 (NIV)

As Christians, we can marvel at the creativity and intelligence that God has given humanity — the ability to create systems that learn, perceive, and generate. The history of AI is, in many ways, a testament to the restless curiosity and persistent ingenuity that are part of our nature as beings made in God’s image. Our calling is to direct these extraordinary capabilities toward purposes that honor God, serve our neighbors, and promote human flourishing.

11Chapter Summary¶

The history of AI teaches us essential lessons for the business world:

AI has evolved through cycles of optimism and disappointment — the current era is transformative but not immune to setbacks.

Three factors converged to enable the deep learning revolution: massive data, powerful hardware (especially GPUs), and algorithmic breakthroughs.

Neural networks, inspired by the human brain, learn from data through a process of making predictions, receiving feedback, and adjusting — similar to how humans learn from experience.

Computer vision has achieved and surpassed human-level performance in specific tasks, enabling applications from medical imaging to autonomous driving.

Natural language processing, powered by the transformer architecture, has progressed from basic keyword matching to text understanding and generation that rival human performance on many tasks.

The transformer architecture (2017) is the breakthrough behind modern LLMs like ChatGPT, Claude, and Gemini.

Business adoption of AI has accelerated dramatically, with the global market projected to reach $1.3 trillion by 2030.

History teaches caution alongside optimism — evaluate AI investments based on demonstrated results, not hype.
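The predict, receive feedback, adjust loop mentioned in the summary above can be made concrete with a very small sketch. It assumes a single artificial neuron with one weight and one bias, a made-up dataset following the rule y = 2x + 1, and an arbitrarily chosen learning rate. With only one neuron there are no layers to propagate errors through, so this is plain gradient descent, the update rule that backpropagation extends to deeper networks. The function name `train` and all values are illustrative, not taken from any library.

```python
def train(examples, learning_rate=0.05, epochs=500):
    """Fit a one-weight, one-bias neuron to (input, target) pairs."""
    weight, bias = 0.0, 0.0  # model parameters, starting untrained
    for _ in range(epochs):
        for x, target in examples:
            prediction = weight * x + bias   # 1. make a prediction
            error = prediction - target      # 2. receive feedback
            # 3. adjust each parameter a small step in the direction
            #    that shrinks the squared error for this example
            weight -= learning_rate * error * x
            bias -= learning_rate * error
    return weight, bias


# Hypothetical training data generated from the rule y = 2x + 1
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
weight, bias = train(examples)
print(round(weight, 2), round(bias, 2))
```

After enough passes over the data, the learned weight and bias should settle close to 2 and 1, the values hidden in the examples. Modern networks repeat the same cycle across billions of parameters rather than two.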

Turing Test A test of machine intelligence proposed by Alan Turing (1950) in which a human evaluator converses with both a human and a machine; if the evaluator cannot reliably distinguish them, the machine is said to have passed.

Symbolic AI An approach to AI that represents knowledge using human-readable symbols and manipulates them using formal rules, dominant from the 1950s through the 1980s.

Expert System An AI program that encodes the decision-making knowledge of human experts in a specific domain using rule-based systems.

AI Winter A period of reduced funding, interest, and progress in AI research, typically following a cycle of inflated expectations and underdelivered promises.

Neural Network A computational model inspired by the brain, consisting of interconnected layers of artificial neurons that learn patterns from data.

Deep Learning A subset of machine learning using neural networks with many layers to learn hierarchical representations of data.

Backpropagation An algorithm for training neural networks by propagating errors backward through the network and adjusting weights to minimize those errors.

Convolutional Neural Network (CNN) A neural network architecture specialized for processing visual data, using convolutional filters to detect features at multiple levels of abstraction.

Transformer A neural network architecture based on self-attention mechanisms, introduced in 2017, that forms the foundation of modern large language models.

Computer Vision A field of AI enabling computers to interpret visual information from images and video.

Natural Language Processing (NLP) A field of AI focused on enabling computers to understand, interpret, and generate human language.

ImageNet A large-scale dataset of labeled images used as a benchmark for computer vision research; the ImageNet Challenge catalyzed the deep learning revolution.

GPU (Graphics Processing Unit) A processor originally designed for rendering graphics; GPUs became the primary hardware for training deep learning models because they can perform massively parallel computations.

Self-Attention Mechanism The core innovation of the transformer architecture that allows the model to weigh the importance of different parts of the input relative to each other.

Model Parameters The numerical values (weights and biases) within a neural network that are adjusted during training; modern LLMs have billions to trillions of parameters.

Reinforcement Learning A machine learning (ML) approach where an agent learns optimal behavior through trial and error, receiving rewards or penalties from its environment.
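The Self-Attention Mechanism entry above describes a model weighing parts of its input against each other. A minimal sketch of that idea follows, using scaled dot-product attention on three made-up two-dimensional token vectors. As a simplifying assumption, the queries, keys, and values are the input vectors themselves; real transformers first pass the inputs through learned projections, which are omitted here. The function names are illustrative only.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values.

    Learned projection matrices are left out (simplifying assumption).
    """
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # How strongly does this token match every token in the input?
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # New representation: a weighted blend of all token vectors
        mixed = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up vectors: the first two tokens are similar, the third is not
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 2) for w in row])
```

Each printed row shows how much one token attends to every token in the input. The first token gives more weight to the similar second token than to the dissimilar third, which is the "weighing of importance" in the definition.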

12Module 2 Activities¶

12.1Discussion: AI History and Business Strategy¶

12.2Written Analysis: AI Technology Assessment¶

12.3Reflection: God, Creativity, and Machine Intelligence¶

12.4Hands-On Activity 1: AI Timeline Research Project¶

12.5Hands-On Activity 2: Neural Network Simulation¶

Having traced AI’s evolution from Turing’s visionary ideas to the deep learning revolution, we are now ready to dive deep into one of AI’s most transformative capabilities. In Chapter 3, we will explore Natural Language Processing — the technology that enables machines to understand, interpret, and generate human language, powering everything from chatbots to search engines to the large language models reshaping business today.