Generative AI in Teaching, Learning, and Research

How Large Language Models Are Rewriting the Academic Contract

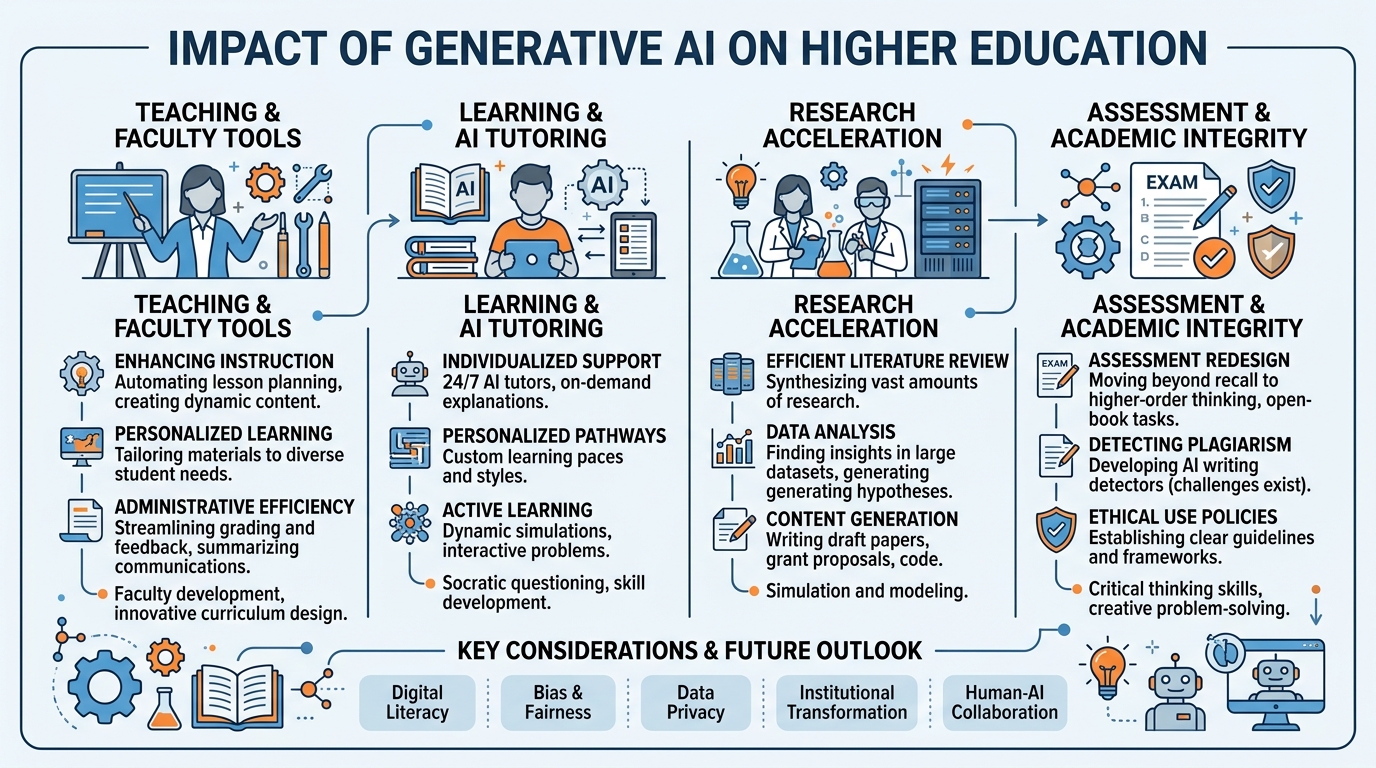

Figure 1:Chapter 2 Overview: Generative AI is reshaping every dimension of the academic enterprise — from how faculty design courses and assess learning, to how students write and research, to how scholars analyze data and review literature. This chapter maps the landscape of application, evidence, and challenge.

When ChatGPT became publicly available on November 30, 2022, higher education had approximately 72 hours before students started submitting AI-generated work. The initial institutional response — a wave of AI detection tools, updated syllabus language, and debate about returning to handwritten blue-book exams — captured the sector’s anxiety but missed the deeper transformation underway.

Generative AI is not primarily a cheating problem. It is a pedagogical revolution that is forcing a reckoning with fundamental questions that higher education has long deferred: What is the purpose of a written assignment? What cognitive processes do we actually want students to develop? What does learning look like when a student has an infinitely patient, encyclopedically informed AI tutor available at any hour? And what is the unique value of human instruction, mentorship, and intellectual community in an AI-abundant world?

This chapter examines generative AI’s impact on each major domain of academic life: teaching and course design, student learning, academic research, and the assessment systems that mediate between them.

12.1 Understanding Generative AI: A Primer for Educators¶

Before examining AI’s educational applications, educators need a working understanding of what generative AI actually is — and what it is not. Misconceptions in both directions (AI as omniscient oracle vs. AI as sophisticated autocomplete) lead to poor decisions about adoption and governance.

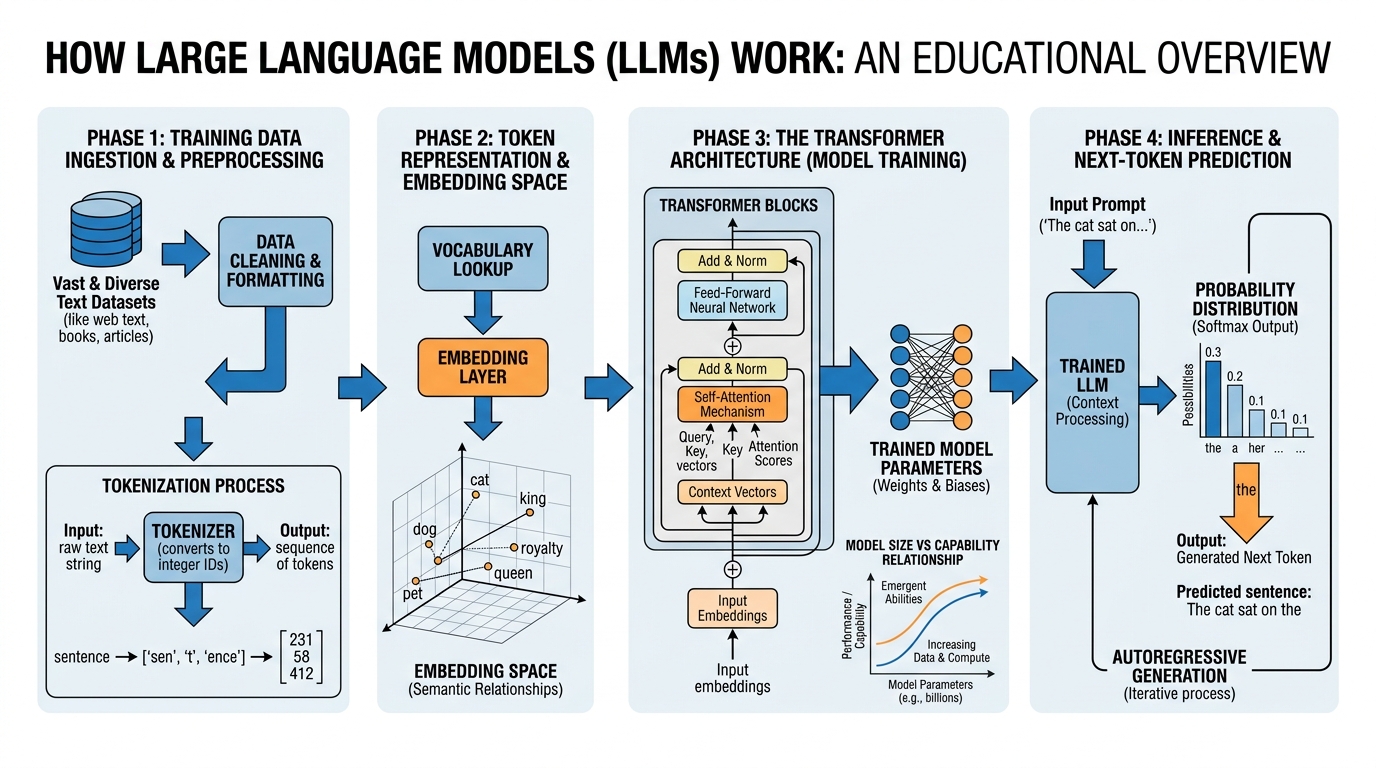

Figure 2:Figure 2.2: How Large Language Models (LLMs) work — from training data and transformer architecture to the inference process that generates responses. Understanding these mechanisms helps educators make more informed decisions about when AI is reliable, when it hallucinates, and what kinds of tasks it handles well or poorly.

1.12.1.1 The Core Mechanism: Prediction at Scale¶

Large Language Models like GPT-4, Claude, Gemini, and Llama are trained on vast corpora of text — billions of documents from the internet, books, academic papers, code repositories, and other sources. Through a process called self-supervised learning, these models learn to predict the next token (roughly, the next word-fragment) in a sequence, given all preceding tokens. Repeated across trillions of training examples, this seemingly simple objective produces systems with remarkable capabilities for text generation, reasoning, translation, summarization, and more.

The transformer architecture, introduced in the landmark 2017 paper “Attention Is All You Need” by Vaswani et al., enabled the scaling that made modern LLMs possible. The key innovation was the attention mechanism, which allows models to relate any part of an input sequence to any other part — capturing long-range dependencies in language that earlier recurrent architectures struggled to handle.

For educators, the critical insight is that LLMs are fundamentally statistical pattern matchers operating at extraordinary scale. They do not “understand” text the way humans do — they have no grounded experience, no embodied perception, no persistent memory across conversations. What they do have is an extraordinary capacity to generate fluent, contextually appropriate text that reflects the statistical regularities of their training data.

1.22.1.2 Capabilities and Limitations¶

LLMs excel at:

| Capability | Examples |

|---|---|

| Text generation | Drafting essays, emails, reports, proposals |

| Summarization | Condensing long documents, literature reviews |

| Translation | Cross-language communication, code translation |

| Explanation | Breaking down complex concepts at multiple levels |

| Code generation | Writing, debugging, explaining code |

| Brainstorming | Generating ideas, outlines, counterarguments |

| Feedback | Commenting on drafts, identifying weaknesses |

| Question answering | Responding to factual and conceptual questions |

LLMs struggle with:

| Limitation | Why It Matters in Education |

|---|---|

| Hallucination | Generates plausible-sounding false information; problematic for research and factual claims |

| Knowledge cutoffs | Does not know about events after training data cutoff |

| Reasoning limits | Struggles with multi-step mathematical reasoning, formal logic |

| No memory | Cannot remember previous conversations by default |

| Bias | Reflects biases in training data, including racial, gender, and cultural biases |

| Confidentiality | Inputs to commercial AI may be used for training or accessed by third parties |

1.32.1.3 The Generative AI Landscape in Higher Education (2025-2026)¶

The generative AI tools available to higher education in 2025-2026 span a wide spectrum of integration levels:

Consumer AI tools (ChatGPT, Claude, Gemini, Copilot) are accessible to anyone with an internet connection, require no institutional procurement, and are used by students and faculty independently of institutional systems.

Institutional AI platforms are enterprise agreements that provide AI capabilities with enhanced privacy protections, data governance controls, and often specialized higher education features. Microsoft 365 Copilot for Education, Google Workspace AI, and Anthropic’s Claude for Enterprise are examples.

LMS-integrated AI is built into Canvas, Blackboard, and Moodle — providing AI assistance for grade feedback, rubric generation, quiz creation, and accessibility features within the existing course management workflow.

Specialized EdTech AI includes purpose-built educational AI applications: Khanmigo (Khan Academy’s AI tutor), Cognii (AI writing feedback), Packback (AI-powered discussion facilitation), and dozens of discipline-specific tools.

Research AI includes tools like Elicit, ResearchRabbit, Semantic Scholar, and Consensus that use AI to help researchers find, evaluate, and synthesize academic literature.

22.2 AI-Enhanced Teaching: New Possibilities for Faculty¶

Generative AI is creating genuine new possibilities for teaching — not just efficiency gains but qualitatively different instructional experiences that were previously impossible at scale.

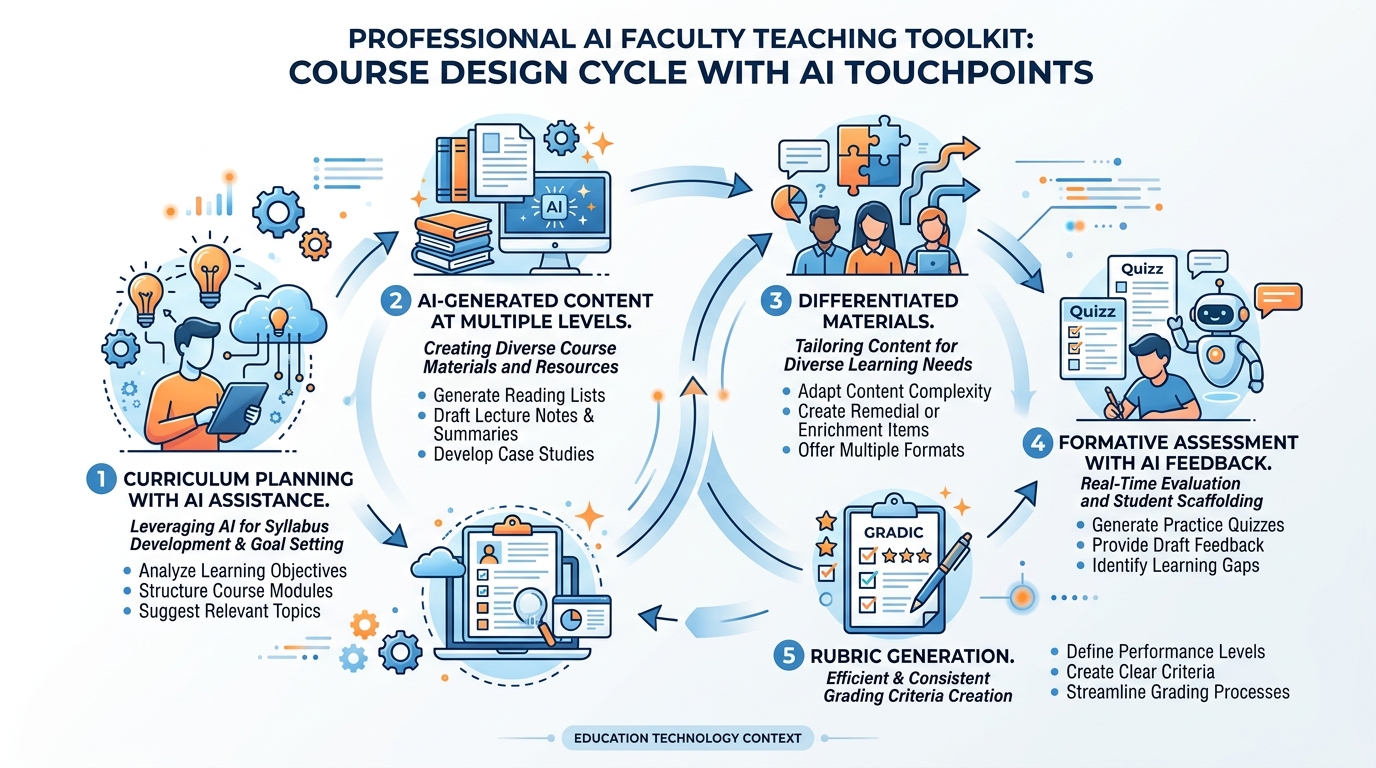

Figure 3:Figure 2.3: The faculty AI toolkit — how generative AI can support educators across the full course design and delivery cycle. The most impactful applications involve AI as a force multiplier for personalization and feedback at scale, tasks that have always been pedagogically valuable but prohibitively time-consuming.

2.12.2.1 Course Design and Curriculum Development¶

Faculty spend enormous amounts of time on course design: developing learning objectives, sequencing content, creating assignments, writing rubrics, and aligning assessments to outcomes. Generative AI can dramatically accelerate these processes:

Learning objective generation: Given a course description and level, AI can generate draft learning objectives using appropriate cognitive taxonomy language (Bloom’s taxonomy, for instance), which faculty can then review and refine.

Syllabus drafting: AI can produce initial syllabus drafts including course policies, weekly schedules, and reading list structures that faculty adapt to their specific context and content expertise.

Rubric creation: One of the most time-consuming aspects of assignment design, rubric creation can be accelerated significantly with AI assistance. Faculty describe the assignment and desired outcomes; AI produces a detailed rubric that faculty then refine.

Differentiated materials: A single set of course materials can be transformed by AI into multiple versions — simplified for students struggling with the content, enriched for advanced students, translated into other languages, or adapted for accessibility needs.

Case Study: University of Minnesota (2025) The University of Minnesota’s College of Education ran a structured pilot in 2025 in which 45 faculty members used an AI-assisted course design tool built on the Claude API. Faculty reported saving an average of 6.2 hours per course in initial course design work, with the most significant time savings in rubric creation (2.1 hours average) and learning objective alignment (1.8 hours average). Critically, faculty also reported higher satisfaction with their course designs — they felt they had more time to focus on the intellectual substance of their courses because AI handled more of the procedural formatting work.

2.22.2.2 Content Creation and Explanation¶

AI can generate explanatory content at different levels of complexity and in multiple formats, enabling personalization that was previously impossible for individual faculty to produce:

Multi-level explanations: A concept explained at introductory, intermediate, and advanced levels for students with different background preparation

Analogies and examples: AI is remarkably effective at generating multiple analogies for abstract concepts, helping students find one that connects with their existing knowledge

Visual description: For faculty creating lecture slides or course videos, AI can suggest visual representations, diagrams, and figures

Alternative problem sets: Math and science faculty can generate large banks of practice problems with solutions, enabling differentiated practice without manual labor

2.32.2.3 Formative Feedback at Scale¶

Perhaps the most consequential application of generative AI in teaching is its potential to dramatically scale formative feedback — the kind of iterative, specific guidance that helps students improve their work before they submit for a grade.

Research in educational psychology consistently finds that frequent, specific, actionable feedback is among the most powerful drivers of student learning. Yet providing such feedback is enormously time-consuming: a faculty member with 150 students simply cannot provide meaningful written feedback on multiple drafts of every major assignment. AI changes this calculus.

The Writing Feedback Use Case: Tools like Cognii, Grammarly’s AI features, and AI prompts built into LMSs can provide students with immediate, detailed feedback on draft essays — identifying structural weaknesses, logical gaps, weak evidence use, and prose clarity issues. This feedback can be calibrated to specific rubric criteria and learning objectives.

The pedagogical benefit is not just the feedback itself but the iterative process it enables: students can receive AI feedback on draft one, revise, receive feedback on draft two, revise again, and submit a much stronger final product — all before consuming any of the faculty member’s review time.

Case Study: George Mason University Writing Center AI Integration (2024-2025) George Mason University integrated AI writing feedback tools into their university writing center’s online service, enabling students to receive preliminary feedback before their synchronous or asynchronous appointments with human writing tutors. In a study of 1,200 students using the integrated system, writing quality on final submissions (measured by blinded rubric scoring) improved significantly compared to a control group using only human writing center services. Appointment efficiency also improved: tutors reported that students arrived better prepared, enabling more sophisticated conversations about argument and evidence rather than basic mechanics.

32.3 AI and Student Learning: Opportunities and Risks¶

The student relationship with generative AI is the most debated dimension of AI in higher education. Are students using AI to learn more effectively, or to avoid learning altogether? The evidence, as of 2025-2026, suggests the answer is: both, depending on how the AI is used and how assignments are designed.

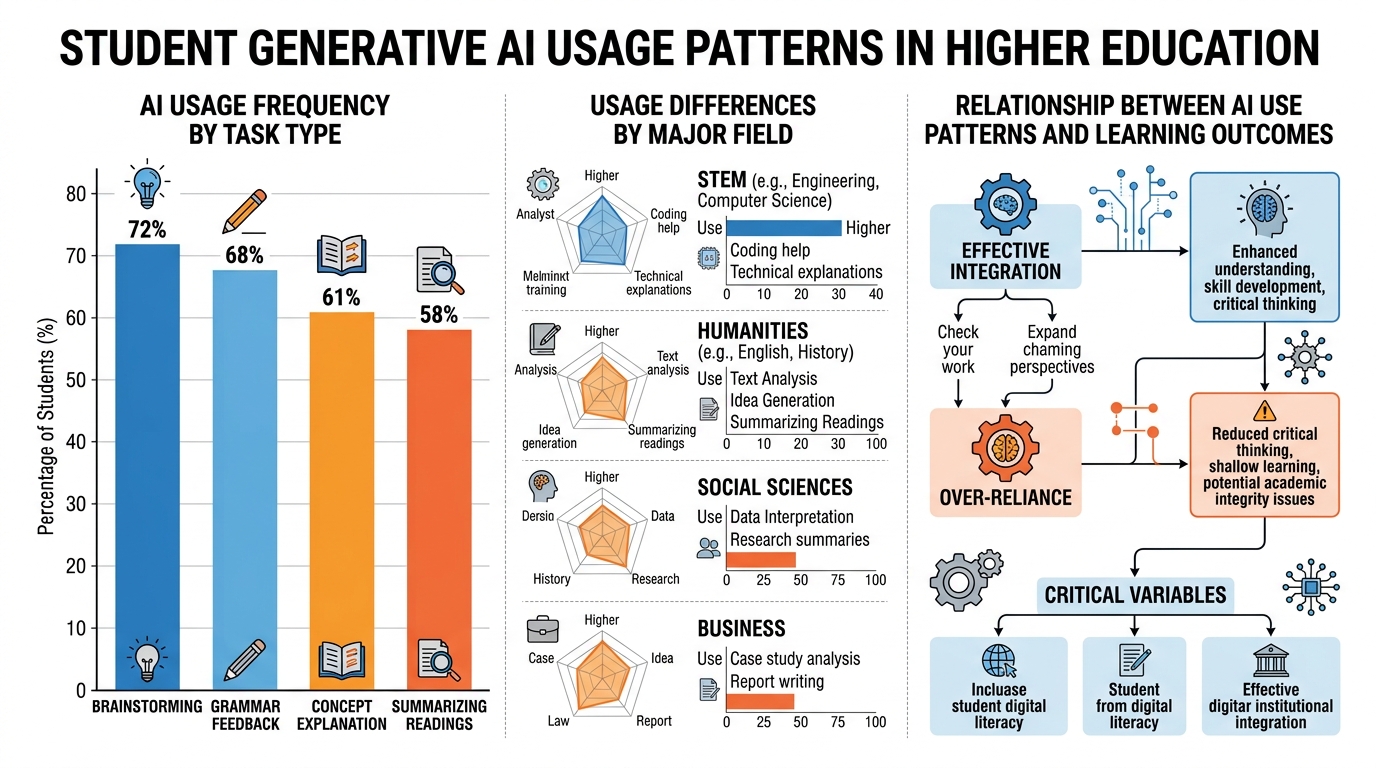

Figure 4:Figure 2.4: Student generative AI usage patterns in higher education (2024-2025 research synthesis). Usage is near-universal, but patterns vary significantly by task type, major, and institutional context. Critically, the relationship between AI use and learning outcomes depends heavily on how students use AI, not simply whether they use it.

3.12.3.1 How Students Are Actually Using AI¶

Survey research from EDUCAUSE, the Educause Center for Analysis and Research (ECAR), and multiple institutional studies provides a reasonably clear picture of student AI usage as of 2024-2025:

86% of college students report using generative AI tools at least occasionally for academic work

The most common use cases are: brainstorming/idea generation (72%), grammar and writing feedback (68%), explaining difficult concepts (61%), and summarizing readings (58%)

More problematic uses — using AI to generate substantial portions of submitted assignments without disclosure — are reported by approximately 30-35% of students in anonymous surveys

Usage patterns differ significantly by major: STEM students report high AI use for code debugging and explanation; humanities students for writing assistance; social science students for research synthesis

First-generation students and students from under-resourced high schools are more likely to use AI as a substitute for foundational writing skills rather than as a tool to enhance strong existing skills — a pattern with equity implications

3.22.3.2 AI Tutoring and Personalized Learning¶

The most pedagogically transformative application of AI for student learning is AI tutoring — providing personalized, interactive instruction that adapts to individual students’ knowledge levels, misconceptions, and learning pace.

Khan Academy’s Khanmigo (launched 2023, expanded 2024-2025) provides perhaps the most mature example. Built on GPT-4 and Claude, Khanmigo acts as a Socratic tutor: rather than simply giving students answers, it asks guiding questions designed to help students arrive at understanding themselves. When a student struggles with a quadratic equation, Khanmigo doesn’t solve it — it asks: “What’s the first step in solving a quadratic? Let’s think about what we know about the structure of the equation.”

Early research on Khanmigo’s effectiveness is promising. A 2025 study at a community college in California found that students using Khanmigo for developmental mathematics (remedial math below college level) advanced through remediation 40% faster than a control group using traditional online homework, with equivalent or better performance on subsequent college-level mathematics.

Duolingo’s AI-Native Platform has moved beyond language vocabulary drilling to provide AI conversation partners that simulate real-world language use, provide pronunciation feedback, and adapt to each learner’s vocabulary gaps and error patterns. In higher education language programs, Duolingo for Schools AI features are being integrated as out-of-class practice tools that free classroom time for higher-level communicative tasks.

3.32.3.3 The Cognitive Offloading Risk¶

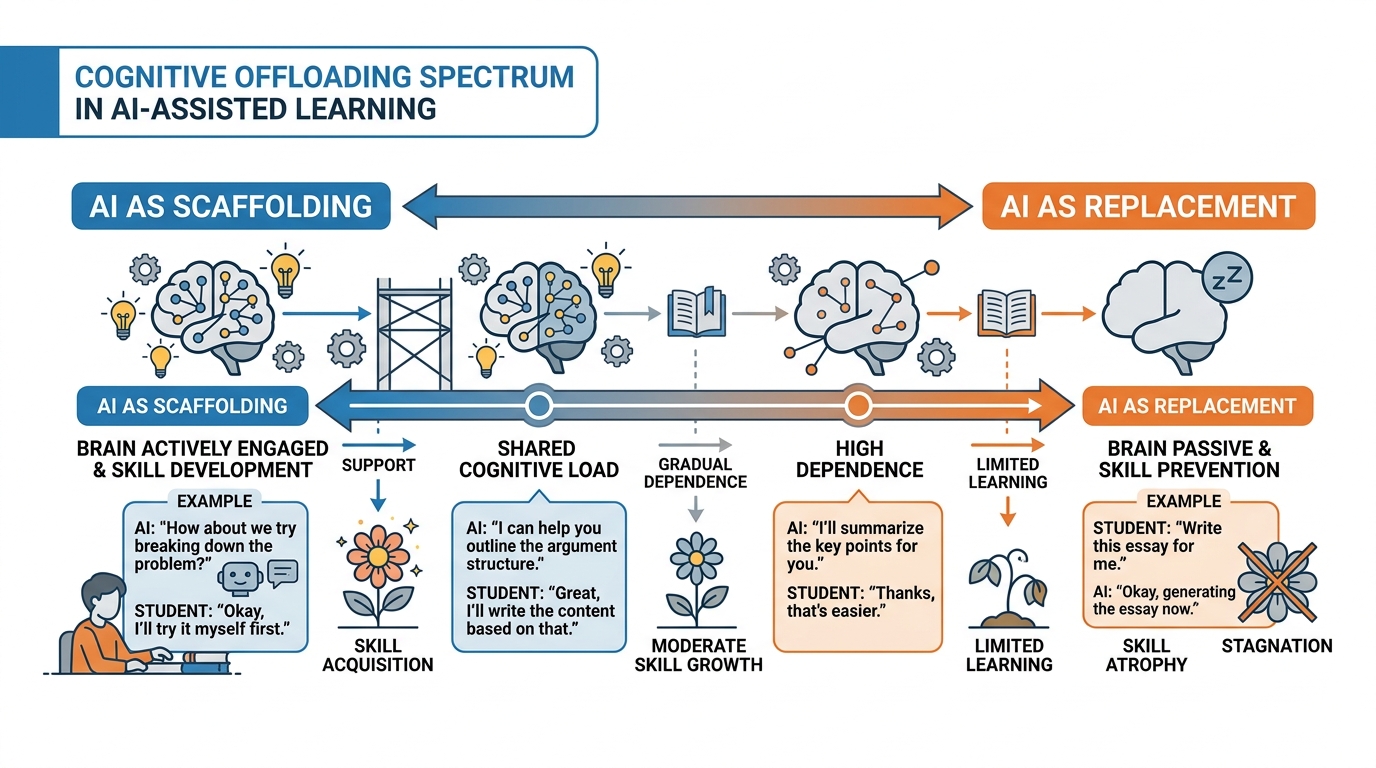

The most serious learning risk from AI is cognitive offloading — students using AI to complete cognitive tasks that they need to practice to develop genuine competence.

Writing is not merely a way of communicating ideas that already exist in one’s head; it is a process through which thinking is developed, refined, and made more precise. Students who use AI to generate the first draft of every paper may submit more polished final products while developing weaker writing ability. The psychological and neuroscientific research on cognitive offloading suggests that outsourcing a cognitive task to an external system reduces the likelihood of the associated neural consolidation that constitutes learning.

This has profound implications for assignment design. The question faculty should ask is not “can students use AI on this assignment?” but “what cognitive processes do I want students to develop through this assignment, and does AI use support or undermine those processes?”

Figure 5:Figure 2.5: The cognitive offloading spectrum. AI assistance exists on a continuum from scaffolding (which supports skill development by providing structured help that students gradually need less) to replacement (which prevents skill development by removing the cognitive challenge entirely). Assignment design determines where on this spectrum a given AI use falls.

3.42.3.4 Academic Integrity in the AI Era¶

Academic integrity is perhaps the most discussed and least satisfactorily resolved dimension of AI in higher education. The fundamental challenge is structural: AI-generated text is currently indistinguishable from human-written text by any reliable automated method.

AI Detection Tools: A False Promise

Tools like Turnitin AI Detection, GPTZero, and Copyleaks claim to identify AI-generated text. The research evidence on their accuracy is troubling:

False positive rates (incorrectly identifying human-written text as AI-generated) range from 1-10% in research studies — high enough that students can be wrongly accused of academic dishonesty

False negative rates (failing to detect AI-generated text) are significant for revised or mixed AI-human text

Non-native English speakers are flagged at significantly higher rates than native speakers, creating potential discriminatory application

Paraphrasing tools can transform AI-generated text in ways that defeat detection

The consensus among educational researchers as of 2025-2026 is that AI detection tools should not be used as the sole or primary basis for academic integrity determinations.

The Policy Landscape

Higher education institutions have adopted dramatically different approaches to AI policy:

Blanket prohibition policies (some institutions ban all AI use in all academic work) have proven largely unenforceable and are increasingly viewed as educationally counterproductive, producing students who cannot use AI effectively for professional work.

Assignment-specific policies (faculty specify AI permissions on each assignment) are the most common approach but create confusion when policies differ between courses and require significant faculty professional development.

AI disclosure and citation requirements (students must disclose and cite AI use as they would any other source) treat AI as a legitimate tool that must be used transparently.

AI-native assignment redesign (designing assignments specifically to leverage rather than prohibit AI) is the most pedagogically ambitious approach and is gaining traction among learning design specialists.

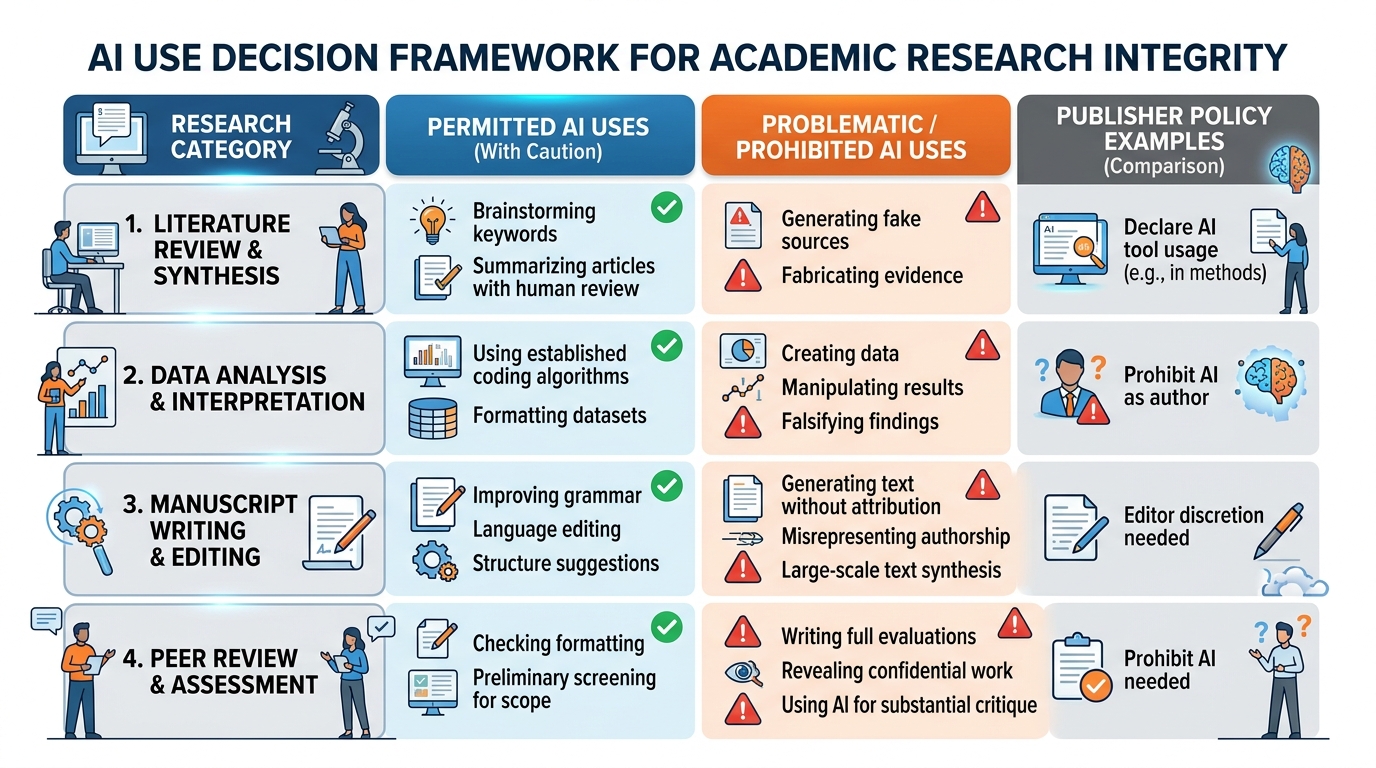

42.4 AI-Powered Research: Transforming Scholarship¶

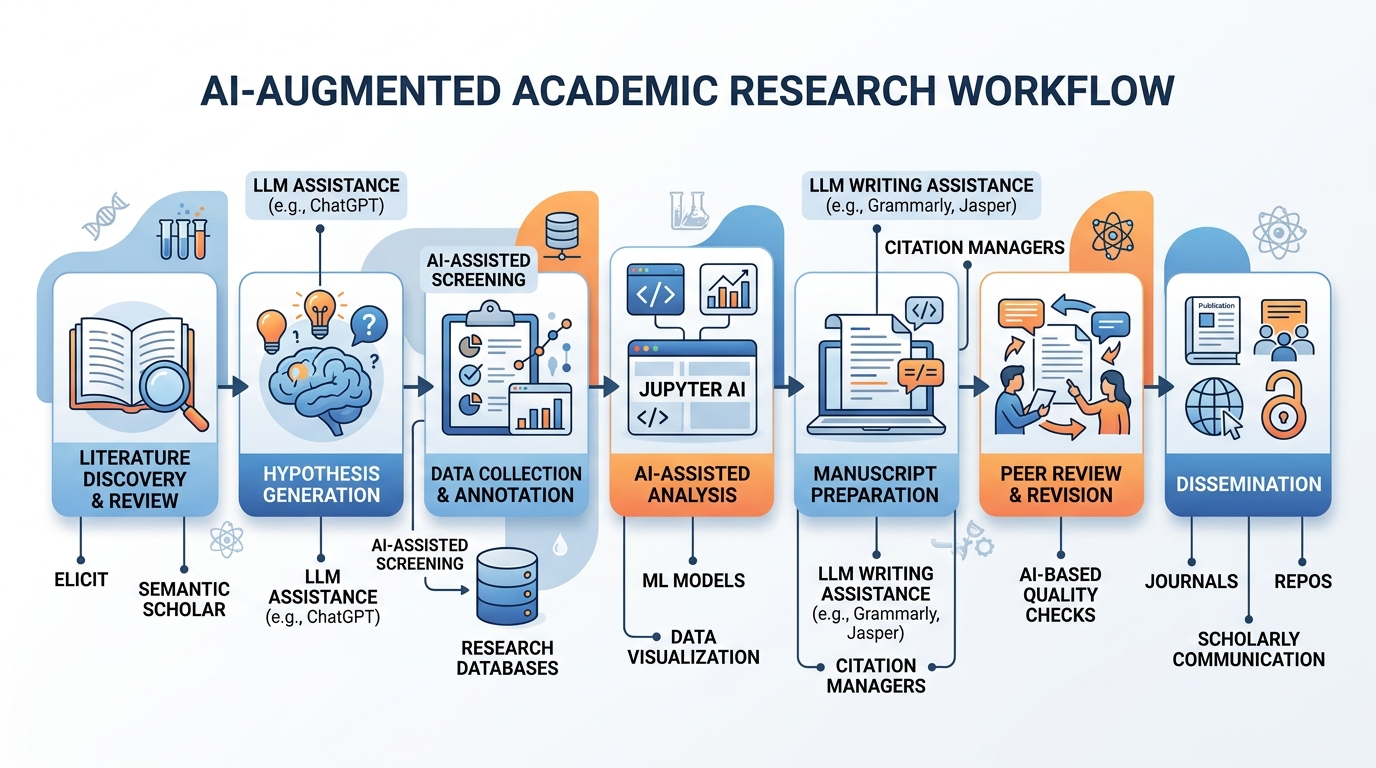

The impact of generative AI on academic research may be the most transformative and least publicly discussed dimension of AI in higher education. AI is changing every stage of the research process — from literature discovery to data analysis to manuscript preparation.

Figure 6:Figure 2.6: The AI-augmented research workflow. Generative AI tools are now integrated across every stage of the research process, from literature discovery (Elicit, Consensus, Semantic Scholar) to data analysis (Code Interpreter, Jupyter AI) to manuscript preparation (Claude, GPT-4) to peer review support. Each stage raises distinct questions about attribution, accuracy, and research integrity.

4.12.4.1 Literature Discovery and Synthesis¶

Academic literature has grown beyond the capacity of any individual researcher to track comprehensively. PubMed alone adds over 4,000 new biomedical research papers every day. AI-powered research tools are addressing this challenge:

Elicit uses language model capabilities to help researchers find relevant papers, extract key information, and synthesize findings across multiple studies. A researcher can ask Elicit “What is the current evidence on the effectiveness of spaced repetition for foreign language vocabulary acquisition?” and receive a structured synthesis of relevant literature with paper-by-paper breakdowns.

Semantic Scholar uses NLP to identify conceptual relationships between papers, enabling citation graph analysis and discovery of thematically related work that traditional keyword search would miss.

ResearchRabbit builds dynamic literature maps that update automatically as new relevant papers are published, enabling researchers to stay current in their fields without manual monitoring.

Consensus uses AI to answer research questions by synthesizing findings from peer-reviewed studies, providing confidence levels and noting when scientific consensus is strong or contested.

The Systematic Review Revolution

Systematic reviews — comprehensive syntheses of research evidence on specific questions — typically take 18-24 months to complete and require teams of researchers. AI is beginning to compress this timeline dramatically. A 2025 study in the Journal of Clinical Epidemiology found that AI-assisted systematic review screening (using AI to make initial inclusion/exclusion decisions on candidate papers) reduced screening time by 65% with no significant loss in review quality, as measured by agreement with gold-standard human screening.

4.22.4.2 Data Analysis and Code Generation¶

For quantitative researchers, AI code generation has become a transformative productivity tool. Researchers who previously needed to write complex statistical analysis code from scratch can now describe their analysis in natural language and receive working code in Python, R, or MATLAB.

Jupyter AI integrates LLM capabilities directly into Jupyter Notebook environments, enabling researchers to write code through natural language prompts, get explanations of unfamiliar functions, and debug errors by describing the problem.

GitHub Copilot is widely used by computational researchers for code completion, function generation, and documentation. Studies of Copilot use among academic researchers suggest productivity improvements of 30-50% for common coding tasks.

Qualitative Research Applications

AI is also transforming qualitative research methods. LLMs are being used to assist with thematic analysis (identifying patterns across large bodies of interview transcripts), grounded theory coding (generating initial codes for qualitative data), and mixed-methods integration (synthesizing findings across quantitative and qualitative data sources).

However, qualitative researchers have raised important concerns about AI use in qualitative analysis: the risk that AI applies majority-culture conceptual frameworks that miss culturally specific patterns, the loss of the interpretive reflexivity that gives qualitative research its epistemological strength, and the possibility that AI “thematic analysis” produces a simulacrum of qualitative findings rather than genuine insight.

4.32.4.3 Research Writing and Manuscript Preparation¶

The use of AI for manuscript preparation is widespread and deeply contested. A 2025 survey by Springer Nature found that 72% of academic researchers used AI in some capacity for manuscript preparation — including brainstorming, outline development, literature review drafting, abstract writing, and revision.

What publishers are permitting: Most major publishers (Elsevier, Springer Nature, Wiley, Taylor & Francis, Nature Portfolio) permit AI assistance for writing but require disclosure. The guidelines generally prohibit listing AI as an author (AI cannot take responsibility for a paper the way human authors can) and require that human authors verify all AI-generated content for accuracy.

The attribution question: When an AI system substantially assists in generating research findings or text, current attribution norms are inadequate. If Elicit synthesizes the literature that forms the foundation of a literature review, and Claude helps draft the review, and the researchers then validate and revise the output — who (or what) has contributed intellectually to the work? The research community is actively developing new attribution norms, but consensus is far from established.

Figure 7:Figure 2.7: AI use in academic research — navigating the integrity landscape. Publisher policies vary but share common principles: AI must be disclosed, AI cannot be listed as an author, and human authors are fully responsible for the accuracy of AI-assisted content. The framework maps permitted and problematic uses across key research activities.

52.5 Assessment Redesign for the AI Era¶

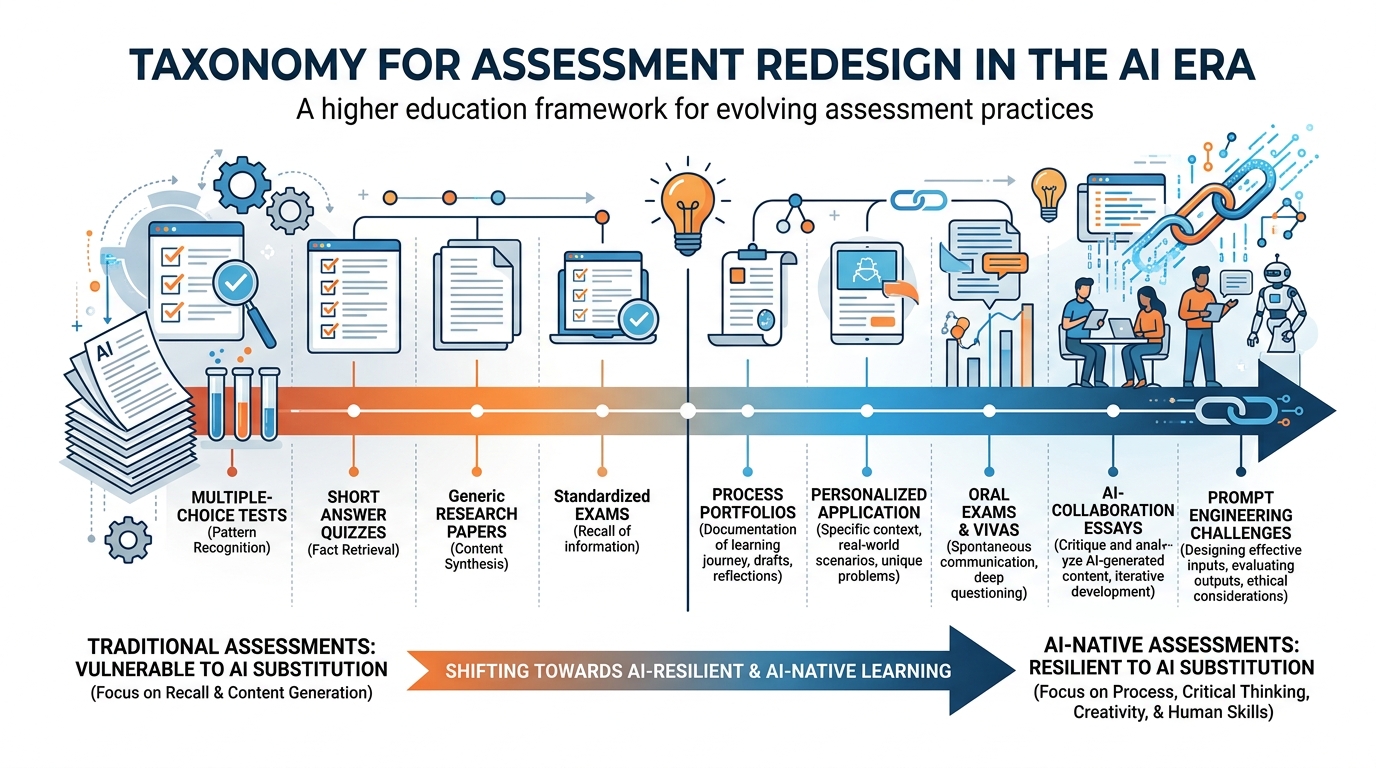

The most urgent practical challenge for faculty in the AI era is assessment redesign — creating evaluation structures that remain valid, meaningful, and resistant to AI substitution while leveraging AI’s genuine pedagogical potential.

Figure 8:Figure 2.8: Assessment redesign taxonomy for the AI era. Traditional single-submission written assignments are highly vulnerable to AI substitution. AI-native assessments incorporate AI as part of the learning process while maintaining genuine learning objectives. The goal is not AI-proofing but pedagogical intentionality.

5.12.5.1 Assessment Approaches That Remain Valid¶

Several assessment approaches maintain their validity in the AI era:

Process-based assessment: Evaluating not just the final product but the documented process — drafts with revision history, annotated bibliographies, process journals, recorded think-alouds. AI can fake a final product but cannot easily fake an authentic creative process.

Personalized application: Assignments that require students to apply course concepts to their own specific experiences, workplaces, communities, or research interests. An assignment asking students to analyze “how the concepts from this week’s readings apply to a specific challenge in your current workplace” cannot be completed by AI alone.

Current events integration: Assignments requiring engagement with events that occurred after AI training data cutoffs, or with locally specific information not available in AI training data.

Oral and multimodal assessment: In-person oral examinations, presentations with Q&A, video explanations recorded under observation, and studio critiques all provide forms of evidence of student understanding that AI cannot fake in real-time.

Collaborative assessment with individual accountability: Group projects with documented individual contributions, combined with individual reflection components that demonstrate personal learning.

5.22.5.2 AI-Native Assessment Design¶

The most forward-thinking assessment approach is AI-native design — treating AI as part of the assessment context rather than an external threat to be excluded:

AI collaboration essays: Students complete an assignment using AI assistance and then write a critical reflection analyzing the AI’s contributions, limitations, errors, and the student’s own interventions and corrections. This demonstrates both subject matter knowledge and AI critical literacy.

AI critique assignments: Students use AI to generate initial content and then systematically critique, fact-check, improve, and annotate it — demonstrating their mastery of the subject matter through their ability to identify AI weaknesses.

Prompt engineering challenges: Students compete to create the best AI prompt for a specific educational goal, demonstrating their understanding of both the subject matter and how to operationalize it effectively.

Human-AI comparison analysis: Students complete an assignment both with and without AI assistance and then analyze the differences — what the AI got right, what it missed, what they contributed that AI could not.

62.6 Faculty Development and Institutional Support¶

The integration of AI into teaching, learning, and research requires significant institutional investment in faculty development — not just technical training, but pedagogical rethinking.

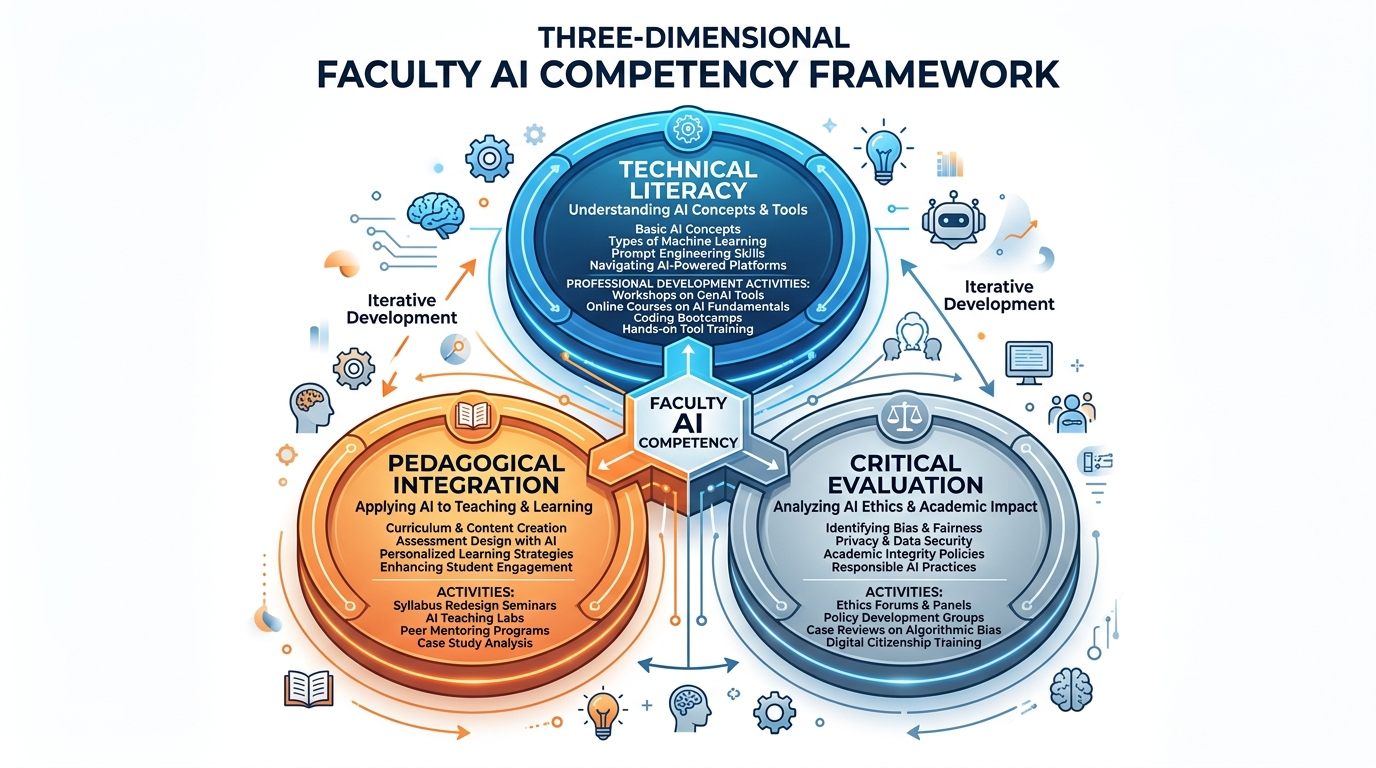

6.12.6.1 The Faculty AI Competency Framework¶

A growing number of institutions are developing frameworks for faculty AI competency that go beyond simple tool tutorials. Effective frameworks address three dimensions:

Technical literacy: Understanding what AI can and cannot do, how to use major AI tools effectively, and how to prompt effectively for educational purposes.

Pedagogical integration: Knowing when and how to incorporate AI into course design and assessment in ways that serve learning objectives rather than undermine them.

Critical evaluation: Ability to assess AI outputs for accuracy, bias, and appropriateness; to understand the limitations and risks of specific AI applications; and to communicate these to students.

Figure 9:Figure 2.9: The faculty AI competency framework. Effective professional development addresses three interconnected dimensions: technical literacy (using AI tools), pedagogical integration (designing AI into courses effectively), and critical evaluation (assessing AI outputs and educating students about AI limitations). Point solutions in just one dimension are insufficient.

6.22.6.2 Institutional Support Structures¶

Institutions that are navigating the AI transition most successfully have invested in several key support structures:

Centers for Teaching and Learning (CTLs) with dedicated AI in education expertise. Several large universities have added full-time AI Pedagogy Specialists to their CTL teams — educators who can consult with faculty on course redesign, assessment reform, and AI tool selection.

Faculty learning communities focused on AI in education. Peer learning among faculty — sharing assignments that work, discussing challenges, developing shared AI policies — is highly effective and relatively low-cost.

Shared AI guidelines and policy templates that give faculty a starting point for their own course policies, rather than requiring each faculty member to independently develop AI policy from first principles.

Rapid prototyping funds that support faculty who want to pilot AI integration with their courses, with structured evaluation and reporting back to the broader community.

6.32.6.3 Case Study: Arizona State University’s AI Integration Initiative¶

Arizona State University (ASU) has been among the most aggressive and systematic institutions in higher education for AI integration. Their approach is worth examining in detail.

In 2023, ASU became one of the first major universities to negotiate an institutional enterprise agreement with OpenAI, providing ChatGPT Enterprise access to all faculty, staff, and graduate students with enhanced privacy protections. They simultaneously launched:

An AI Innovation Challenge that funded 37 faculty-led AI integration projects across disciplines

An AI Pedagogy Certificate Program through their Center for Education Through Exploration, completed by over 800 faculty by 2025

A Student AI Literacy requirement integrated into their general studies curriculum

A University AI Council that coordinates policy, governance, and strategic planning

ASU’s AI integration philosophy, as articulated by their leadership, is explicitly expansive: rather than trying to limit AI use, they aim to ensure that all ASU graduates are proficient in working effectively with AI tools — treating AI literacy as an essential 21st century competency comparable to statistical literacy or writing.

Results as of 2025: Student satisfaction with technology tools increased 12 percentage points; faculty reporting that AI tools “meaningfully improved” their courses increased from 21% in 2023 to 58% in 2025; and ASU has become a nationally recognized resource for other institutions developing AI integration strategies.

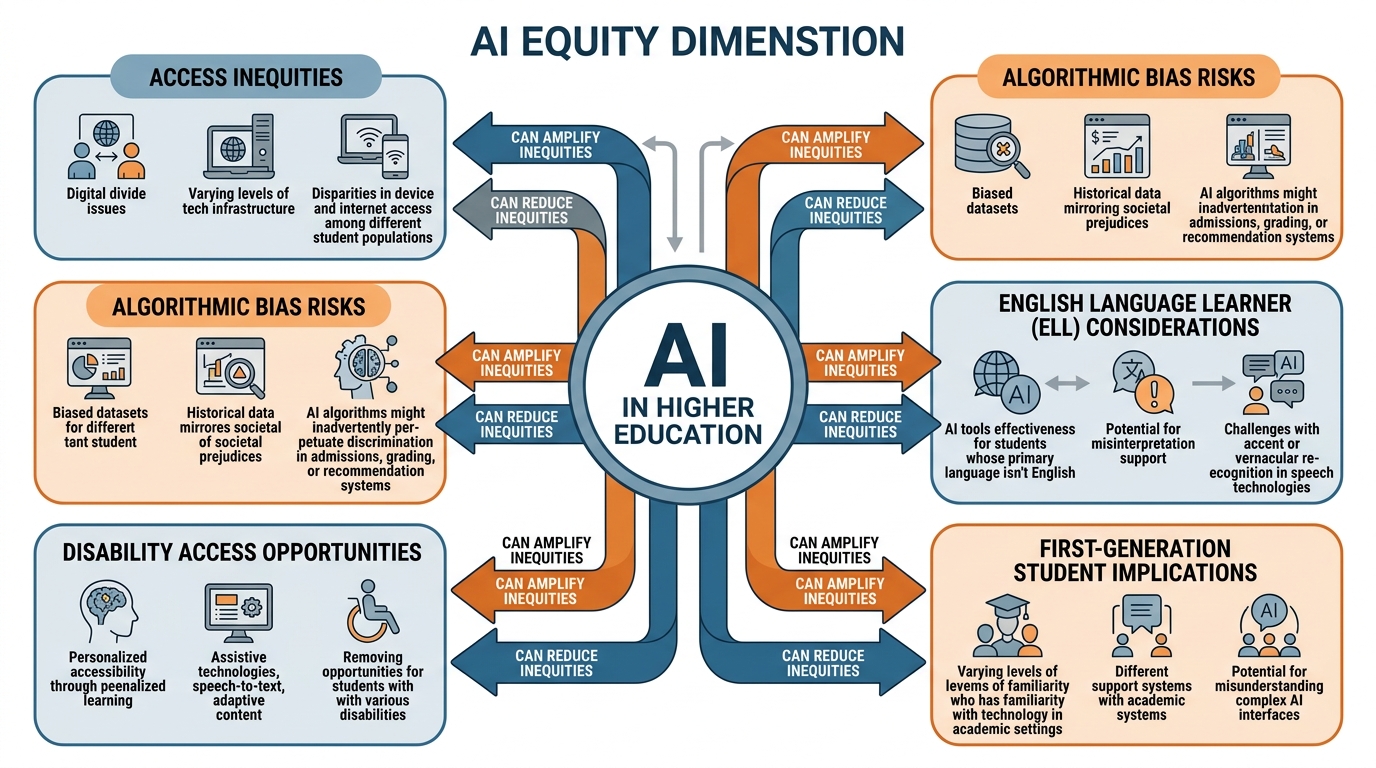

72.7 Equity and Access in AI-Augmented Education¶

The equity dimensions of AI in teaching and learning are both an opportunity and a risk, and institutions must engage them with intentionality.

Figure 10:Figure 2.10: The AI equity framework for higher education. AI in education can either reduce or amplify existing inequities depending on how it is designed, deployed, and supported. The framework maps key equity dimensions that institutions must address proactively to ensure AI-enhanced education serves all students.

7.12.7.1 AI as an Equalizer¶

AI has genuine potential to reduce educational inequities:

Tutoring at scale: High-quality tutoring has historically been accessible primarily to affluent students who could afford private tutors. AI tutoring, if well-designed and accessible, could provide every student with individualized academic support.

Writing support for English language learners: AI writing feedback tools that provide grammar, style, and clarity suggestions help multilingual students develop academic English without stigma — they can receive immediate, private feedback on drafts rather than waiting for faculty office hours.

Accessibility features: AI-powered captioning, screen reading, text-to-speech, and image description tools significantly improve educational access for students with disabilities. Canvas’s AI accessibility scanner helps faculty identify and fix accessibility barriers in course materials before they affect students.

24/7 availability: AI support tools are available at midnight when a student is struggling with homework and their instructor’s office hours are 36 hours away. For working students, single parents, and students in different time zones, this temporal flexibility is significant.

7.22.7.2 AI as an Amplifier of Inequity¶

AI tools can amplify existing inequities if deployed without attention to equity:

Device and connectivity requirements: Sophisticated AI tools require reliable broadband and capable devices. Students without these — disproportionately low-income, rural, and community college students — are excluded.

Language and dialect bias: AI writing feedback tools trained primarily on standard academic English may penalize students who write in African American Vernacular English, provide less accurate feedback for multilingual writers, or fail to recognize rhetorical traditions from non-Western cultures.

AI literacy gap: Students from well-resourced schools may arrive with significantly more experience with AI tools than first-generation students from under-resourced schools — creating an AI literacy gap on top of existing preparation gaps.

Data privacy risks for vulnerable students: AI tools that process student data may raise particular privacy concerns for undocumented students, students in mental health treatment, and others whose data exposure carries real risks.

8Summary¶

Generative AI is not a threat to higher education — it is a transformation of higher education, one that requires institutions to engage with clarity, intentionality, and a genuine commitment to equity.

The chapter has examined AI’s impact across four domains:

Teaching and course design: AI accelerates content creation, enables unprecedented personalization, and creates new possibilities for formative feedback at scale — if faculty invest in learning to use these tools effectively.

Student learning: AI tutoring shows genuine promise for democratizing personalized support, but cognitive offloading risks require careful attention to assignment design and AI literacy education.

Academic research: AI is accelerating literature discovery, data analysis, and manuscript preparation — requiring new norms for attribution, disclosure, and quality assurance.

Assessment: The most urgent faculty challenge is redesigning assessments that remain valid in the AI era — either by making them genuinely resistant to AI substitution or by making AI use part of the assessment itself.

The institutions navigating this transformation most successfully are investing not just in technology but in pedagogy — faculty development, governance, and the patient, evidence-informed work of understanding how AI can serve educational missions rather than merely streamline administrative processes.

9Key Terms¶

Large Language Model (LLM) A class of AI model trained on massive text corpora to predict and generate language. LLMs like GPT-4, Claude, and Gemini underlie most contemporary generative AI applications in education.

Hallucination The tendency of LLMs to generate plausible-sounding but factually incorrect information, including fake citations, incorrect statistics, and fabricated historical events. A critical limitation for educational applications.

Cognitive Offloading The practice of using external systems to perform cognitive tasks that would otherwise be done internally. AI-enabled cognitive offloading can support learning (scaffolding) or undermine it (replacement), depending on the task and how the tool is used.

AI Tutoring AI systems designed to provide personalized, interactive academic instruction and support, ideally using Socratic questioning and adaptive sequencing to guide students toward understanding rather than simply providing answers.

Formative Assessment Ongoing evaluation of student learning during the learning process (as opposed to summative assessment at the end), intended to guide instruction and provide students with feedback for improvement. AI can dramatically scale formative assessment.

AI Detection Tools Software claiming to distinguish AI-generated text from human-written text. Current tools have significant false positive and false negative rates and are not recommended as sole evidence for academic integrity determinations.

Prompt Engineering The practice of designing effective natural language instructions for AI systems to produce desired outputs. An emerging professional skill increasingly relevant for educators, researchers, and students.

AI-Native Assessment Assessment designs that incorporate AI as part of the learning and evaluation process rather than treating AI as an external threat to be excluded. Examples include AI collaboration essays, AI critique assignments, and prompt engineering challenges.

Khanmigo Khan Academy’s AI tutoring system, built on LLMs, that uses Socratic questioning to guide students toward understanding rather than providing direct answers. Among the most widely deployed AI tutoring tools in education.

Academic Integrity The set of values and practices that ensures academic work represents students’ genuine learning and effort. AI poses new challenges to academic integrity that require policy, assignment design, and cultural responses.

Systematic Review A structured synthesis of all available research evidence on a specific question, following explicit methodological protocols. AI is beginning to dramatically accelerate systematic review processes, particularly literature screening.

Elicit An AI-powered research tool that helps researchers discover, screen, and synthesize academic literature using natural language queries and structured data extraction.

Digital Equity Equal access to digital tools and the skills to use them effectively. A critical consideration in AI deployment, as unequal access to AI tools can amplify existing educational inequities.

Faculty Learning Community A structured peer learning group in which faculty from across disciplines engage in collaborative investigation of a shared pedagogical challenge. An effective professional development model for AI integration.

10Discussion Questions¶

The Authenticity Paradox: A student uses Claude to draft the first version of a major research paper, then revises it substantially, fact-checks all claims, adds their own analysis, and produces a final product that genuinely reflects their understanding of the subject. Has academic integrity been violated? What does your answer reveal about what academic writing assignments are actually for?

Equity in AI Tool Access: An institution implements an enterprise ChatGPT agreement that provides access to all enrolled students with an institutional email. A significant percentage of their community college students, however, have unreliable broadband and use only smartphones for coursework. What additional steps should the institution take to ensure equitable AI access? Who bears responsibility for the digital divide?

The Hallucination Liability Question: A third-year student submits a research paper citing three papers generated by AI — papers that do not exist, though the citations look plausible. The student claims not to have known AI could generate fake citations. What responsibility does the institution bear for AI literacy education? What responsibility does the faculty member bear for instruction about AI limitations?

Research Integrity in the AI Era: A computational biologist uses Elicit to conduct the literature review for a grant proposal, uses Jupyter AI to help write the analysis code, and uses Claude to help draft the significance section of the proposal. How much of this should be disclosed? To whom? Does the source of the proposal matter (federal grant, private foundation, internal grant)?

Faculty Development Design: You are the Director of the Center for Teaching and Learning at a mid-sized regional university. Design a faculty AI professional development program that could be completed in one semester. What are the key learning objectives? What format would you use? How would you measure success?

Discussion Guidelines

For your initial post:

Provide a 300-500 word response that goes beyond surface-level analysis to engage with the underlying pedagogical, ethical, or institutional dimensions of the question.

Include at least one citation from a scholarly source or credible professional publication (EDUCAUSE, The Chronicle, peer-reviewed educational research).

Take a clear position where the question calls for one, and defend it.

For your peer responses:

Respond substantively to at least TWO peers (150+ words each).

Engage critically: find a point of agreement and a point where you see a gap, risk, or alternative perspective.

“Great point!” is not a substantive response.

11Exercises¶

11.1Exercise 2.1 — AI Audit Your Own Learning¶

Over one week, document every instance in which you use a generative AI tool for any academic or professional purpose. For each instance, record: the tool used, the task, whether you used it to scaffold your own work or to replace your own work, whether the AI output required significant revision, and your honest assessment of whether the AI use supported or undermined your learning. At the end of the week, write a 500-word reflection on what your audit reveals about your relationship with AI as a learner.

11.2Exercise 2.2 — Assignment Redesign Workshop¶

Select a traditional written assignment from a course in your discipline — ideally one that could easily be completed using ChatGPT or Claude without significant learning. Redesign the assignment in two ways:

Make it more AI-resistant by incorporating elements that AI cannot fake (personal experience, real-time events, in-person demonstration, etc.)

Make it AI-native by explicitly incorporating AI use as part of the assignment and focusing evaluation on the student’s critical engagement with AI outputs

Compare the two redesigned versions: what are the trade-offs? What do they reveal about the learning objectives of the original assignment?

11.3Exercise 2.3 — AI Tutor Comparison¶

Using the same undergraduate-level concept from your discipline, evaluate three different AI tools as tutors: ChatGPT, Claude, and Khanmigo (free educational version). Ask each to explain the concept, answer a follow-up question that reveals a common misconception, and suggest a practice problem. Evaluate each on: accuracy, pedagogical approach (Socratic vs. direct instruction), adaptation to follow-up, and clarity. Write a 400-word comparative analysis.

11.4Exercise 2.4 — Policy Analysis¶

Collect the AI use policies from five institutions: two R1 research universities, one liberal arts college, one community college, and one HBCU. Analyze the policies across dimensions including: scope (course-level vs. institution-level), approach (prohibition vs. disclosure vs. integration), student and faculty guidance quality, enforcement mechanisms, and review/update processes. What do the differences reveal about institutional values, risk tolerance, and pedagogical philosophy?