Strategic AI Transformation — Governance, Ethics, and the Future

Building Institutional Frameworks for Responsible AI Leadership

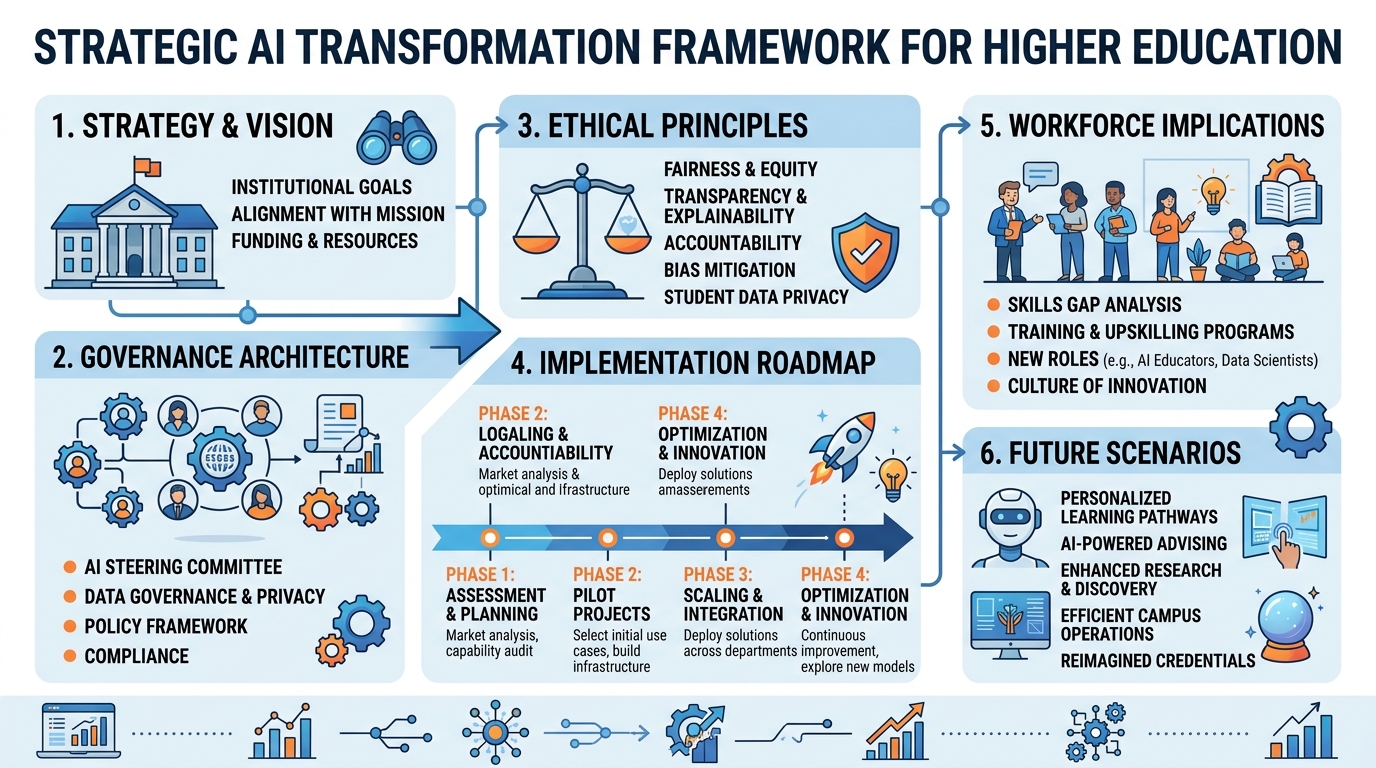

Figure 3.1: Chapter 3 Overview. Strategic AI transformation in higher education requires more than technology deployment; it demands governance architecture, ethical frameworks, workforce development, change management discipline, and a clear institutional vision for what an AI-native university means in practice.

Institutions that treat generative AI as a technology problem to be solved by the IT department will fail to capture its transformational potential — and may succeed only in adding to their technical debt. The universities and colleges that emerge from this era of AI innovation as stronger, more mission-aligned, more effective institutions will be those that approach AI as a strategic, organizational, and ethical challenge requiring leadership at the highest levels.

This chapter provides the strategic and governance frameworks that institutional leaders — presidents, provosts, CIOs, deans, and faculty governance leaders — need to navigate AI transformation responsibly. It addresses four dimensions of strategic AI leadership: governance architecture, ethical frameworks and responsible AI, workforce and organizational implications, and the long-range vision of the AI-native institution.

3.1 Strategic AI Planning: From Aspiration to Architecture

The gap between aspirational AI statements (“We will be an AI-powered institution”) and actionable strategy is where most institutional AI efforts founder. Translating aspiration into architecture requires a structured planning approach that connects institutional mission to specific AI initiatives with clear accountability, resourcing, and success metrics.

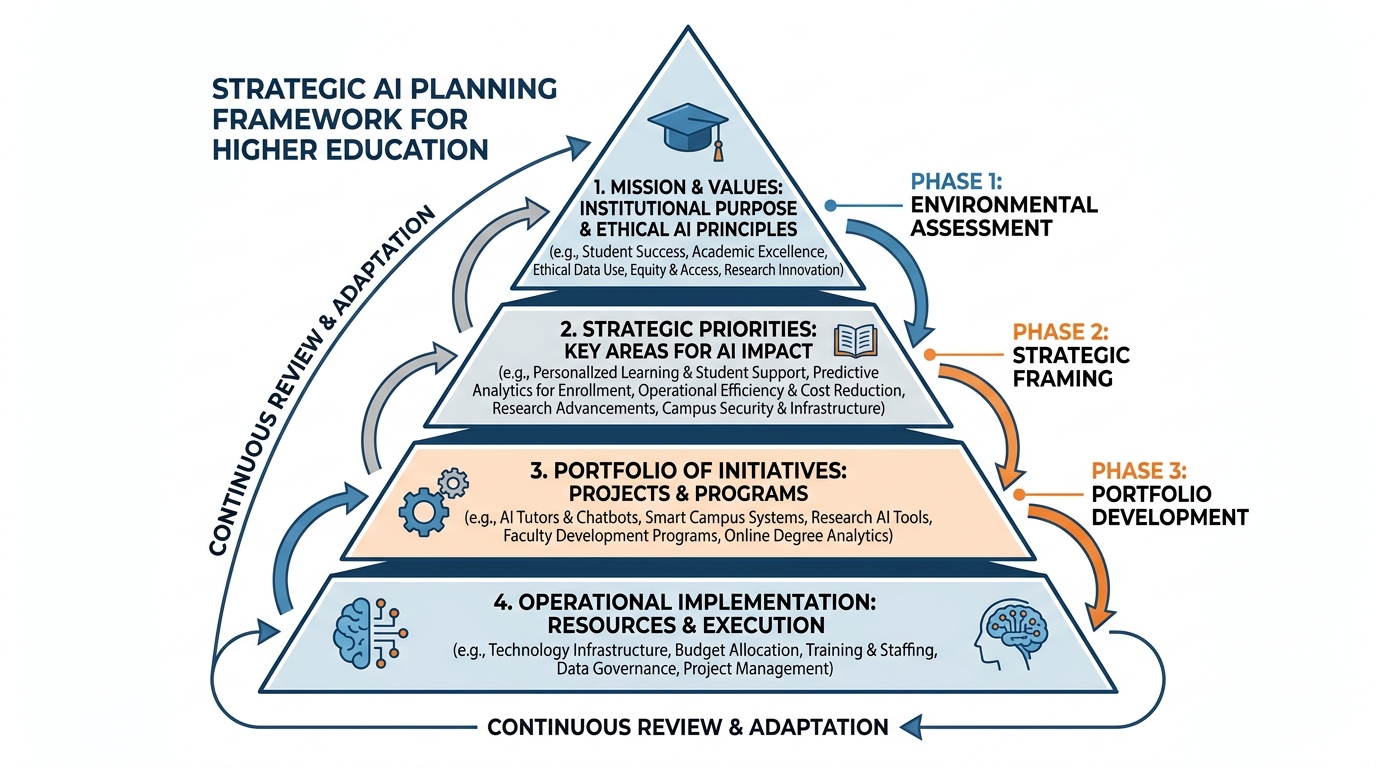

Figure 3.2: The Strategic AI Planning Framework for higher education. Effective AI strategy is not a technology plan; it is a mission-driven, evidence-informed, stakeholder-grounded process that connects institutional values to specific initiatives with clear accountability and success metrics. The four-layer model ensures alignment from mission to operations.

3.1.1 The Strategic AI Planning Process

Effective institutional AI planning follows a structured process:

Phase 1: Environmental Assessment (Months 1-2)

Before developing strategy, institutions must understand their current state:

Technology audit: What AI tools are already in use, authorized and unauthorized? What is the existing data infrastructure? What are the integration capabilities of current systems?

Capacity assessment: What AI expertise exists among faculty, staff, and administration? What professional development has occurred? What AI governance structures are in place?

Stakeholder mapping: Who are the key stakeholders — faculty advocates, skeptics, student voices, board members — and what are their concerns and aspirations?

Risk inventory: What are the primary risks associated with AI adoption (academic integrity, data privacy, bias, cybersecurity, workforce displacement) and what is the current mitigation posture?

Peer benchmarking: What are peer and aspirational peer institutions doing? What can be learned from early adopters?

Phase 2: Strategic Framing (Months 2-3)

Strategic framing translates the environmental assessment into a set of strategic priorities:

What problems are most urgently addressable through AI? (Student retention? Faculty efficiency? Administrative costs? Research acceleration?)

What AI capabilities are most aligned with the institution’s specific mission and strengths?

What is the institution’s risk appetite — how experimental is it willing to be?

What are the non-negotiables — values, commitments, or ethical lines that AI adoption must not cross?

Phase 3: Portfolio Development (Months 3-6)

A strategic AI portfolio organizes initiatives across a two-dimensional matrix:

Time horizon: Near-term (deployable in 6-12 months with existing technology), medium-term (12-24 months, requiring some infrastructure development), long-term (24+ months, requiring significant organizational change)

Impact: Operational efficiency, educational quality, research capability, student success, institutional resilience

The portfolio approach prevents the common failure mode of pursuing AI initiatives opportunistically — deploying whatever vendors are selling — without a coherent theory of change.
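The two-dimensional matrix described above can be sketched as a small data structure. This is a minimal illustration, not a prescribed tool: the initiative names are hypothetical, and the boundary handling (exactly 12 months counts as near-term, exactly 24 as medium-term) is an assumption, since the horizons as stated overlap at their edges.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Initiative:
    name: str
    months_to_deploy: int  # estimated months until the initiative is deployable
    impact: str            # one of the impact categories listed above


def horizon_for(months: int) -> str:
    """Map an estimated deployment time onto the three time horizons.

    Assumption: boundaries are inclusive on the upper end
    (12 -> near-term, 24 -> medium-term).
    """
    if months <= 12:
        return "near-term"
    if months <= 24:
        return "medium-term"
    return "long-term"


def build_portfolio(initiatives):
    """Group initiative names into (time horizon, impact) cells."""
    matrix = defaultdict(list)
    for item in initiatives:
        cell = (horizon_for(item.months_to_deploy), item.impact)
        matrix[cell].append(item.name)
    return dict(matrix)


# Hypothetical initiatives, for illustration only.
portfolio = build_portfolio([
    Initiative("AI-enhanced advising pilot", 9, "student success"),
    Initiative("Help desk chatbot", 6, "operational efficiency"),
    Initiative("Research computing AI platform", 30, "research capability"),
])

for (horizon, impact), names in sorted(portfolio.items()):
    print(f"{horizon:12} | {impact:24} | {', '.join(names)}")
```

Laying initiatives out this way makes empty cells visible, which is one simple check against a portfolio that is all near-term efficiency projects and nothing else.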

3.1.2 The AI Strategy Document

The deliverable of the planning process is an institutional AI strategy document. Effective AI strategy documents share common elements:

Mission alignment statement: How does AI support the institution’s core educational and research mission?

Strategic objectives: 3-5 measurable outcomes the institution aims to achieve through AI (e.g., “Increase first-year student retention by 5 percentage points through AI-enhanced advising by 2028”)

Portfolio of initiatives: Specific AI programs with owners, timelines, budgets, and success metrics

Governance architecture: How AI decisions will be made, who has authority, and how stakeholders participate

Ethical framework: The principles that will guide AI adoption and the mechanisms for enforcing them

Resource requirements: Technology, staffing, and professional development investments needed

Risk management: Identified risks and mitigation strategies

Communication and change management plan: How the strategy will be socialized and implemented

3.2 AI Governance Architecture

Governance — the structures, processes, and accountabilities that determine how AI decisions are made — is the most consequential institutional design challenge in the AI era. Institutions that invest in thoughtful governance before deploying AI at scale will avoid costly mistakes; those that deploy first and govern later will accumulate technical, reputational, and ethical debt.

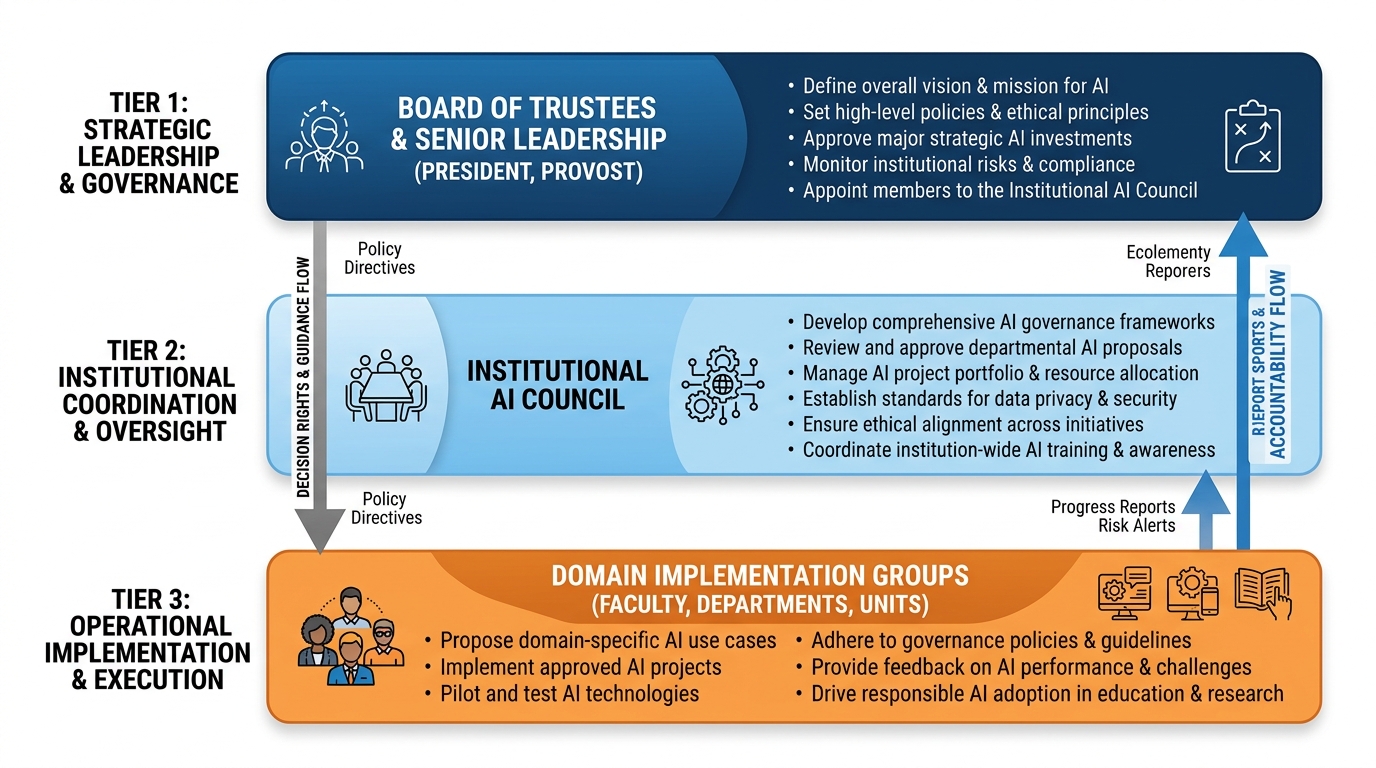

Figure 3.3: The three-tier AI governance architecture for higher education. Effective governance distributes decision rights appropriately: the board ensures strategic alignment and risk oversight; the institutional AI council coordinates policy, procurement, and cross-domain initiatives; and operational groups implement within policy frameworks. Each tier has distinct responsibilities and accountabilities.

3.2.1 The Three-Tier Governance Model

Tier 1: Board and Executive Leadership

The board of trustees and the president’s cabinet bear ultimate responsibility for institutional AI strategy. Board responsibilities include:

Approving the institutional AI strategy and significant AI investments

Receiving regular briefings on AI risks, opportunities, and policy developments

Ensuring that AI governance reflects the institution’s fiduciary and ethical obligations

Overseeing compliance with relevant regulations (FERPA, state AI legislation, accreditation requirements)

Presidential leadership must establish AI governance as a strategic priority, not delegate it entirely to IT. The president, provost, and chief financial officer must be active participants in AI strategy development.

Tier 2: Institutional AI Council

The Institutional AI Council is the primary governance body for AI decision-making. Composition should include:

Chief Information Officer (chair or co-chair)

Chief Academic Officer (Provost or designee)

Faculty Senate representative(s)

Student Government representative

Chief Financial Officer or designee

Chief Diversity Officer or equity advocate

Research VP or designee

Legal counsel

Marketing/Communications representative (for messaging)

Council responsibilities include:

Reviewing and approving new AI tools before institutional deployment

Developing and updating institutional AI policy

Overseeing AI vendor contracts and data agreements

Monitoring AI system performance, bias, and compliance

Coordinating AI professional development

Reporting to executive leadership and board on AI matters

Tier 3: Domain Implementation Groups

Domain groups implement AI within specific functional areas under policy frameworks established by the Council:

Academic Technology Group: LMS-integrated AI, instructional AI tools, faculty development

Student Success AI Group: AI advising tools, risk prediction, early alert systems

Research Computing Group: Research AI infrastructure, HPC, data science platforms

Administrative AI Group: ERP AI features, automation, HR AI, finance AI

Cybersecurity AI Group: AI-enabled threat detection and security operations

3.2.2 AI Procurement Governance

One of the most consequential governance decisions is how institutions evaluate and procure AI tools. Ad hoc procurement — faculty or departments independently deploying AI tools without institutional review — creates data privacy risks, security vulnerabilities, and equity concerns.

A responsible AI procurement process includes:

Vendor Assessment Criteria:

Data privacy and security: Where is student/employee data processed? Who can access it? What are the retention and deletion policies?

FERPA compliance: Does the vendor’s data processing agreement provide adequate FERPA protections?

Bias and fairness: Has the vendor conducted and published bias audits? Are accuracy metrics available by demographic group?

Accessibility: Does the tool meet WCAG 2.1 AA accessibility standards?

Transparency: Can the vendor explain how the AI makes decisions? Is the algorithm auditable?

Integration: How does the tool integrate with existing institutional systems? Does it support established interoperability standards such as LTI?

Business continuity: What happens to institutional data if the vendor fails or is acquired?

3.2.3 Data Governance for AI

AI systems are only as good as the data they process. Responsible AI governance requires robust data governance — policies and technical controls that ensure institutional data is managed appropriately when fed into AI systems.

Key data governance requirements for AI:

Data inventories: Knowing what data exists, where it lives, and who can access it

Data minimization: Feeding AI systems only the data they need, not entire databases

Consent and transparency: Informing students and employees when their data is used to train or inform AI systems

De-identification: Where possible, using de-identified data for AI training and analytics

Vendor data agreements: Contractual protections ensuring vendors do not use institutional data to train commercial AI models without explicit consent

Data retention limits: Ensuring AI systems do not retain student data beyond appropriate windows
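Two of the requirements above, data minimization and de-identification, lend themselves to a short sketch. The example below is illustrative only: the field names, the allow-list, and the key are hypothetical, and a keyed hash is pseudonymization rather than full de-identification, since the remaining fields may still allow re-identification in small populations.

```python
import hashlib
import hmac

# Hypothetical allow-list; a real one would come from the institution's data inventory.
ALLOWED_FIELDS = {"credits_attempted", "credits_earned", "term_gpa"}

# Placeholder only. In practice the key would live in a managed secret store,
# never in source code.
KEY = b"replace-with-a-managed-secret"


def pseudonymize(student_id: str, secret_key: bytes) -> str:
    """Replace a student ID with a keyed hash (HMAC-SHA256, hex-encoded)."""
    digest = hmac.new(secret_key, student_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the direct identifier for a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["pseudonym"] = pseudonymize(record["student_id"], secret_key)
    return reduced


# Synthetic record, for illustration only.
raw = {
    "student_id": "900123456",
    "name": "Example Student",
    "email": "student@example.edu",
    "credits_attempted": 15,
    "credits_earned": 12,
    "term_gpa": 2.8,
}

safe = minimize_record(raw, KEY)
print(sorted(safe))  # name, email, and the raw ID are no longer present
```

Using a keyed hash rather than a plain hash means someone who knows the ID format cannot simply hash every possible ID to reverse the pseudonyms without also holding the key.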

3.3 Responsible AI: Ethical Frameworks for Higher Education

The ethical dimensions of AI in higher education are not peripheral concerns — they are central to the institution’s educational mission and its obligations to students, employees, and society. Institutions need explicit ethical frameworks that go beyond compliance minimums.

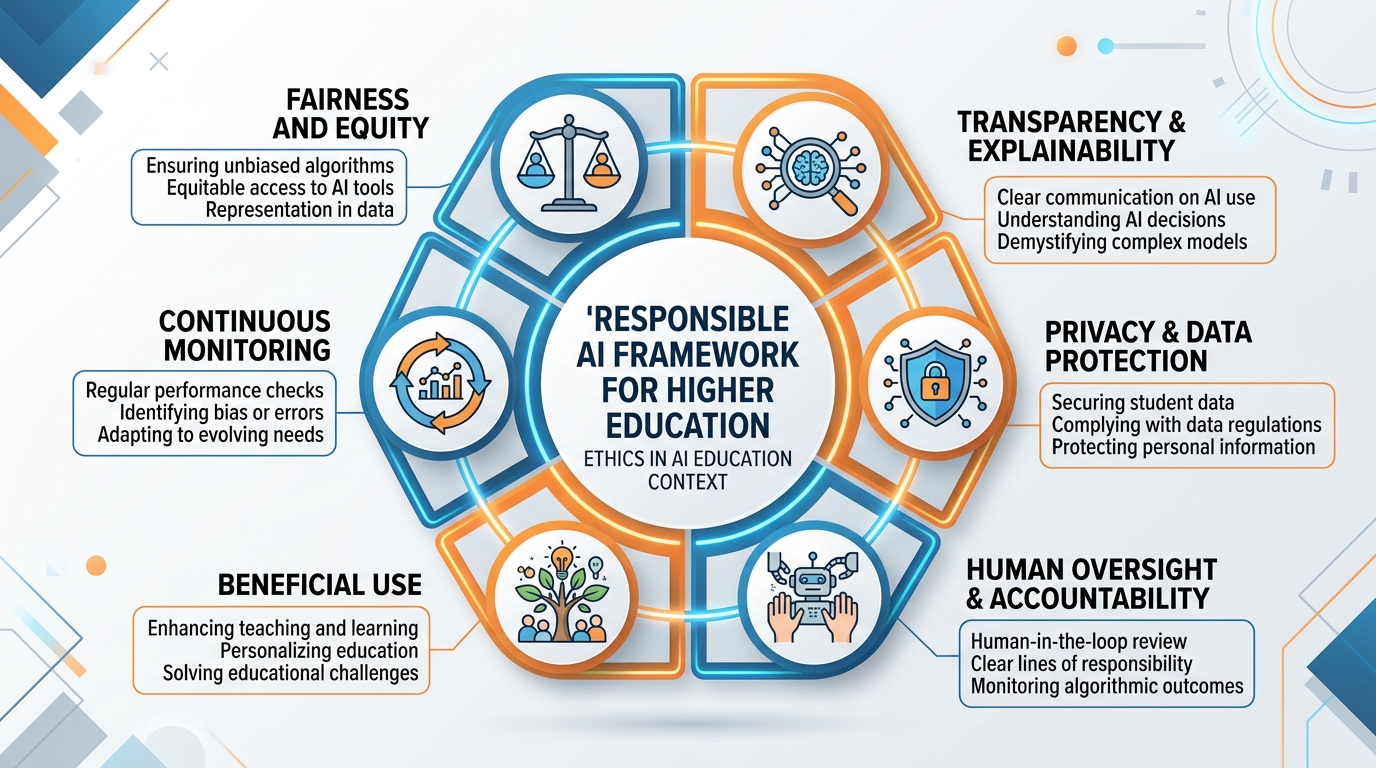

Figure 3.4: The Responsible AI Framework for Higher Education. Six principles (fairness and equity, transparency, privacy, human oversight, beneficial use, and continuous monitoring) provide the ethical architecture for AI governance. Each principle has concrete operational implications for procurement, deployment, and ongoing management.

3.3.1 The Six Principles of Responsible AI in Higher Education

Principle 1: Fairness and Equity

AI systems must be evaluated for differential impacts across student populations. An AI advising system that is 85% accurate for white students but 72% accurate for Black students is not acceptable, even if the overall accuracy is impressive. Equity review should be standard practice before and during AI deployment.

Fairness also requires attention to access: AI tools must be available to all students, not just those with high-end devices or premium internet connections.
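The accuracy comparison described under this principle can be made concrete with a per-group calculation. The sketch below uses synthetic data built to mirror the 85% and 72% figures in the example; the group labels and the 5-point review threshold are assumptions for illustration, not a recommended standard.

```python
from collections import defaultdict

# Hypothetical threshold: gaps larger than this trigger an equity review.
MAX_ACCEPTABLE_GAP = 0.05


def accuracy_by_group(records):
    """Return {group: accuracy} for an iterable of (group, predicted, actual)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        if predicted == actual:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


def accuracy_gap(per_group):
    """Difference between the best-served and worst-served group."""
    return max(per_group.values()) - min(per_group.values())


# Synthetic records: 100 per group, constructed to match the example figures.
records = (
    [("group_a", 1, 1)] * 85 + [("group_a", 1, 0)] * 15
    + [("group_b", 1, 1)] * 72 + [("group_b", 1, 0)] * 28
)

per_group = accuracy_by_group(records)
overall = sum(1 for _, p, a in records if p == a) / len(records)
gap = accuracy_gap(per_group)
needs_review = gap > MAX_ACCEPTABLE_GAP

print(f"overall accuracy: {overall:.1%}")
for group, acc in sorted(per_group.items()):
    print(f"{group}: {acc:.1%}")
print(f"gap: {gap:.1%}, equity review needed: {needs_review}")
```

The overall figure alone would hide the disparity, which is why the breakdown by group is the number to report. Accuracy is only one possible metric; error types (false positives versus false negatives) may matter more for a given system.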

Principle 2: Transparency and Explainability

Students and employees have a right to know when AI is being used to make consequential decisions about them. AI advising recommendations, financial aid packaging algorithms, predictive risk scores, and automated administrative decisions should be explainable to affected individuals.

Institutions should publish clear descriptions of which AI systems are in use, what data they process, how they work, and how affected individuals can seek review of AI-influenced decisions.

Principle 3: Privacy and Data Protection

Student education records are protected by FERPA; employee data by a range of state and federal laws. AI systems create new data privacy risks: they may combine data in ways that produce sensitive inferences not present in any individual data source, they may share data with third-party vendors in ways not anticipated by students, and they may retain data beyond appropriate windows.

Privacy-by-design principles — building privacy protections into AI systems from the start rather than adding them afterward — should be required for all institutional AI deployments.

Principle 4: Human Oversight and Accountability

Consequential decisions — those that significantly affect students’ academic careers or employees’ employment — should maintain human oversight even when AI is involved. An AI system that flags a student as high academic risk should generate a human advisor review, not an automated intervention. An AI system that screens job applications should support, not replace, human evaluation.

The institution must be able to explain and defend any AI-influenced decision that is challenged. This requires maintaining logs of AI recommendations, human reviews, and final decisions.
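The logging requirement above implies a record that ties together three things: what the AI recommended, what the human reviewer concluded, and what was finally decided. A minimal sketch follows; the field names and example values are hypothetical, and a production system would also need access controls and retention rules.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    case_id: str            # pseudonymous case reference, not a raw student ID
    system: str             # which AI system produced the recommendation
    ai_recommendation: str  # what the system suggested
    reviewer: str           # role or ID of the human who reviewed it
    reviewer_notes: str     # why the reviewer agreed or disagreed
    final_decision: str     # what the institution actually did
    decided_at: str         # ISO 8601 timestamp in UTC


def to_log_line(record: DecisionRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Hypothetical example entry.
record = DecisionRecord(
    case_id="case-0001",
    system="early-alert-model",
    ai_recommendation="flag: high academic risk",
    reviewer="advisor-17",
    reviewer_notes="Attendance drop confirmed; student recently changed majors.",
    final_decision="advisor outreach scheduled",
    decided_at=datetime.now(timezone.utc).isoformat(),
)

line = to_log_line(record)
print(line)
```

Keeping the recommendation and the final decision as separate fields makes it possible to see later how often reviewers overrode the system, which is itself useful monitoring data.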

Principle 5: Beneficial Use Aligned with Mission

AI should be deployed in service of the institution’s educational mission, not in ways that compromise it. AI surveillance systems that track student behavior in invasive ways, AI grading systems that reduce faculty-student intellectual engagement, or AI recruitment tools that optimize for yield without regard for student fit — these may produce short-term metrics while undermining long-term mission.

Principle 6: Continuous Monitoring and Improvement

AI systems are not static — they change as underlying models are updated, as use patterns shift, and as the populations they serve evolve. Responsible deployment requires ongoing monitoring for accuracy, bias, and unintended consequences, with defined processes for addressing problems discovered post-deployment.

3.3.2 Special Ethical Concerns: Predictive Analytics and Student Privacy

Student predictive analytics — AI systems that score students’ likelihood of dropping out, failing courses, or not persisting to graduation — deserve special ethical attention because they combine significant potential benefit with significant potential harm.

The benefit case: Predictive analytics have demonstrated genuine efficacy in improving student retention. Georgia State University’s GPS advising system, which uses predictive analytics to trigger proactive advising interventions, is reported to have contributed to a 22 percentage point increase in four-year graduation rates for low-income students over a decade, one of the most widely cited retention improvements in higher education.

The ethical risks:

Labeling effects: Students who know they have been flagged as “at risk” may experience something akin to stereotype threat, in which awareness of negative expectations undermines performance.

Differential intervention quality: If flagged students are contacted by less experienced advisors, or with more deficit-framing than asset-based framing, the intervention may harm rather than help.

Determinism: Predictive scores reflect historical patterns. Students who resemble previous drop-out populations may be treated as likely to drop out, creating self-fulfilling prophecies.

Mission creep: Data collected for retention purposes may be repurposed for other institutional uses without students’ knowledge.

3.4 Workforce Implications: The Human Cost and Opportunity of AI

No honest treatment of AI transformation in higher education can avoid the workforce question: what happens to the employees whose work is automated or fundamentally altered by AI?

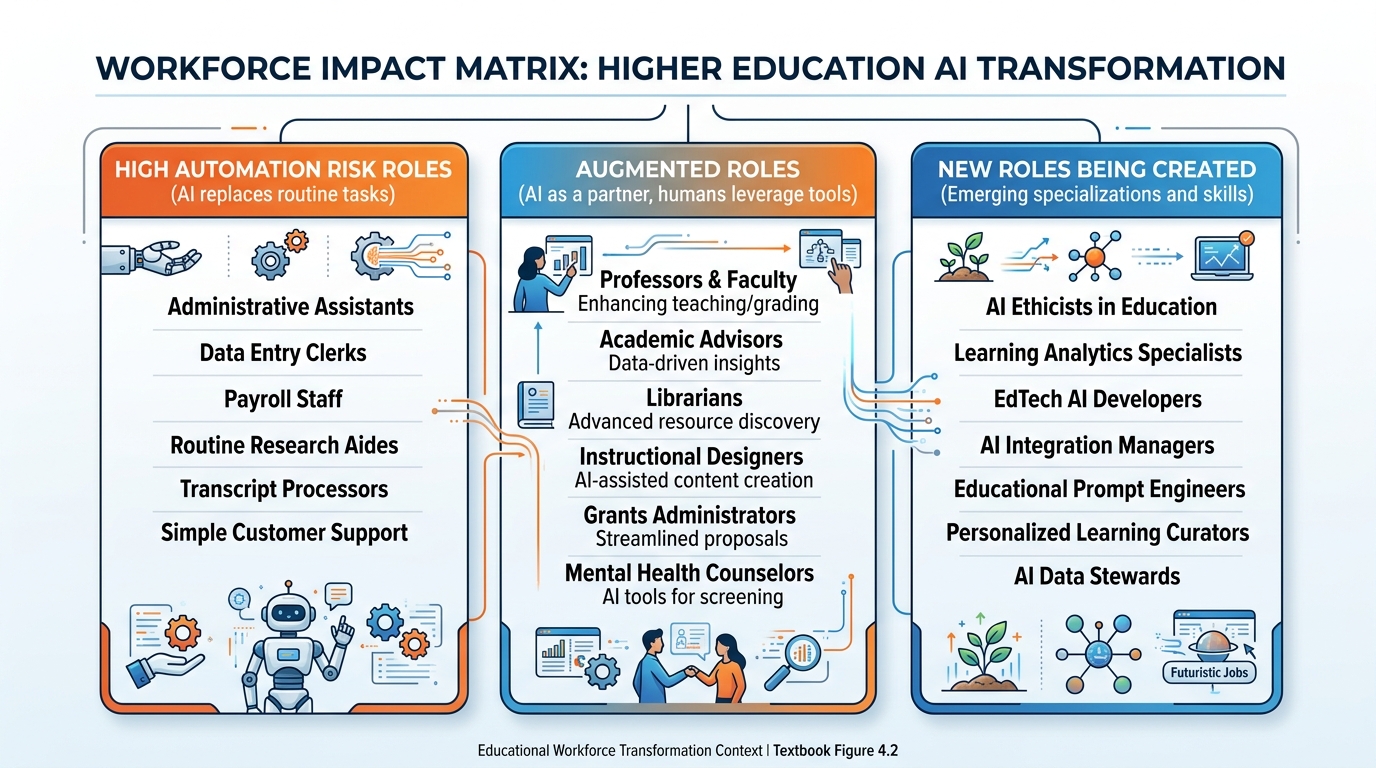

Figure 3.5: Workforce impact matrix for AI transformation in higher education. Jobs exist on a spectrum from high automation risk (routine, rules-based tasks) to low automation risk (relationship-intensive, judgment-intensive, creative work). The matrix maps higher education roles across this spectrum and identifies both risks and emerging opportunities.

3.4.1 Which Roles Are Most Affected?

Research from McKinsey, the World Economic Forum, and higher education-specific workforce studies identifies which roles are most susceptible to AI automation and which are most likely to be enhanced by it:

High automation risk (routine and rules-based):

Basic IT help desk support (Tier 1 ticketing, password resets)

Routine administrative processing (enrollment verification, transcript requests, simple financial aid calculations)

Standard data entry and report generation

Basic library cataloging and document processing

Significant augmentation (AI as partner tool):

Academic advising (AI surfaces risk data; humans provide mentorship)

Faculty course design (AI assists; humans create intellectual substance and pedagogical judgment)

Research (AI accelerates literature review and analysis; humans provide domain expertise and theoretical framing)

Financial aid counseling (AI handles calculations; humans navigate complex family situations)

IT systems management (AI handles monitoring and Tier 1 resolution; humans handle complex problems and strategy)

Growing demand (new and expanded roles):

AI/Instructional Design Specialists

Data Scientists and Learning Analytics professionals

Cybersecurity AI Engineers

AI Governance and Policy Officers

AI Pedagogy Specialists

Research Data Management professionals

3.4.2 The Institutional Obligation

Institutions have an ethical obligation to their employees that goes beyond legal compliance. This includes:

Transparency: Being honest with employees about how AI may affect their roles, rather than surprising them with automation after the fact.

Investment in reskilling: Providing meaningful professional development opportunities for employees whose roles are being changed by AI. “Take this online course” is not sufficient; structured, paid, role-specific reskilling programs are needed.

Shared benefit: When AI creates institutional efficiency savings, some portion of those savings should return to the workforce — through enhanced benefits, reduced workload, or new employment opportunities — rather than flowing entirely to budget cuts.

No use of AI to undermine collective bargaining: AI should not be deployed as a tool to circumvent fair labor practices or weaken employee bargaining power.

3.4.3 The Faculty Workforce Question

Faculty are both the most impactful AI adopters in higher education and among the most anxious about AI’s implications for their profession. Faculty anxieties center on several legitimate concerns:

Will AI teaching assistants reduce demand for adjunct faculty and graduate teaching assistants, eliminating important career pathways?

Will AI-generated course content reduce the perceived value of faculty expertise?

Will institutions use AI to justify increasing class sizes or cutting tenure-track positions?

These concerns are not paranoid — they reflect real possibilities that institutional leaders must address explicitly. Institutions that promise “AI will enhance your teaching, not replace you” and then use AI cost savings to eliminate teaching lines will destroy faculty trust in AI initiatives and in institutional leadership.

3.5 Change Management for AI Transformation

Technology transformation without change management is waste. The graveyard of higher education technology initiatives is filled with expensive systems that were deployed and abandoned because the organizational change work was not done.

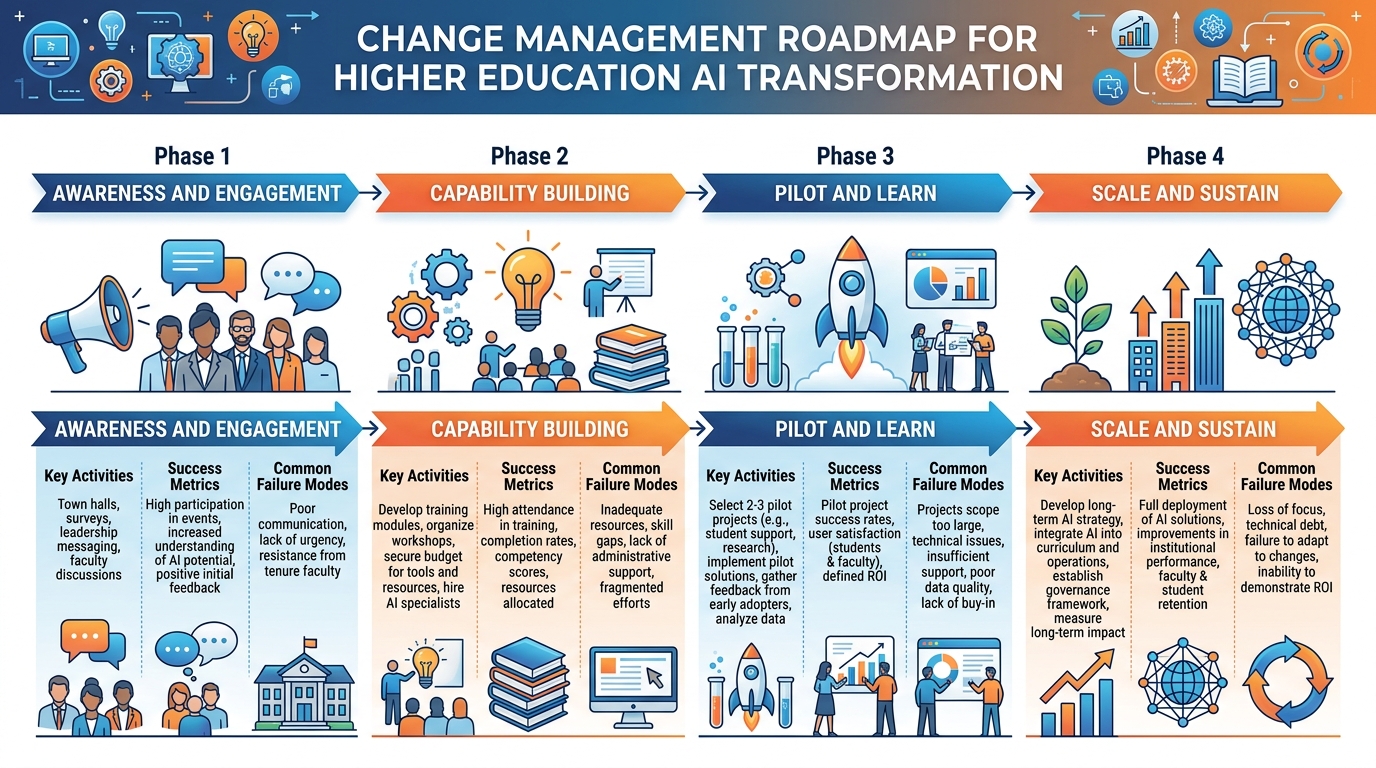

Figure 3.6: The AI Transformation Change Management Roadmap. Four phases (awareness and engagement, capability building, pilot and learn, and scale and sustain) provide a structured approach to organizational change that prevents common failure modes. Each phase requires different leadership behaviors and organizational investments.

3.5.1 The Change Management Framework

Phase 1: Awareness and Engagement (Months 1-6)

The first task of AI transformation leadership is building understanding and trust — not compliance. Faculty, staff, and students who understand why AI is being introduced, what problems it addresses, and how their concerns have been heard are far more likely to engage constructively than those who experience AI as something being done to them.

Key activities:

Town halls and open forums with leadership present and listening

Faculty governance engagement (not just informing but genuinely consulting)

Student focus groups

Department-level conversations facilitated by faculty peers

Publication of the institutional AI strategy in accessible language

Creation of feedback channels with visible responsiveness

Common failure mode: Leadership announces AI strategy before adequate consultation, creating a fait accompli that generates resistance. The order of operations matters — consultation must precede announcement.

Phase 2: Capability Building (Months 6-18)

Awareness without capability is anxiety-producing rather than enabling. Faculty and staff need real skills — not just conceptual familiarity — to work effectively with AI tools. Capability building requires:

Structured professional development programs with protected time

Peer learning communities that sustain engagement beyond formal training

Department-level champions who provide ongoing informal support

Accessible resources (guides, prompt libraries, FAQ) that people can use in the moment

Student AI literacy programs that create shared understanding across the institution

Phase 3: Pilot and Learn (Months 12-30)

Pilots should be designed to generate learning, not just demonstrate success. Key principles:

Small enough to be manageable; large enough to generate meaningful data

Diverse enough to test equity implications across different student populations

Structured with clear hypotheses, baseline data, and evaluation plans

Public enough that the whole institution learns from outcomes

Permission to fail: pilots that produce null or negative results are valuable if findings are shared

Phase 4: Scale and Sustain (Month 24+)

Scaling AI initiatives requires the same rigorous change management as piloting, applied at larger scope. Common failure mode: assuming that because something worked in a pilot, it will automatically work at scale without equivalent organizational support. Scale introduces new complexity: more diverse user populations, more integration points, more edge cases, greater visibility of failures.

3.5.2 Faculty Governance and Shared Governance

In higher education, change management without faculty governance engagement is not just strategically risky — it may be procedurally illegitimate. Accreditation standards and the principles of shared governance require faculty participation in decisions about academic programs, educational technology, and student assessment.

This is not merely a compliance issue. Faculty governance engagement, when done well, produces better AI policy and higher-quality implementations. Faculty bring deep pedagogical expertise, disciplinary knowledge, and student-centered values that improve the design of AI systems and policies. Technology decisions made without faculty input tend to be technically competent but pedagogically naive.

3.6 The AI-Native Institution: Future Scenarios

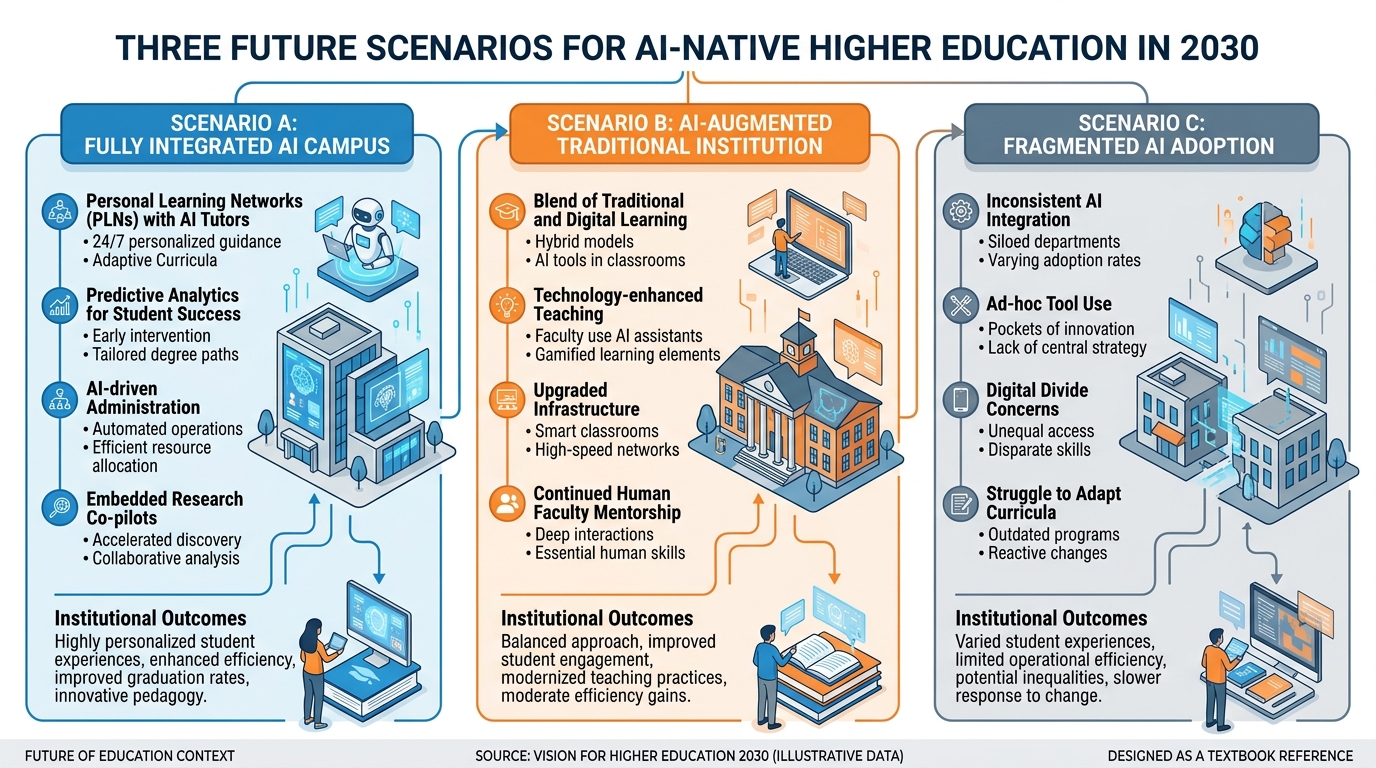

Looking beyond the current transition period, what does the AI-native university look like in 2030 and beyond? Three scenarios represent the range of institutional futures.

Figure 7:Figure 3.7: Three future scenarios for higher education in 2030. The trajectory an institution follows depends not on technology but on strategic choices made now — about governance, investment, equity, and the depth of organizational change the institution is willing to undertake.

3.6.1 Scenario A: The Fully Integrated AI Campus (2030)

In the most ambitious scenario, AI is woven into every dimension of institutional life:

Personalized learning at scale: Every student’s educational path is dynamically adapted to their learning goals, prior knowledge, pace, and career aspirations. AI tutors provide 24/7 personalized support in every course. Adaptive assessments continuously calibrate to what each student knows rather than relying on fixed tests.

AI research infrastructure: AI accelerates discovery across every discipline. Every research lab has access to domain-specific AI tools. Literature monitoring, data analysis, hypothesis generation, and manuscript preparation are AI-augmented. Research output per faculty member increases substantially.

Intelligent campus operations: Building management systems optimize energy use in real time based on occupancy patterns. Predictive maintenance prevents equipment failures before they occur. Space allocation responds dynamically to demand patterns. Safety systems use AI-enhanced monitoring.

AI-enhanced student services: Advising, financial aid counseling, mental health support triage, career services, and disability support all use AI to scale their reach while maintaining human oversight for consequential decisions. Students receive proactive, personalized support rather than generic services.

This scenario requires massive institutional investment, sustained leadership commitment, successful change management, and strong governance. It is achievable — but not for institutions that approach AI incrementally or opportunistically.

3.6.2 Scenario B: The AI-Augmented Traditional Institution (2030)

The most likely scenario for most institutions is selective, mission-aligned AI augmentation: AI is deeply integrated in high-impact domains (student success analytics, research tools, administrative efficiency) while traditional educational models continue in areas where they demonstrably serve the mission.

Faculty teach courses that are AI-enhanced but not AI-replaced. Research is dramatically faster. Administration is more efficient. Student services are more responsive. But the core model — faculty expertise, intellectual community, campus life, credentialing — continues to provide the framework.

This scenario is achievable for well-governed institutions with adequate resources and strategic discipline. It requires avoiding two opposite errors: deploying AI everywhere indiscriminately, and reflexively rejecting AI where it genuinely adds value.

3.6.3 Scenario C: Fragmented AI Adoption (2030)

The risk scenario: institutions deploy AI tactically and opportunistically, without governance or strategy. Different departments use incompatible tools. Faculty use AI inconsistently, with no shared norms. Student experiences vary dramatically depending on which faculty and programs they encounter. Equity gaps widen because well-resourced programs deploy AI well while under-resourced programs cannot.

This scenario requires no deliberate choice — it is the default if institutions do not invest in governance and strategy. It produces the costs and risks of AI without capturing the benefits, while embedding new inequities into institutional practice.

3.7 Emerging Technologies: Beyond Current Generative AI

Strategic AI planning must account for technologies beyond the current generation of LLMs, as the pace of AI capability development makes near-term obsolescence of current tools likely.

3.7.1 Multimodal AI and Immersive Learning

Current LLMs process primarily text, with growing capabilities for images and audio. The next generation of AI tools will integrate seamlessly across modalities — text, image, video, audio, 3D spatial data — enabling educational experiences that are currently impossible.

AI-enhanced virtual and augmented reality will enable laboratory simulations, field experiences, historical reconstructions, and clinical training environments that are more realistic, more accessible, and more data-rich than current digital simulations.

Multimodal assessment — evaluating student learning through spoken explanation, hand-drawn diagrams, lab technique video, and written argument simultaneously — becomes feasible at scale.

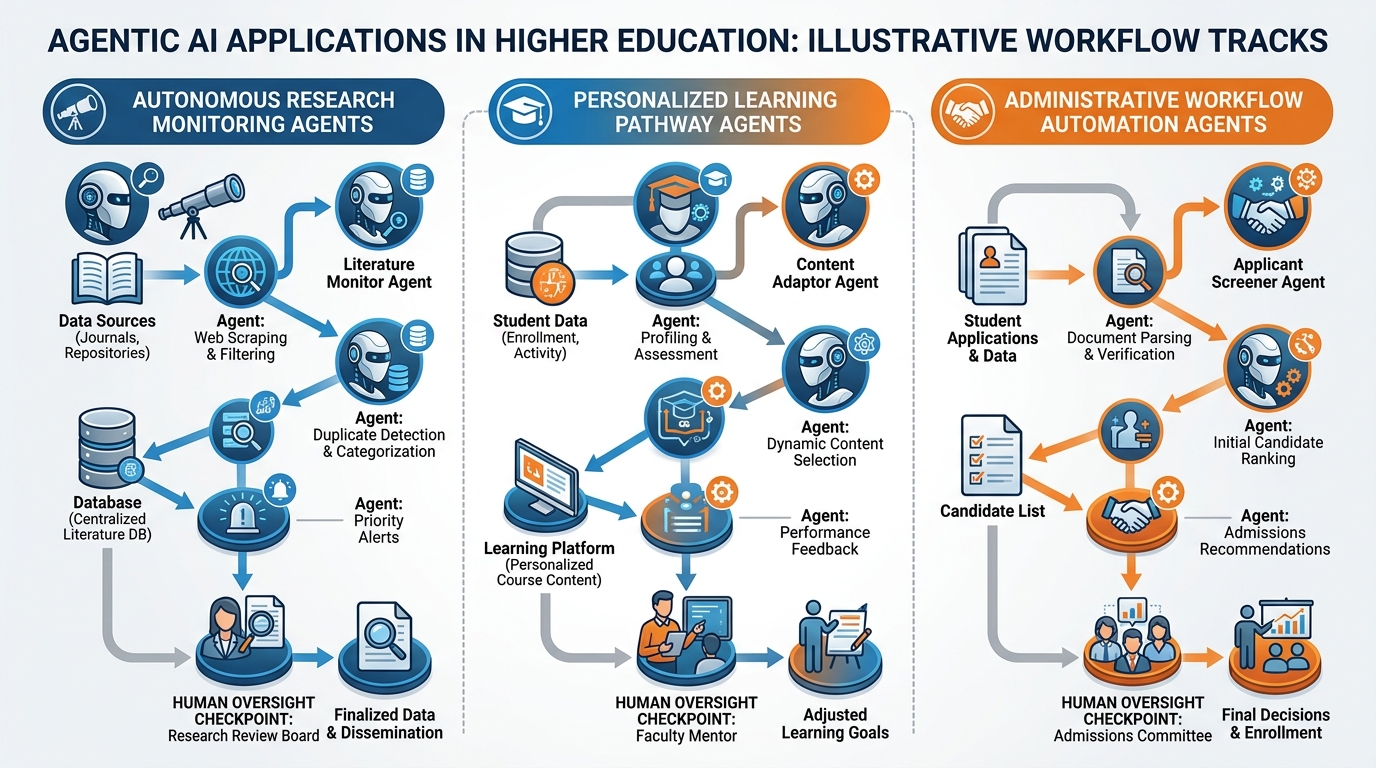

7.23.7.2 Agentic AI in Higher Education¶

Agentic AI — AI systems that take sequences of actions autonomously to accomplish goals — is moving from research labs into practical deployment. In higher education contexts, agentic AI enables:

Automated research workflows: AI agents that continuously monitor literature, flag relevant developments, summarize findings, and update research databases without human intervention

Personalized learning pathways: AI agents that continuously adapt course content, pacing, and assessment based on student performance data

Administrative automation: AI agents that handle complex multi-step administrative processes (scholarship applications, course equivalency evaluation, degree audit updates) that currently require significant human labor

Figure 8:Figure 3.8: Agentic AI in higher education. Unlike single-turn AI interactions, agentic systems execute multi-step workflows autonomously — monitoring literature, adapting learning pathways, and processing administrative workflows without constant human direction. Human oversight checkpoints remain essential for consequential decisions.
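The idea of a human oversight checkpoint inside a multi-step agentic workflow can be sketched in a few lines. This is a simplified illustration under stated assumptions: the step names are hypothetical, the "actions" are stand-in functions rather than real AI calls, and the policy of halting the whole workflow at the first unapproved consequential step is one design choice among several.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    description: str
    consequential: bool        # does this step affect a student's record or standing?
    action: Callable[[], str]  # stand-in for the work the agent would perform


def run_workflow(steps: List[Step], approve: Callable[[Step], bool]) -> List[str]:
    """Run steps in order, pausing for human approval before consequential ones.

    If a consequential step is not approved, the workflow stops there and
    records that the step is held for review.
    """
    results = []
    for step in steps:
        if step.consequential and not approve(step):
            results.append(f"HELD for human review: {step.description}")
            break
        results.append(step.action())
    return results


# Hypothetical course equivalency workflow.
steps = [
    Step("Retrieve transfer course syllabus", False, lambda: "syllabus retrieved"),
    Step("Draft equivalency recommendation", False, lambda: "draft recommendation prepared"),
    Step("Record equivalency on student record", True, lambda: "equivalency recorded"),
]

# No approval given yet: the agent does the preparatory work, then stops.
results = run_workflow(steps, approve=lambda step: False)
for entry in results:
    print(entry)
```

The agent still saves staff time on the preparatory steps, while the step that changes a student's record waits for a person.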

3.7.3 AI and the Credential

Perhaps the most profound long-term question for higher education is what happens to credentials — degrees, certificates, micro-credentials — in an AI-augmented world.

If AI can demonstrate competence in many domains that degrees currently certify, what is the signal value of a degree? Does a computer science degree mean the same thing when graduates completed many assignments with AI assistance? Employers are beginning to ask these questions explicitly.

Several responses are emerging:

Competency-based education that certifies demonstrated skills rather than seat time becomes more meaningful when AI can help students learn faster

Human-centric skill certification — credentials that specifically certify uniquely human capabilities (leadership, ethical reasoning, complex collaboration, creative direction) that AI cannot replace

AI literacy credentials — certifications that demonstrate proficiency in working effectively with AI tools, increasingly valued by employers

Process portfolios — evidence of learning journeys, not just endpoints, that demonstrate student development over time

3.8 Building the AI-Ready Institution

Drawing together the frameworks of this chapter and this book, what does it mean for an institution to be AI-ready? It means more than having the right technology — it means having the governance, culture, capacity, and commitment to deploy AI in service of educational mission.

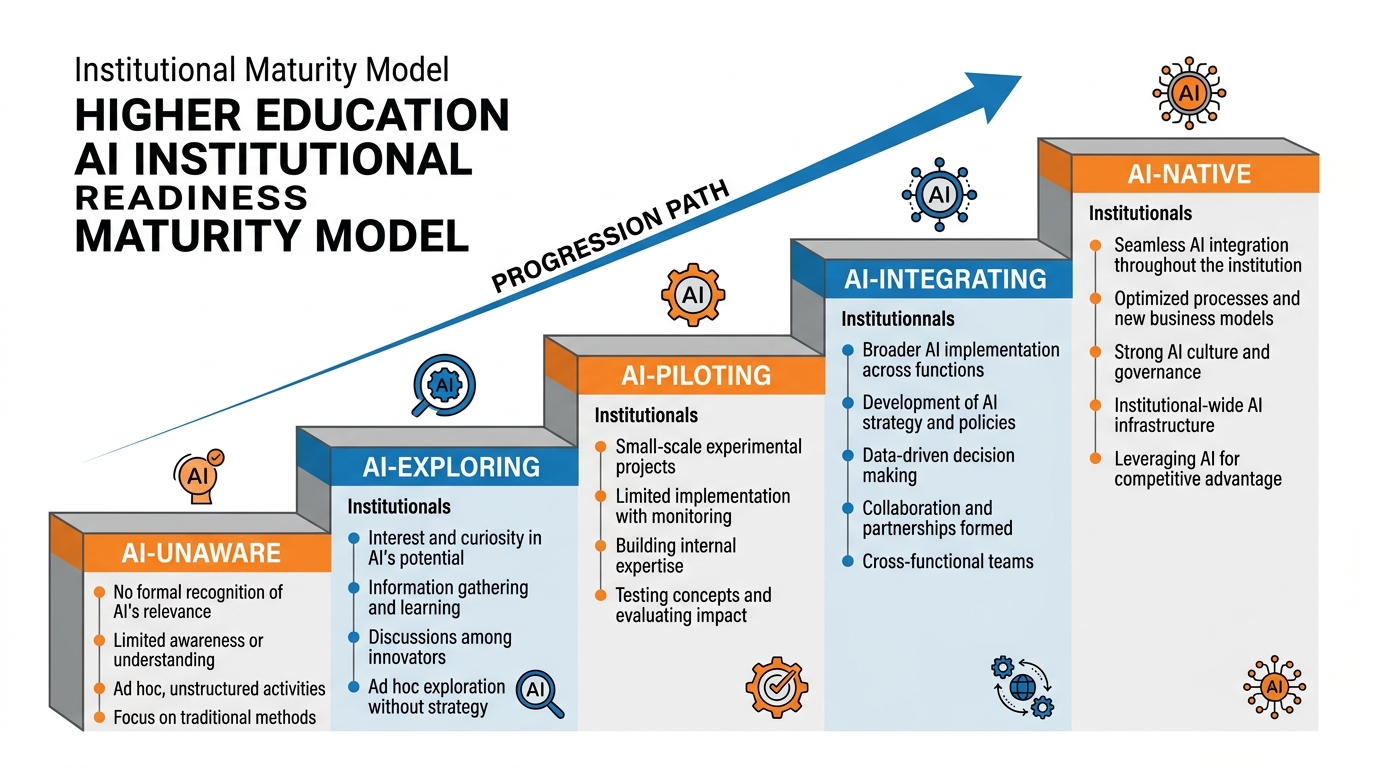

Figure 3.9: The AI Institutional Readiness Maturity Model. Five levels (AI-unaware, AI-exploring, AI-piloting, AI-integrating, and AI-native) describe the trajectory from no strategic AI activity to full AI integration. Most institutions in 2025 are at levels 2-3. Advancing requires deliberate investment in all five dimensions: strategy, governance, capability, culture, and infrastructure.

3.8.1 The Five Dimensions of AI Readiness

Strategic Clarity: Has the institution articulated a clear, mission-aligned AI strategy with specific objectives, accountability, and resource commitment? Is the strategy reviewed and updated regularly?

Governance Maturity: Does the institution have the structures, processes, and authorities needed to make responsible AI decisions? Are faculty, students, and equity advocates meaningfully included in governance?

Organizational Capability: Do faculty, staff, and administrators have the knowledge and skills to use AI effectively in their domains? Is there investment in continuous professional development?

Cultural Readiness: Is the institutional culture characterized by experimentation, evidence-based learning, and willingness to change? Or is it characterized by risk aversion, bureaucratic resistance, and change fatigue?

Technical Infrastructure: Does the institution have the data management capabilities, security architecture, integration platforms, and cloud infrastructure needed to deploy AI at scale? Is there adequate investment in cybersecurity?
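As an illustration only, a self-assessment across these five dimensions could be recorded and summarized with a short script. The sketch below is not part of the maturity model itself. It assumes each dimension is scored on the model's five levels (1 = AI-unaware through 5 = AI-native) and, as a further assumption, treats the overall readiness level as the lowest dimension score, on the reasoning that advancing requires investment in all five dimensions. The function name `assess_readiness` and the dimension keys are hypothetical.

```python
from __future__ import annotations

# Level names follow the maturity model; the numeric scale 1-5 is an assumption.
LEVELS = {
    1: "AI-unaware",
    2: "AI-exploring",
    3: "AI-piloting",
    4: "AI-integrating",
    5: "AI-native",
}

# Hypothetical keys for the five readiness dimensions described above.
DIMENSIONS = (
    "strategic_clarity",
    "governance_maturity",
    "organizational_capability",
    "cultural_readiness",
    "technical_infrastructure",
)


def assess_readiness(scores: dict[str, int]) -> dict:
    """Summarize a self-assessment of the five readiness dimensions.

    Assumption: the overall level is the minimum dimension score, so a single
    weak dimension holds the institution at that level.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimension scores: {missing}")
    for dim in DIMENSIONS:
        if scores[dim] not in LEVELS:
            raise ValueError(f"{dim} must be an integer from 1 to 5")

    overall = min(scores[d] for d in DIMENSIONS)
    # Dimensions sitting at the overall level are the ones limiting progress.
    weakest = [d for d in DIMENSIONS if scores[d] == overall]
    return {"level": overall, "label": LEVELS[overall], "weakest": weakest}


if __name__ == "__main__":
    # Illustrative scores only; not data from any real institution.
    example = {
        "strategic_clarity": 3,
        "governance_maturity": 3,
        "organizational_capability": 2,
        "cultural_readiness": 2,
        "technical_infrastructure": 4,
    }
    print(assess_readiness(example))
```

With the illustrative scores shown, the summary reports level 2 (AI-exploring) and flags organizational capability and cultural readiness as the limiting dimensions. An institution might reasonably prefer a weighted average or a rubric with written evidence per dimension; the minimum rule is simply one conservative choice.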

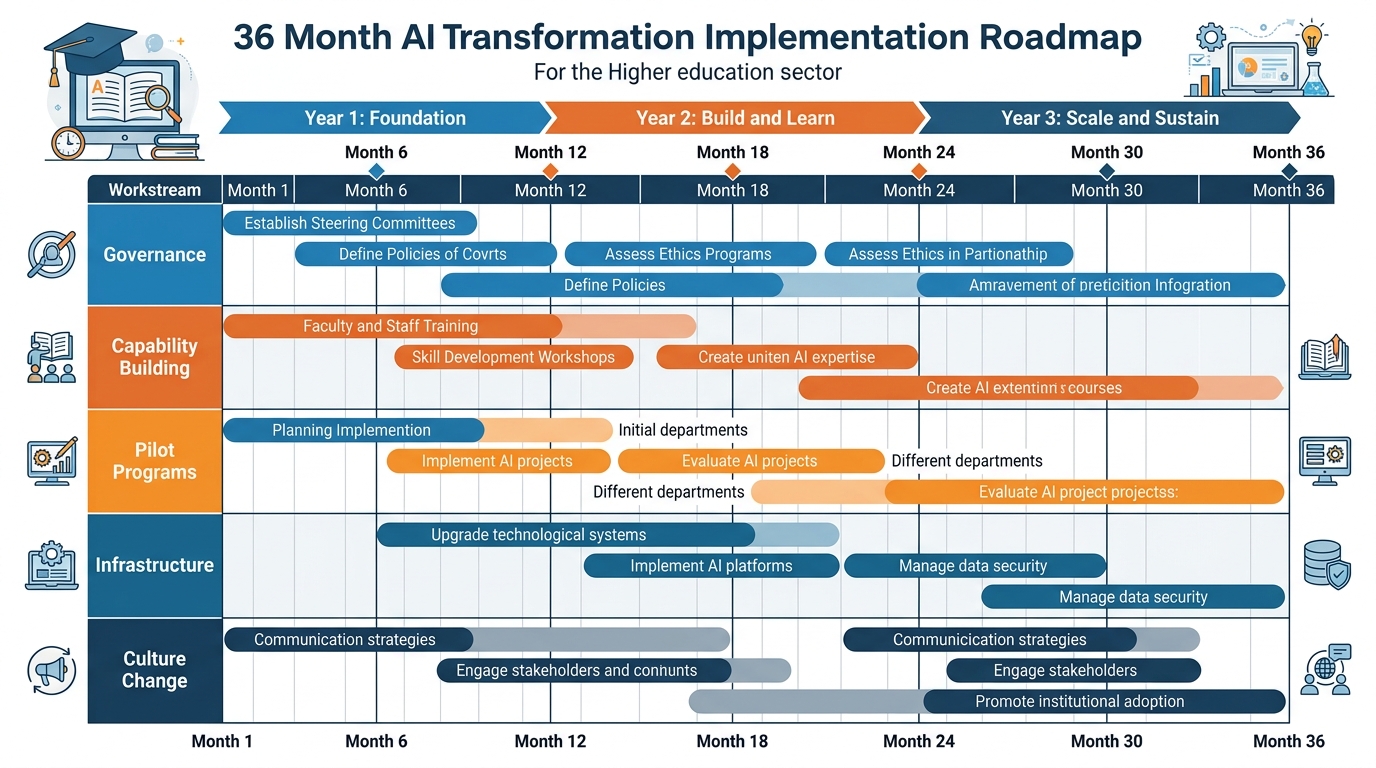

Figure 3.10: The 36-Month AI Transformation Implementation Roadmap. Structured transformation requires simultaneous progress across five workstreams (governance, capability building, pilots, infrastructure, and culture) with milestone-based accountability and regular board-level reporting. The roadmap is a starting point to be adapted to institutional context.

3.8.2 The 36-Month Roadmap

Year 1: Foundation

Establish institutional AI Council with diverse composition

Complete environmental assessment and strategy development

Launch faculty AI professional development program

Initiate 3-5 high-potential AI pilots with strong evaluation plans

Negotiate enterprise AI platform agreements with privacy protections

Develop and publish institutional AI policy

Communicate transparently about strategy, rationale, and governance

Year 2: Build and Learn

Evaluate pilots and scale successful initiatives

Deepen faculty capability building; launch student AI literacy curriculum

Address equity gaps identified in Year 1 pilots

Develop data governance framework for AI

Expand AI governance to include more faculty and student voices

Conduct first formal AI ethics review of deployed systems

Publish first AI impact report for institutional accountability

Year 3: Scale and Sustain

Scale successful initiatives across the institution

Integrate AI into strategic planning cycles and accreditation self-studies

Develop institutional AI maturity assessment and continuous improvement process

Build external partnerships (peer institutions, research consortia, industry) to accelerate learning

Establish AI scholarship, student innovation programs, and research initiatives

Review governance structures for adequacy at scale

Develop succession planning for AI expertise — ensuring institutional knowledge is not concentrated in a few individuals

Summary

This chapter has provided the strategic and governance framework for leading AI transformation in higher education. The key insights:

Strategy before technology: The institutions that capture AI’s potential are those that develop clear, mission-aligned strategies before deploying tools. Technology without strategy creates technical debt without educational return.

Governance is the differentiator: The quality of AI outcomes in higher education is largely determined by governance quality — the structures, processes, and values that shape which tools are deployed, how, and for whom.

Responsible AI is not optional: The ethical dimensions of AI in higher education — equity, privacy, transparency, human oversight — are not compliance burdens but expressions of institutional mission and commitments to students.

Workforce welfare is a leadership obligation: AI transformation creates both risks and opportunities for employees. Institutions have an obligation to invest in reskilling, share the benefits of AI efficiency, and protect vulnerable workers — particularly contingent faculty.

Change management is the implementation work: Technology transformation without organizational change management fails. The human work — building understanding, developing capability, creating feedback loops, earning trust — is as important as the technical work.

The AI-native institution is achievable: For institutions willing to invest strategically and lead courageously, the AI-native institution of 2030 — more personalized, more effective, more equitable, and more efficient — is within reach. It requires not just better technology but better institutional leadership.

The academy has survived — and often led — transformations of comparable magnitude: the research university model, universal access, the internet age. Generative AI is the next great transformation. The institutions that approach it with clarity, intentionality, and a genuine commitment to educational mission will emerge stronger. Those that approach it reactively, defensively, or opportunistically will find themselves on the wrong side of history.

Key Terms

AI Strategy: A documented, mission-aligned plan for how an institution will adopt and govern AI technologies, including specific objectives, accountability structures, resource commitments, and ethical frameworks.

Institutional AI Council: The primary governance body responsible for AI policy, procurement review, ethics oversight, and strategic coordination for AI initiatives across a college or university.

Responsible AI: The set of principles and practices that guide ethical AI development and deployment, typically including fairness, transparency, privacy, human oversight, and mechanisms for accountability and correction.

Predictive Analytics: Statistical and machine learning methods applied to institutional data to forecast student outcomes (retention, graduation, academic performance) and support proactive intervention.

Data Governance: The policies, processes, and technical controls that ensure institutional data is managed appropriately, including access controls, retention schedules, consent practices, and vendor data agreements.

Shared Governance: The principle, foundational to American higher education, that faculty, administrators, and sometimes students share authority over decisions about academic programs, educational policies, and institutional direction.

Change Management: The structured organizational process of preparing, equipping, and supporting individuals through institutional transitions. In AI transformation, change management addresses culture, capability, communication, and resistance.

Agentic AI: AI systems capable of executing multi-step workflows autonomously to accomplish complex goals with minimal human intervention during execution, though they still require deliberately designed human oversight and monitoring.

AI Readiness Maturity Model: A framework for assessing an institution’s current state and trajectory on AI adoption, typically organized in levels from initial/unaware through optimizing/native.

Privacy-by-Design: An approach to system development that incorporates privacy protections into system architecture from the outset, rather than adding them as an afterthought.

Cognitive Augmentation: The use of AI tools to enhance human cognitive capabilities (memory, analysis, pattern recognition, language), enabling more effective performance on complex tasks rather than replacing human judgment.

Micro-credential: A short-form credential that certifies competence in a specific skill or knowledge domain, often stackable toward larger qualifications. AI is accelerating the micro-credential market by enabling faster, more personalized learning pathways.

FERPA: The Family Educational Rights and Privacy Act (1974), which protects the privacy of student education records and governs how institutions may share student data. It is critical compliance context for AI systems that process student information.

Algorithm Audit: A systematic evaluation of an AI algorithm’s outputs for accuracy, bias, and unintended consequences, conducted by internal or independent reviewers. Increasingly recommended as standard practice before deploying AI in consequential educational decisions.

Competency-Based Education (CBE): An educational model in which students advance by demonstrating mastery of specific competencies rather than completing a fixed number of credit hours. AI accelerates CBE by enabling personalized, adaptive pathways to demonstrated competency.

Discussion Questions

The Governance Design Challenge: A regional comprehensive university with 8,000 students wants to deploy an AI-powered early alert system that will automatically contact students who show signs of academic risk based on LMS engagement data, grade trends, and attendance patterns. Design the governance process that should precede deployment. What decisions need to be made? Who should make them? What ethical considerations require explicit policy resolution?

The Workforce Equity Question: A community college identifies that AI automation could reduce the cost of its financial aid counseling function by 40% by automating routine inquiries and document processing. The financial aid office employs 12 staff, many of whom are long-term employees who themselves represent the demographic communities the college serves. What obligation does the college have to these employees? What does a responsible approach to implementing this AI look like?

Scenario Planning: Which of the three 2030 scenarios described in Section 3.6 is most likely for your institution or a similar institution in your state? What specific institutional decisions made in the next two years will most determine which scenario materializes? What are the two or three highest-leverage strategic choices?

The Credential Question: If AI can help students demonstrate competencies faster and more effectively, does this mean higher education should move more aggressively toward competency-based education and micro-credentials? What is lost and what is gained compared to traditional degree models?

Faculty Governance and AI: A provost wants to deploy an AI teaching assistant in all sections of a required first-year writing course without going through faculty governance, arguing that the AI is a tool like a word processor, not a change in academic program. Faculty Senate leadership disagrees. Who is right? What should happen?

Discussion Guidelines

For your initial post:

Provide a 350-500 word substantive response that engages with the complexity of the question rather than providing a simple answer.

Include at least one citation from a scholarly source, EDUCAUSE research report, or peer-reviewed journal.

Take a clear and defensible position where appropriate; acknowledge countervailing considerations.

For peer responses:

Respond substantively to at least TWO classmates (150+ words each).

Engage with specifics: critique the reasoning, identify a factor not considered, or provide evidence that supports or challenges your peer’s position.

“Nice post!” and “I hadn’t thought of that” without elaboration do not qualify as substantive responses.

Exercises

Exercise 3.1: AI Strategy Audit

Select a higher education institution that has publicly released an AI strategy document, AI use policy, or significant AI initiative description (check the institution’s website, EDUCAUSE presentations, or press releases). Evaluate the strategy or policy against the framework presented in this chapter:

Does it include strategic objectives with measurable outcomes?

Does it describe governance structures?

Does it address equity and access?

Does it include workforce implications?

Does it specify ethical principles?

Write a 500-word evaluation identifying strengths, gaps, and specific recommendations for improvement.

Exercise 3.2: Governance Design Simulation

In groups of 4-6, simulate an Institutional AI Council. Each participant takes a role: CIO, Provost, Faculty Senate representative, student representative, equity officer, or legal counsel (groups with fewer than six members may combine roles). The council must make a decision on the following scenario:

A vendor is offering a 12-month pilot of an AI proctoring system for online exams. The system records video and audio of students during exams, uses eye-tracking and behavioral analysis to flag potential cheating, and charges $8/student/exam. The online learning program director strongly supports it. Three faculty members have written letters of protest. The student government has not yet taken a position.

Conduct the council discussion, make a decision, and document the decision with rationale, conditions, and dissenting views.

Exercise 3.3: Workforce Impact Analysis

Interview three individuals currently working in higher education administrative, faculty, or student services roles about their current use of AI and their perceptions of how AI may affect their work in the next five years. (If interviews are not feasible, use published accounts from EDUCAUSE, The Chronicle of Higher Education, or Inside Higher Ed.)

Synthesize your findings into a 500-word analysis that addresses: What AI uses are already occurring? What concerns do employees have? What professional development do they feel they need? What institutional support would make them more confident about AI’s role in their work?

Exercise 3.4: Future Scenario Development

Develop your own fourth scenario for higher education in 2030 — one not covered by the three scenarios in this chapter. Your scenario should:

Be plausible given current technological trajectories and institutional dynamics

Address at least three of the dimensions covered in this chapter (governance, workforce, ethics, pedagogy, credentials)

Describe what the scenario means for students, faculty, staff, and institutional viability

Identify the specific decisions and investments that would lead to this scenario