“The difference between a tool and a weapon is who is holding it — and whether they know what they’re building.”

You’ve just completed Day 1.

Take a moment to appreciate that. You walked into this room knowing that AI existed. You’re leaving the first half of this book knowing how to use it — to query it, prompt it, structure it, interrogate it, and deploy it inside Vertex AI at enterprise scale.

That is not nothing. That is, in fact, where most organizations stop.

And that’s exactly why you’re about to leave them behind.

1. The Ladder

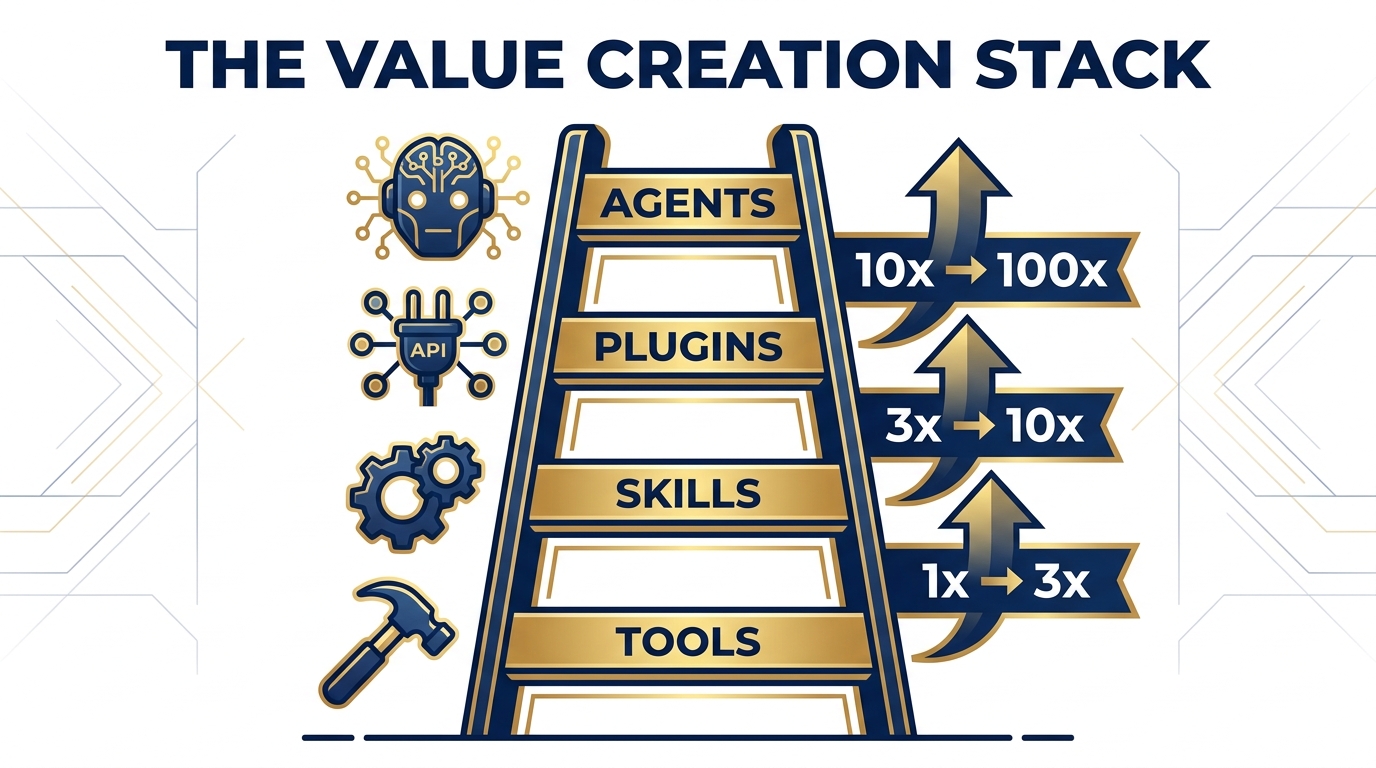

The Value Creation Stack. Each rung multiplies the value of the one below it. Most organizations live on Rung 1.

There is a four-rung ladder that separates AI users from AI operators. Most people never make it past the first rung. The ones who do — the ones who climb all four — don’t just work faster. They work differently. They build systems. They lead packs.

Here’s the ladder.

Rung 1: Tools

You use them.

Gemini. AI Studio. NotebookLM. Vertex AI. These are the tools. They are extraordinary. They represent the most concentrated deployment of intelligence in the history of humanity, accessible to anyone with a browser and a prompt.

And yet.

A great tool with no skill is a hammer without a carpenter. The hammer doesn’t care if you’re building a house or smashing your thumb. The model doesn’t care if your prompt is vague, circular, or leading it toward a hallucination. It will answer either way. It will answer confidently either way.

Day 1 was about learning to wield the hammer. You learned the grain of the wood. You learned how context windows fill and how to pace your prompts. You learned that grounding to real data is the difference between a useful analysis and a convincing fiction.

You are now, officially, a competent tool user. In 2024, that was a competitive advantage. In 2026, it’s table stakes.

Rung 2: Skills

You configure them.

A skill is a tool with memory of purpose. It’s a reusable prompt template, a structured persona, a calibrated instruction set that produces consistent results across different inputs, different users, different days.

Think about the difference between a surgeon who picks up a scalpel for the first time and a surgical team with a pre-op protocol, a post-op checklist, and fifteen years of refined procedure. Both are using the same tool. One is improvising. One is operating.

Skills are how you stop improvising.

When you build a skill — a Gem in Google’s ecosystem, a custom agent persona in AI Studio, a structured prompt template in NotebookLM — you encode your best judgment into a repeatable system. You stop having to be in the room for every interaction. The skill carries your expertise forward, even when you’re not there.
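As a minimal sketch of what encoding judgment into a repeatable system can look like, here is a skill modeled in plain Python as a persona, a set of instructions, and a template with named slots. The `Skill` class, its field names, and the example wording are hypothetical illustrations. This is not how Gems or AI Studio store anything internally.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class Skill:
    """Hypothetical container for a reusable prompt: persona + instructions + template."""
    name: str
    persona: str
    instructions: str
    template: Template

    def render(self, **inputs: str) -> str:
        # Template.substitute raises KeyError if a named slot is left unfilled,
        # so an incomplete request fails loudly instead of producing a vague prompt.
        task = self.template.substitute(**inputs)
        return f"{self.persona}\n\n{self.instructions}\n\n{task}"


# Example skill; the wording is illustrative only.
summary_skill = Skill(
    name="incident-summary",
    persona="You are a careful analyst who cites sources.",
    instructions="Answer in three bullet points. Flag any unverified claim.",
    template=Template("Summarize the following report for $audience:\n$report"),
)

prompt = summary_skill.render(audience="executives", report="Q3 outage notes ...")
print(prompt)
```

The same `summary_skill` can be rendered for different audiences and reports on different days, which is the consistency the surgical-team analogy points at.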

This is the first rung of leverage. You are no longer just working. You are multiplying your work.

A great skill with no plugin, though, is a sharp knife that can’t reach the ingredient. The skill knows what to do. It just can’t see the world yet.

Rung 3: Plugins

You connect them.

A plugin is a bridge. It’s the connection between your AI system and the living, breathing data of the world — APIs, databases, MCP servers, real-time feeds, internal enterprise systems.

Without plugins, your AI knows what it knew when it was trained, plus whatever you pasted into the context window. That’s a remarkably capable analyst who hasn’t checked their email in six months.

With plugins, your AI knows what’s happening now. It can query your CRM. It can pull the latest threat intelligence. It can check inventory, read a contract, scan the morning briefing, and synthesize all of it into a single coherent analysis before you’ve finished your first coffee.
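One way to picture the bridge is a small registry of named functions that fetch current data and place it in front of the model. The sketch below assumes a hypothetical `crm_lookup` plugin backed by an in-memory dictionary. A real plugin would call an API, a database, or an MCP server, and none of the names here come from a real product.

```python
from typing import Callable, Dict

# Hypothetical stand-in for a live system of record.
_FAKE_CRM: Dict[str, dict] = {
    "acme": {"status": "active", "open_tickets": 3},
}


def crm_lookup(account: str) -> dict:
    """Return the current record for an account, or an empty dict if unknown."""
    return _FAKE_CRM.get(account.lower(), {})


# Registry mapping plugin names to callables (an assumption for illustration).
PLUGINS: Dict[str, Callable[[str], dict]] = {"crm_lookup": crm_lookup}


def build_grounded_prompt(question: str, plugin: str, arg: str) -> str:
    """Fetch fresh data through a plugin and prepend it to the question."""
    data = PLUGINS[plugin](arg)  # KeyError if the plugin is not registered
    return f"Context from {plugin}: {data}\n\nQuestion: {question}"


grounded = build_grounded_prompt("Is Acme at risk of churn?", "crm_lookup", "Acme")
print(grounded)
```

The model never needs to have seen the CRM during training. The plugin supplies the current record at request time.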

This is the second rung of leverage. Your system is no longer isolated. It’s connected — to your data, your infrastructure, your organization’s nervous system.

And when you connect a skilled AI system to live data, something interesting happens. It starts to become capable of something that tools alone could never do.

It starts to become an agent.

Rung 4: Agents

You supervise them.

An agent is a skilled, connected AI system that can act autonomously — that can take a goal, break it into steps, execute those steps using tools and plugins, self-correct when it encounters errors, and deliver a result without requiring you to hold its hand through every decision.
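Stripped of the model itself, the execute-and-self-correct part of that definition can be sketched as a loop over steps with bounded retries. This is an illustrative simplification under stated assumptions: the steps are ordinary Python callables, the planning stage that breaks a goal into steps is left out, and `run_agent`, `make_flaky`, and `summarize` are hypothetical names.

```python
from typing import Callable, List


def run_agent(steps: List[Callable[[], str]], max_retries: int = 2) -> List[str]:
    """Run each step in order, retrying a failed step up to max_retries times."""
    results: List[str] = []
    for step in steps:
        for attempt in range(max_retries + 1):
            try:
                results.append(step())
                break  # step succeeded, move on to the next one
            except Exception as exc:
                if attempt == max_retries:
                    # Out of retries: stop and surface the failure to a human.
                    raise RuntimeError(f"step {step.__name__!r} failed") from exc
    return results


def make_flaky(fail_times: int) -> Callable[[], str]:
    """Build a step that fails a fixed number of times before succeeding."""
    calls = {"n": 0}

    def fetch() -> str:
        calls["n"] += 1
        if calls["n"] <= fail_times:
            raise ConnectionError("transient failure")
        return "fetched"

    return fetch


def summarize() -> str:
    return "summarized"


results = run_agent([make_flaky(1), summarize])
print(results)
```

The retry limit matters as much as the retry. Without it, the loop would keep going on a step that can never succeed.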

A great agent with no supervision, however, is a contractor with no scope of work. Capable. Energetic. Expensive. Potentially disastrous.

The agent is the top of the ladder. But it’s also the beginning of a new discipline — the discipline of supervision at scale. Because when your agents are running, your leverage is enormous. And so is your surface area for error.

We’ll come back to that.

2. The Shift

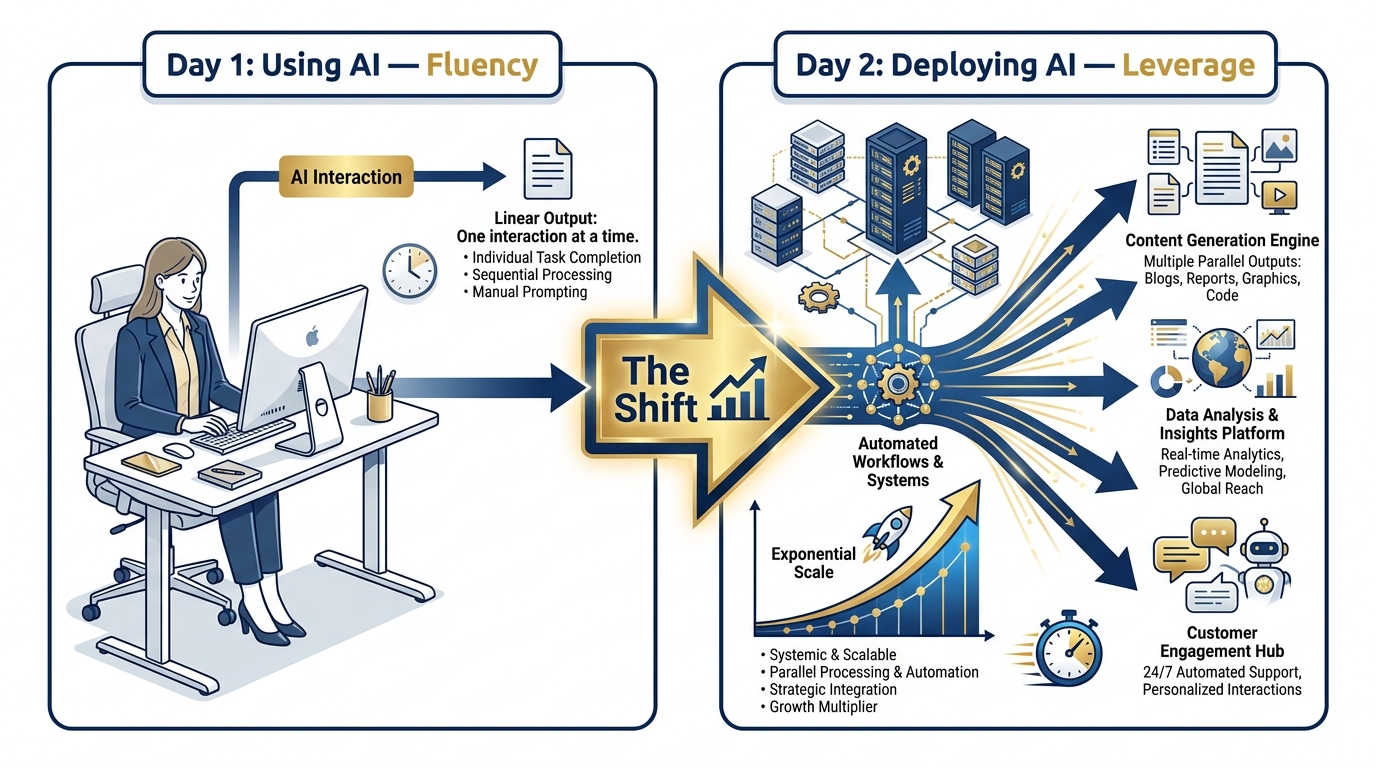

The Fundamental Shift. Day 1 builds fluency. Day 2 builds leverage. Both are necessary. Only one scales.

The shift from Day 1 to Day 2 is not a technical upgrade. It’s a philosophical one.

Day 1 was about fluency — learning to speak the language of these models, to understand how they think, where they excel, where they fail, and how to structure your communication to get consistently useful output. That fluency is real and it matters. You cannot deploy AI well without understanding AI first.

Day 2 is about leverage — building systems that work while you’re in a meeting. That work while you’re asleep. That work while you’re deployed. That carry your judgment, your institutional knowledge, your analytical rigor into interactions and workflows you’re not physically present for.

This is a different kind of work. It’s the work of an architect rather than a laborer. It’s the work of someone who builds systems rather than someone who operates them one task at a time.

The transition is subtle at first. You’re still writing prompts. You’re still thinking about context. But the prompts you’re writing aren’t for a single interaction — they’re for a system that will run thousands of interactions. The context you’re managing isn’t for one conversation — it’s for an agent that will have many conversations on your behalf.

This is where the exponential begins.

3. The Lukos Angle

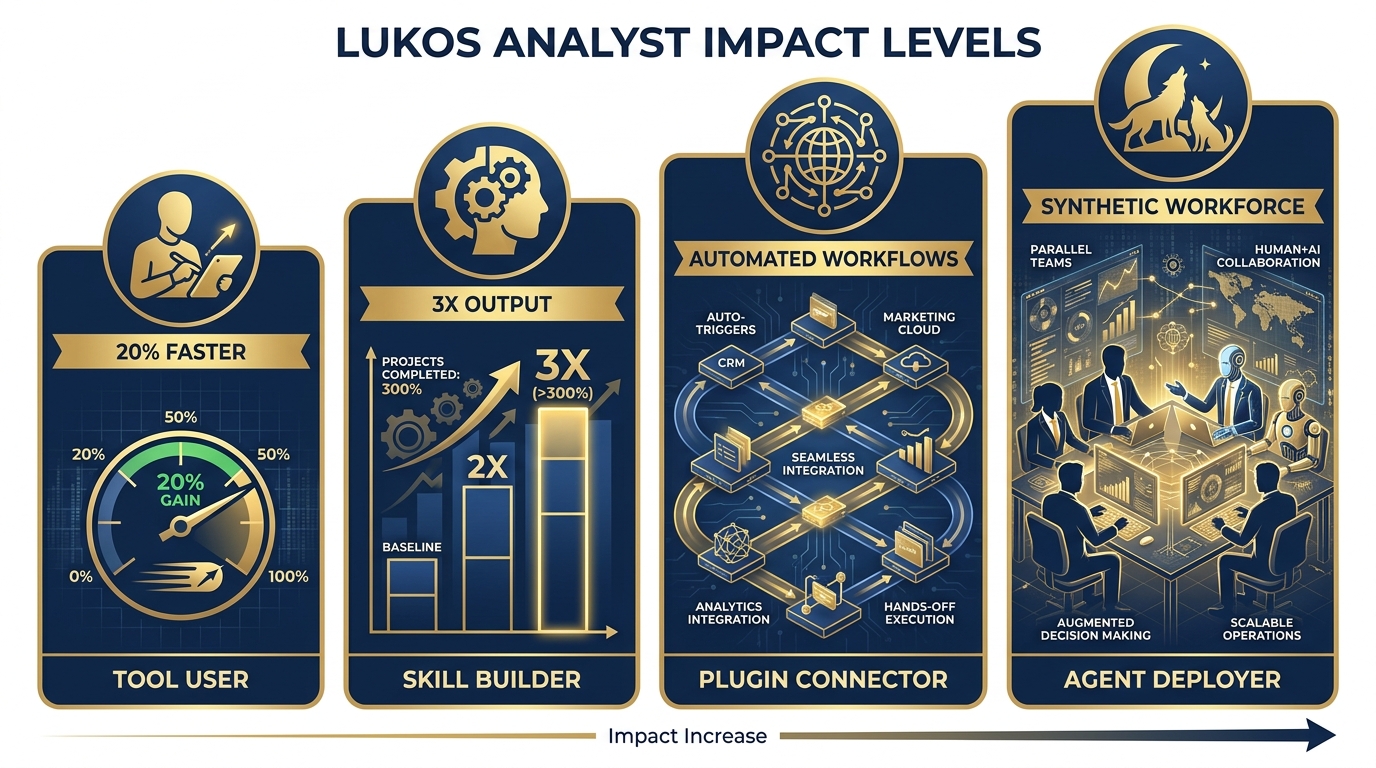

The Lukos Impact Ladder. Each rung of the value creation stack transforms what’s possible for Lukos operations.

Let’s make this concrete for the room.

At Lukos, the value creation stack maps to a set of analyst profiles that represent genuinely different operational realities — not just different speeds.

The Tool User: 20% Faster

This analyst has learned to use Gemini, AI Studio, and NotebookLM. They can prompt well. They finish their reports faster, their research is better structured, and they make fewer errors on first draft. They are more productive than they were before.

They are also, functionally, doing the same job they were doing before — just faster. The ceiling on their output is still the ceiling of human-hours. They have forty hours in their week. AI has given them back maybe eight of those hours.

This is a real win. It’s just not a transformation.

The Skill Builder: 3x Output

This analyst has stopped just using tools and started building skills. They have a library of reusable prompt templates for their most common analysis types. They have configured personas that maintain consistent voice and analytical framing. They have structured their workflow so that routine tasks are handled by the system, not by them personally.

Now the ceiling has moved. They’re not just faster at individual tasks. They’re completing more of them, at higher quality, with less cognitive overhead. The work that used to take two hours now takes forty-five minutes, and the output is more consistent because it doesn’t depend on how much coffee they had that morning.

This analyst is beginning to think like an architect.

The Plugin Connector: Automated Workflows

This analyst — or more likely, this team — has connected their AI skills to live data and internal systems. Their analysis workflows now pull from real-time feeds automatically. Their reporting systems generate first-draft outputs before anyone sits down at their desk. Their monitoring systems flag anomalies and surface them to human attention, rather than requiring humans to manually scan for them.

The team hasn’t gotten larger. But its effective output has. The AI is doing the routine, the repetitive, the first-pass. The humans are doing the judgment, the escalation, the final call.

This is a qualitative change in how the team operates. They are not just faster. They are structurally different.

The Agent Deployer: Synthetic Workforce

This operation has agents running alongside the human workforce. Not replacing it — running alongside it. The synthetic workforce handles defined domains: intake processing, initial analysis, data synthesis, pattern flagging, report generation. The human workforce handles judgment, relationships, escalation, and the cases that fall outside the agent’s defined scope.

The operation has effectively doubled its analytical capacity without doubling its headcount. Its response times are faster. Its coverage is broader. Its people are doing the work that humans are actually suited for, rather than the work that can be systematized.

This is the competitive moat. This is what “deploying AI” looks like at full expression.

4. The Warning

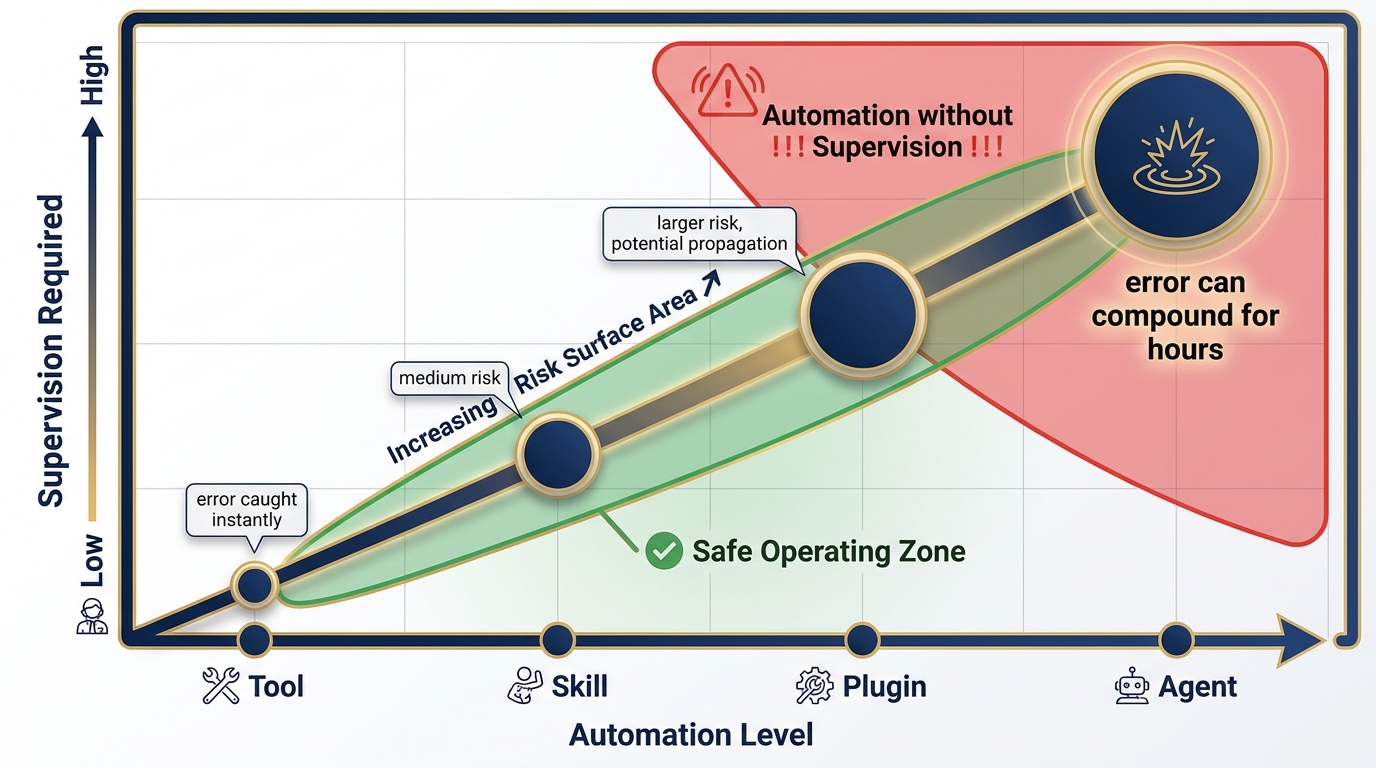

The Risk Stack. Automation and supervision must scale together. The failure to understand this is where most AI deployments break down.

Here is the thing no one tells you at the AI hype conference.

The value creation stack is also a risk creation stack.

A tool error is usually caught fast. You ask Gemini something, it gives you a wrong answer, you notice it’s wrong, you ask again. The feedback loop is seconds. The blast radius is a single conversation.

A skill error is slightly larger. If your prompt template has a systematic flaw, it produces systematically flawed outputs across every use of that template. You might not notice for days. The blast radius is larger, but it’s still contained — because a human is still in the loop on every output.

A plugin error is more dangerous. If your connected system is pulling from the wrong data source, or misinterpreting a live feed, it’s building analysis on a corrupted foundation. And it’s doing it automatically, at scale, potentially before anyone reviews the output.

An agent error can run for thirty minutes before anyone notices. Or three hours. The agent is autonomous. It’s executing. It’s producing results and taking actions. And if its core assumptions are wrong, or its scope has drifted, or it’s encountered an edge case it wasn’t designed for — every minute it runs, the error compounds.

This is not a reason to avoid agents. It’s a reason to build the supervision discipline before you deploy the agents. The supervision must scale with the automation. The review processes, the exception flags, the human-in-the-loop checkpoints — these aren’t bureaucratic overhead. They’re the structural integrity of the system.
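A human-in-the-loop checkpoint can be as simple as a gate that decides which outputs ship automatically and which wait for review. The sketch below assumes each agent output carries a confidence score between 0 and 1 and an in-scope flag. Both fields, the `AgentOutput` class, and the 0.8 threshold are hypothetical choices for illustration, not recommended values.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class AgentOutput:
    text: str
    confidence: float  # assumed score in [0, 1]; how it is produced is out of scope here
    in_scope: bool     # whether the task fell inside the agent's defined domain


def triage(
    outputs: List[AgentOutput], min_confidence: float = 0.8
) -> Tuple[List[AgentOutput], List[AgentOutput]]:
    """Split outputs into (auto_approved, needs_human_review)."""
    auto: List[AgentOutput] = []
    review: List[AgentOutput] = []
    for out in outputs:
        if out.in_scope and out.confidence >= min_confidence:
            auto.append(out)
        else:
            # Exception flag: low confidence or out of scope goes to a person.
            review.append(out)
    return auto, review


batch = [
    AgentOutput("Routine intake summary", confidence=0.95, in_scope=True),
    AgentOutput("Ambiguous pattern match", confidence=0.55, in_scope=True),
    AgentOutput("Request outside defined domain", confidence=0.90, in_scope=False),
]
auto, review = triage(batch)
print(len(auto), "auto-approved;", len(review), "flagged for review")
```

Tightening `min_confidence` sends more work to humans, and loosening it sends less. Choosing that number is a supervision decision, not a technical one.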

The wolf that runs without the pack has no one to tell it when it’s heading in the wrong direction.

5. What Comes Next

Day 2 is a build.

Chapter 6 will give you the forge — the capability to build AI-native applications that connect your skills to real-world systems. Chapter 7 will build your skill library — a reusable, shareable, institutional repository of your team’s best analytical thinking, encoded into AI systems. Chapter 8 will show you the synthetic wolfpack — how to design, deploy, and supervise a multi-agent operation.

By the end of Chapter 8, you will have the full stack operational. Not as a concept. Not as a demo. As a working system, calibrated to your context, built by your hands.

Every rung of the ladder will be in place.

The wolf that only uses tools is a wolf that hunts alone. It’s faster than it was before. It can cover more ground than it could before. But it is still one wolf, hunting one target, with one pair of eyes.

The wolf that deploys agents leads the pack. It sets the direction, supervises the hunt, and brings the full force of a coordinated operation to bear on any target it chooses.

That wolf doesn’t just succeed more often. It operates at a different level of reality.

Day 2 starts now.

Let’s build.

The chapters ahead assume you are ready to shift from using AI to deploying it. If anything from Day 1 still feels uncertain — prompt structure, context management, grounding, Vertex AI configuration — the appendices have you covered. There is no shame in drilling the fundamentals. The best operators always know their basics cold.